如果你也在 怎样代写C++这个学科遇到相关的难题,请随时右上角联系我们的24/7代写客服。

C++ 是一种高级语言,它是由Bjarne Stroustrup 于1979 年在贝尔实验室开始设计开发的。 C++ 进一步扩充和完善了C 语言,是一种面向对象的程序设计语言。 C++ 可运行于多种平台上,如Windows、MAC 操作系统以及UNIX 的各种版本。

statistics-lab™ 为您的留学生涯保驾护航 在代写C++方面已经树立了自己的口碑, 保证靠谱, 高质且原创的统计Statistics代写服务。我们的专家在代写C++代写方面经验极为丰富,各种代写C++相关的作业也就用不着说。

我们提供的C++及其相关学科的代写,服务范围广, 其中包括但不限于:

- Statistical Inference 统计推断

- Statistical Computing 统计计算

- Advanced Probability Theory 高等概率论

- Advanced Mathematical Statistics 高等数理统计学

- (Generalized) Linear Models 广义线性模型

- Statistical Machine Learning 统计机器学习

- Longitudinal Data Analysis 纵向数据分析

- Foundations of Data Science 数据科学基础

计算机代写|C++作业代写C++代考|Think Parallel

For those new to parallel programming, we offer this Preface to provide a foundation that will make the remainder of the book more useful, approachable, and self-contained. We have attempted to assume only a basic understanding of $C$ programming and introduce the key elements of $\mathrm{C}++$ that $\mathrm{TBB}$ relies upon and supports. We introduce parallel programming from a practical standpoint that emphasizes what makes parallel programs most effective. For experienced parallel programmers, we hope this Preface will be a quick read that provides a useful refresher on the key vocabulary and thinking that allow us to make the most of parallel computer hardware.

After reading this Preface, you should be able to explain what it means to “Think Parallel” in terms of decomposition, scaling, correctness, abstraction, and patterns. You will appreciate that locality is a key concern for all parallel programming. You will understand the philosophy of supporting task programming instead of thread programming – a revolutionary development in parallel programming supported by TBB. You will also understand the elements of $\mathrm{C}_{++}$programming that are needed above and beyond a knowledge of $\mathrm{C}$ in order to use TBB well.

The remainder of this Preface contains five parts:

(1) An explanation of the motivations behind TBB (begins on page xxi)

(2) An introduction to parallel programming (begins on page xxvi)

(3) An introduction to locality and caches – we call “Locality and the Revenge of the Caches” – the one aspect of hardware that we feel essential to comprehend for top performance with parallel programming (begins on page lii)

(4) An introduction to vectorization (SIMD) (begins on page $l x$ )

(5) An introduction to the features of $\mathrm{C}++$ (beyond those in the $\mathrm{C}$ language) which are supported or used by TBB (begins on page lxii)

计算机代写|C++作业代写C++代考|Motivations Behind Threading Building Blocks

TBB first appeared in 2006. It was the product of experts in parallel programming at Intel, many of whom had decades of experience in parallel programming models, including OpenMP. Many members of the TBB team had previously spent years helping drive OpenMP to the great success it enjoys by developing and supporting OpenMP implementations. Appendix A is dedicated to a deeper dive on the history of TBB and the core concepts that go into it, including the breakthrough concept of task-stealing schedulers.

Born in the early days of multicore processors, TBB quickly emerged as the most popular parallel programming model for $\mathrm{C}++$ programmers. TBB has evolved over its first decade to incorporate a rich set of additions that have made it an obvious choice for parallel programming for novices and experts alike. As an open source project, TBB has enjoyed feedback and contributions from around the world.

TBB promotes a revolutionary idea: parallel programming should enable the programmer to expose opportunities for parallelism without hesitation, and the underlying programming model implementation (TBB) should map that to the hardware at runtime.

Understanding the importance and value of TBB rests on understanding three things: (1) program using tasks, not threads; (2) parallel programming models do not need to be messy; and (3) how to obtain scaling, performance, and performance portability with portable low overhead parallel programming models such as TBB. We will dive into each of these three next because they are so important! It is safe to say that the importance of these were underestimated for a long time before emerging as cornerstones in our understanding of how to achieve effective, and structured, parallel programming.

计算机代写|C++作业代写C++代考|Program Using Tasks Not Threads

Parallel programming should always be done in terms of tasks, not threads. We cite an authoritative and in-depth examination of this by Edward Lee at the end of this Preface. In 2006, he observed that “For concurrent programming to become mainstream, we must discard threads as a programming model.”

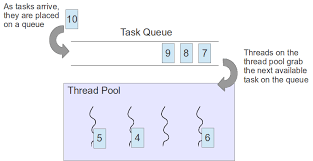

Parallel programming expressed with threads is an exercise in mapping an application to the specific number of parallel execution threads on the machine we happen to run upon. Parallel programming expressed with tasks is an exercise in exposing opportunities for parallelism and allowing a runtime (e.g., TBB runtime) to map tasks onto the hardware at runtime without complicating the logic of our application.

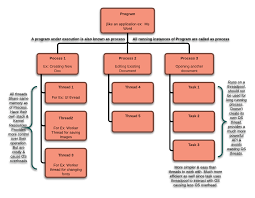

Threads represent an execution stream that executes on a hardware thread for a time slice and may be assigned other hardware threads for a future time slice. Parallel programming in terms of threads fail because they are too often used as a one-to-one correspondence between threads (as in execution threads) and threads (as in hardware threads, e.g., processor cores). A hardware thread is a physical capability, and the number of hardware threads available varies from machine to machine, as do some subtle characteristics of various thread implementations.

In contrast, tasks represent opportunities for parallelism. The ability to subdivide tasks can be exploited, as needed, to fill available threads when needed.

With these definitions in mind, a program written in terms of threads would have to map each algorithm onto specific systems of hardware and software. This is not only a distraction, it causes a whole host of issues that make parallel programming more difficult, less effective, and far less portable.

Whereas, a program written in terms of tasks allows a runtime mechanism, for example, the TBB runtime, to map tasks onto the hardware which is actually present at runtime. This removes the distraction of worrying about the number of actual hardware threads available on a system. More importantly, in practice this is the only method which opens up nested parallelism effectively. This is such an important capability, that we will revisit and emphasize the importance of nested parallelism in several chapters.

C++/C代写

计算机代写|C++作业代写C++代考|Think Parallel

对于那些刚接触并行编程的人,我们提供这个前言是为了提供一个基础,使本书的其余部分更加有用、平易近人、自成一体。我们试图假设只有一个基本的理解C编程和介绍的关键要素C++那吨乙乙依靠和支持。我们从实用的角度介绍并行编程,强调什么使并行程序最有效。对于有经验的并行程序员,我们希望这本前言能够快速阅读,提供对关键词汇和思想的有用复习,使我们能够充分利用并行计算机硬件。

读完这篇前言,你应该能够从分解、缩放、正确性、抽象和模式等方面解释“并行思考”的含义。您将意识到局部性是所有并行编程的关键问题。您将了解支持任务编程而不是线程编程的理念——TBB 支持的并行编程的革命性发展。您还将了解C++超越知识所需的编程C为了用好TBB。

本前言的其余部分包含五个部分:

(1) 解释 TBB 背后的动机(从第 xxi 页开始)

(2) 并行编程介绍(从第 xxvi 页开始)

(3) 对局部性和缓存的介绍——我们称之为“局部性和缓存的复仇”——我们认为硬件的一个方面是我们认为对于实现并行编程的最佳性能至关重要的一个方面(从第 lii 页开始)

(4) 矢量化 (SIMD) 简介(从第 1 页开始)lX)

(5) 特点介绍C++(除了那些在CTBB 支持或使用的语言)(从第 lxii 页开始)

计算机代写|C++作业代写C++代考|Motivations Behind Threading Building Blocks

TBB 于 2006 年首次出现。它是英特尔并行编程专家的产物,其中许多人在包括 OpenMP 在内的并行编程模型方面拥有数十年的经验。TBB 团队的许多成员此前曾花费数年时间帮助推动 OpenMP 取得巨大成功,通过开发和支持 OpenMP 实施。附录 A 致力于深入探讨 TBB 的历史和其中的核心概念,包括任务窃取调度程序的突破性概念。

TBB 诞生于多核处理器的早期,迅速成为最流行的并行编程模型C++程序员。TBB 在其第一个十年中不断发展,包含了一组丰富的附加功能,使其成为新手和专家等并行编程的明显选择。作为一个开源项目,TBB 得到了来自世界各地的反馈和贡献。

TBB 提出了一个革命性的想法:并行编程应该使程序员能够毫不犹豫地展示并行性的机会,并且底层编程模型实现 (TBB) 应该在运行时将其映射到硬件。

理解 TBB 的重要性和价值在于理解三件事:(1)程序使用任务,而不是线程;(2) 并行编程模型不需要凌乱;(3) 如何通过可移植的低开销并行编程模型(例如 TBB)获得可扩展性、性能和性能可移植性。接下来我们将深入研究这三个,因为它们非常重要!可以肯定地说,在成为我们理解如何实现有效且结构化的并行编程的基石之前,它们的重要性在很长一段时间内都被低估了。

计算机代写|C++作业代写C++代考|Program Using Tasks Not Threads

并行编程应该始终根据任务而不是线程来完成。我们在前言的末尾引用了 Edward Lee 对此进行的权威而深入的研究。2006 年,他观察到“要使并发编程成为主流,我们必须放弃线程作为编程模型。”

用线程表示的并行编程是将应用程序映射到我们碰巧运行的机器上特定数量的并行执行线程的练习。用任务表达的并行编程是一种展示并行性机会的练习,并允许运行时(例如,TBB 运行时)在运行时将任务映射到硬件上,而不会使我们的应用程序的逻辑复杂化。

线程表示在硬件线程上执行一个时间片的执行流,并且可以为未来的时间片分配其他硬件线程。线程方面的并行编程失败了,因为它们经常被用作线程(如在执行线程中)和线程(如在硬件线程中,例如处理器内核)之间的一一对应。硬件线程是一种物理能力,可用的硬件线程数量因机器而异,各种线程实现的一些细微特征也是如此。

相反,任务代表了并行的机会。可以根据需要利用细分任务的能力来在需要时填充可用线程。

考虑到这些定义,根据线程编写的程序必须将每个算法映射到特定的硬件和软件系统上。这不仅会让人分心,还会引发一系列问题,使并行编程更加困难、效率低下,而且可移植性也大大降低。

然而,根据任务编写的程序允许运行时机制,例如 TBB 运行时,将任务映射到运行时实际存在的硬件上。这消除了担心系统上可用的实际硬件线程数量的干扰。更重要的是,在实践中,这是唯一有效打开嵌套并行的方法。这是一项非常重要的能力,我们将在几章中重新讨论并强调嵌套并行的重要性。

统计代写请认准statistics-lab™. statistics-lab™为您的留学生涯保驾护航。

金融工程代写

金融工程是使用数学技术来解决金融问题。金融工程使用计算机科学、统计学、经济学和应用数学领域的工具和知识来解决当前的金融问题,以及设计新的和创新的金融产品。

非参数统计代写

非参数统计指的是一种统计方法,其中不假设数据来自于由少数参数决定的规定模型;这种模型的例子包括正态分布模型和线性回归模型。

广义线性模型代考

广义线性模型(GLM)归属统计学领域,是一种应用灵活的线性回归模型。该模型允许因变量的偏差分布有除了正态分布之外的其它分布。

术语 广义线性模型(GLM)通常是指给定连续和/或分类预测因素的连续响应变量的常规线性回归模型。它包括多元线性回归,以及方差分析和方差分析(仅含固定效应)。

有限元方法代写

有限元方法(FEM)是一种流行的方法,用于数值解决工程和数学建模中出现的微分方程。典型的问题领域包括结构分析、传热、流体流动、质量运输和电磁势等传统领域。

有限元是一种通用的数值方法,用于解决两个或三个空间变量的偏微分方程(即一些边界值问题)。为了解决一个问题,有限元将一个大系统细分为更小、更简单的部分,称为有限元。这是通过在空间维度上的特定空间离散化来实现的,它是通过构建对象的网格来实现的:用于求解的数值域,它有有限数量的点。边界值问题的有限元方法表述最终导致一个代数方程组。该方法在域上对未知函数进行逼近。[1] 然后将模拟这些有限元的简单方程组合成一个更大的方程系统,以模拟整个问题。然后,有限元通过变化微积分使相关的误差函数最小化来逼近一个解决方案。

tatistics-lab作为专业的留学生服务机构,多年来已为美国、英国、加拿大、澳洲等留学热门地的学生提供专业的学术服务,包括但不限于Essay代写,Assignment代写,Dissertation代写,Report代写,小组作业代写,Proposal代写,Paper代写,Presentation代写,计算机作业代写,论文修改和润色,网课代做,exam代考等等。写作范围涵盖高中,本科,研究生等海外留学全阶段,辐射金融,经济学,会计学,审计学,管理学等全球99%专业科目。写作团队既有专业英语母语作者,也有海外名校硕博留学生,每位写作老师都拥有过硬的语言能力,专业的学科背景和学术写作经验。我们承诺100%原创,100%专业,100%准时,100%满意。

随机分析代写

随机微积分是数学的一个分支,对随机过程进行操作。它允许为随机过程的积分定义一个关于随机过程的一致的积分理论。这个领域是由日本数学家伊藤清在第二次世界大战期间创建并开始的。

时间序列分析代写

随机过程,是依赖于参数的一组随机变量的全体,参数通常是时间。 随机变量是随机现象的数量表现,其时间序列是一组按照时间发生先后顺序进行排列的数据点序列。通常一组时间序列的时间间隔为一恒定值(如1秒,5分钟,12小时,7天,1年),因此时间序列可以作为离散时间数据进行分析处理。研究时间序列数据的意义在于现实中,往往需要研究某个事物其随时间发展变化的规律。这就需要通过研究该事物过去发展的历史记录,以得到其自身发展的规律。

回归分析代写

多元回归分析渐进(Multiple Regression Analysis Asymptotics)属于计量经济学领域,主要是一种数学上的统计分析方法,可以分析复杂情况下各影响因素的数学关系,在自然科学、社会和经济学等多个领域内应用广泛。

MATLAB代写

MATLAB 是一种用于技术计算的高性能语言。它将计算、可视化和编程集成在一个易于使用的环境中,其中问题和解决方案以熟悉的数学符号表示。典型用途包括:数学和计算算法开发建模、仿真和原型制作数据分析、探索和可视化科学和工程图形应用程序开发,包括图形用户界面构建MATLAB 是一个交互式系统,其基本数据元素是一个不需要维度的数组。这使您可以解决许多技术计算问题,尤其是那些具有矩阵和向量公式的问题,而只需用 C 或 Fortran 等标量非交互式语言编写程序所需的时间的一小部分。MATLAB 名称代表矩阵实验室。MATLAB 最初的编写目的是提供对由 LINPACK 和 EISPACK 项目开发的矩阵软件的轻松访问,这两个项目共同代表了矩阵计算软件的最新技术。MATLAB 经过多年的发展,得到了许多用户的投入。在大学环境中,它是数学、工程和科学入门和高级课程的标准教学工具。在工业领域,MATLAB 是高效研究、开发和分析的首选工具。MATLAB 具有一系列称为工具箱的特定于应用程序的解决方案。对于大多数 MATLAB 用户来说非常重要,工具箱允许您学习和应用专业技术。工具箱是 MATLAB 函数(M 文件)的综合集合,可扩展 MATLAB 环境以解决特定类别的问题。可用工具箱的领域包括信号处理、控制系统、神经网络、模糊逻辑、小波、仿真等。