Math Writing Services | AM221 Convex optimization

Statistics-lab™ offers writing, exam, and tutoring services for the harvard.edu AM221 Convex optimization course!

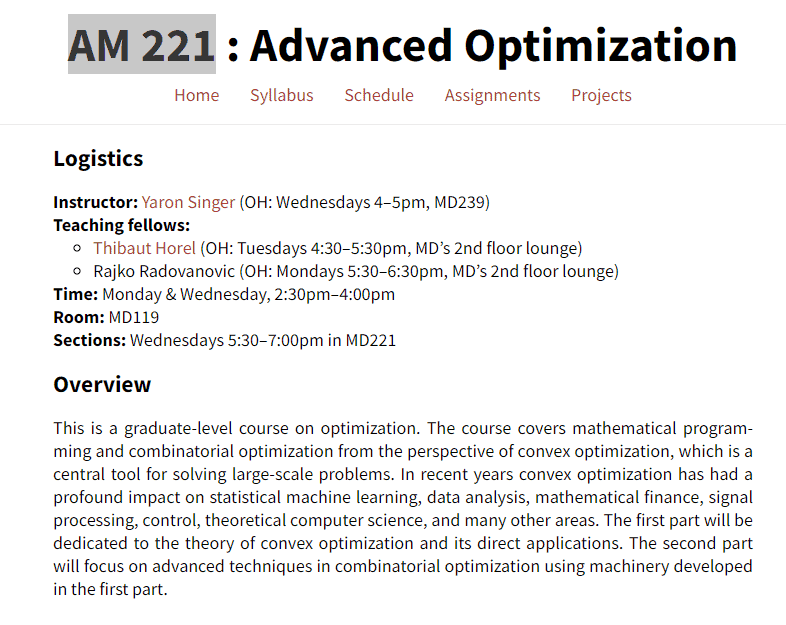

AM221 Convex optimization: Course Overview

This is a graduate-level course on optimization. The course covers mathematical programming and combinatorial optimization from the perspective of convex optimization, which is a central tool for solving large-scale problems. In recent years convex optimization has had a profound impact on statistical machine learning, data analysis, mathematical finance, signal processing, control, theoretical computer science, and many other areas. The first part will be dedicated to the theory of convex optimization and its direct applications. The second part will focus on advanced techniques in combinatorial optimization using machinery developed in the first part.

PREREQUISITES

Instructor: Yaron Singer (OH: Wednesdays 4-5pm, MD239)

Teaching fellows:

- Thibaut Horel (OH: Tuesdays 4:30-5:30pm, MD’s 2nd floor lounge)

- Rajko Radovanovic (OH: Mondays 5:30-6:30pm, MD’s 2nd floor lounge)

Time: Monday & Wednesday, 2:30pm-4:00pm

Room: MD119

Sections: Wednesdays 5:30-7:00pm in MD221

AM221 Convex optimization HELP(EXAM HELP, ONLINE TUTOR)

2.7 Voronoi description of halfspace. Let $a$ and $b$ be distinct points in $\mathbf{R}^n$. Show that the set of all points that are closer (in Euclidean norm) to $a$ than $b$, i.e., $\left\{x \mid \|x-a\|_2 \leq \|x-b\|_2\right\}$, is a halfspace. Describe it explicitly as an inequality of the form $c^T x \leq d$. Draw a picture.

Solution. Since a norm is always nonnegative, we have $\|x-a\|_2 \leq \|x-b\|_2$ if and only if $\|x-a\|_2^2 \leq \|x-b\|_2^2$, so

$$
\begin{aligned}
\|x-a\|_2^2 \leq \|x-b\|_2^2 & \Longleftrightarrow (x-a)^T(x-a) \leq (x-b)^T(x-b) \\
& \Longleftrightarrow x^T x-2 a^T x+a^T a \leq x^T x-2 b^T x+b^T b \\
& \Longleftrightarrow 2(b-a)^T x \leq b^T b-a^T a .
\end{aligned}
$$

Therefore, the set is indeed a halfspace. We can take $c=2(b-a)$ and $d=b^T b-a^T a$. This makes good geometric sense: the points that are equidistant to $a$ and $b$ are given by a hyperplane whose normal is in the direction $b-a$.
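The equivalence derived above can be spot-checked numerically. The following is a minimal sketch in plain Python, not part of the original solution: the helper names (`halfspace_from_points`, `closer_to_a`, `count_disagreements`) and the sampling ranges are assumptions chosen for illustration. It compares the direct distance test with the test $c^T x \leq d$ on random points, skipping points that lie within a small tolerance of the boundary, where floating-point rounding could flip either comparison.

```python
import random


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(ui * vi for ui, vi in zip(u, v))


def halfspace_from_points(a, b):
    """Return (c, d) with c = 2(b - a) and d = b^T b - a^T a."""
    c = [2.0 * (bi - ai) for ai, bi in zip(a, b)]
    d = dot(b, b) - dot(a, a)
    return c, d


def closer_to_a(x, a, b):
    """Direct test: squared distance to a is at most squared distance to b."""
    dist_a = sum((xi - ai) ** 2 for xi, ai in zip(x, a))
    dist_b = sum((xi - bi) ** 2 for xi, bi in zip(x, b))
    return dist_a <= dist_b


def count_disagreements(n=5, trials=1000, seed=0, tol=1e-9):
    """Count random points where the two membership tests disagree."""
    rng = random.Random(seed)
    a = [rng.uniform(-1, 1) for _ in range(n)]
    b = [rng.uniform(-1, 1) for _ in range(n)]
    c, d = halfspace_from_points(a, b)
    mismatches = 0
    for _ in range(trials):
        x = [rng.uniform(-3, 3) for _ in range(n)]
        margin = dot(c, x) - d
        if abs(margin) < tol:
            continue  # too close to the boundary to compare reliably
        if closer_to_a(x, a, b) != (margin <= 0):
            mismatches += 1
    return mismatches
```

Calling `count_disagreements()` should return zero for any seed, since the two tests are algebraically identical away from the boundary.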

To illustrate this, we can consider the case of $n=2$. Let $a=(a_1,a_2)$ and $b=(b_1,b_2)$ be two distinct points in $\mathbf{R}^2$. Then the set of all points that are closer to $a$ than $b$ is given by

$$
\left\{(x_1,x_2)\in\mathbf{R}^2 \mid (x_1-a_1)^2+(x_2-a_2)^2\leq(x_1-b_1)^2+(x_2-b_2)^2\right\}.
$$

Expanding the inequality, we get

$$
2(b_1-a_1)x_1+2(b_2-a_2)x_2\leq b_1^2+b_2^2-a_1^2-a_2^2.
$$

Dividing both sides by $2\sqrt{(b_1-a_1)^2+(b_2-a_2)^2}$, we obtain

$$
\frac{b_1-a_1}{\sqrt{(b_1-a_1)^2+(b_2-a_2)^2}}x_1+\frac{b_2-a_2}{\sqrt{(b_1-a_1)^2+(b_2-a_2)^2}}x_2\leq\frac{b_1^2+b_2^2-a_1^2-a_2^2}{2\sqrt{(b_1-a_1)^2+(b_2-a_2)^2}},
$$

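which is an inequality describing a halfplane whose boundary is the perpendicular bisector of the segment connecting $a$ and $b$.

In the case where $a=(0,0)$ and $b=(1,2)$, the halfplane is $2x_1+4x_2 \leq 5$. Its boundary has normal direction $(1,2)$ and passes through the midpoint $(1/2, 1)$ of the segment.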
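The case $a=(0,0)$, $b=(1,2)$ can also be worked through numerically. The sketch below is an illustration under assumptions (the function name `normalized_halfplane` is hypothetical): it computes the normalized form $u^T x \leq t$ with $\|u\|_2 = 1$ and checks that the midpoint of the segment lies on the boundary line, as the perpendicular-bisector description predicts.

```python
import math


def normalized_halfplane(a, b):
    """Return (u, t) so that the halfplane is {x : u^T x <= t} with ||u||_2 = 1."""
    diff = (b[0] - a[0], b[1] - a[1])
    length = math.hypot(diff[0], diff[1])
    if length == 0.0:
        raise ValueError("a and b must be distinct")
    u = (diff[0] / length, diff[1] / length)
    t = (b[0] ** 2 + b[1] ** 2 - a[0] ** 2 - a[1] ** 2) / (2.0 * length)
    return u, t


a, b = (0.0, 0.0), (1.0, 2.0)
u, t = normalized_halfplane(a, b)

# The midpoint of the segment from a to b should satisfy u^T x = t.
midpoint = ((a[0] + b[0]) / 2.0, (a[1] + b[1]) / 2.0)
on_boundary = math.isclose(u[0] * midpoint[0] + u[1] * midpoint[1], t)
```

Because $a$ is the origin here, $t$ equals the distance from $a$ to the boundary, which is half of $\|b-a\|_2 = \sqrt{5}$.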

Which of the following sets $S$ are polyhedra? If possible, express $S$ in the form $S=\{x \mid A x \preceq b, F x=g\}$.

(a) $S=\left\{y_1 a_1+y_2 a_2 \mid -1 \leq y_1 \leq 1, -1 \leq y_2 \leq 1\right\}$, where $a_1, a_2 \in \mathbf{R}^n$.

(b) $S=\left\{x \in \mathbf{R}^n \mid x \succeq 0, \mathbf{1}^T x=1, \sum_{i=1}^n x_i a_i=b_1, \sum_{i=1}^n x_i a_i^2=b_2\right\}$, where $a_1, \ldots, a_n \in \mathbf{R}$ and $b_1, b_2 \in \mathbf{R}$.

(c) $S=\left\{x \in \mathbf{R}^n \mid x \succeq 0, x^T y \leq 1\right.$ for all $y$ with $\left.\|y\|_2=1\right\}$.

(d) $S=\left\{x \in \mathbf{R}^n \mid x \succeq 0, x^T y \leq 1\right.$ for all $y$ with $\left.\sum_{i=1}^n\left|y_i\right|=1\right\}$.

Solution.

(a) $S$ is a polyhedron. It is the parallelogram with corners $a_1+a_2, a_1-a_2,-a_1+a_2$, $-a_1-a_2$, as shown below for an example in $\mathbf{R}^2$.

For simplicity we assume that $a_1$ and $a_2$ are linearly independent. We can express $S$ as the intersection of three sets:

- $S_1$ : the plane defined by $a_1$ and $a_2$

- $S_2=\left\{z+y_1 a_1+y_2 a_2 \mid a_1^T z=a_2^T z=0, -1 \leq y_1 \leq 1\right\}$. This is a slab parallel to $a_2$ and orthogonal to $S_1$

- $S_3=\left\{z+y_1 a_1+y_2 a_2 \mid a_1^T z=a_2^T z=0, -1 \leq y_2 \leq 1\right\}$. This is a slab parallel to $a_1$ and orthogonal to $S_1$

Each of these sets can be described with linear inequalities.

- $S_1$ can be described as

$$
v_k^T x=0, \quad k=1, \ldots, n-2,
$$

where $v_k$ are $n-2$ independent vectors that are orthogonal to $a_1$ and $a_2$ (which form a basis for the nullspace of the matrix $\left[\begin{array}{ll}a_1 & a_2\end{array}\right]^T$).

- Let $c_1$ be a vector in the plane defined by $a_1$ and $a_2$, and orthogonal to $a_2$. For example, we can take

$$
c_1=a_1-\frac{a_1^T a_2}{\left\|a_2\right\|_2^2} a_2 .
$$

Then $x \in S_2$ if and only if

$$
-\left|c_1^T a_1\right| \leq c_1^T x \leq\left|c_1^T a_1\right| .
$$

- Similarly, let $c_2$ be a vector in the plane defined by $a_1$ and $a_2$, and orthogonal to $a_1$, e.g.,

$$
c_2=a_2-\frac{a_2^T a_1}{\left\|a_1\right\|_2^2} a_1 .
$$

Then $x \in S_3$ if and only if

$$
-\left|c_2^T a_2\right| \leq c_2^T x \leq\left|c_2^T a_2\right| .
$$

Putting it all together, we can describe $S$ as the solution set of $2 n$ linear inequalities

$$
\begin{aligned}
v_k^T x & \leq 0, \quad k=1, \ldots, n-2 \\
-v_k^T x & \leq 0, \quad k=1, \ldots, n-2 \\
c_1^T x & \leq\left|c_1^T a_1\right| \\
-c_1^T x & \leq\left|c_1^T a_1\right| \\
c_2^T x & \leq\left|c_2^T a_2\right| \\
-c_2^T x & \leq\left|c_2^T a_2\right| .
\end{aligned}
$$
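To make the construction for part (a) concrete, here is a minimal sketch in plain Python for the special case $n=3$, where the single vector $v_1$ can be taken as the cross product $a_1 \times a_2$. The function names and the example vectors are assumptions made for illustration; the code only instantiates the $2n = 6$ inequalities above and tests a few points against them.

```python
def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(ui * vi for ui, vi in zip(u, v))


def cross(u, v):
    """Cross product in R^3; orthogonal to both u and v."""
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]


def parallelogram_inequalities(a1, a2):
    """Rows (g, h), each meaning g^T x <= h, describing
    S = {y1*a1 + y2*a2 : -1 <= y1, y2 <= 1} in R^3.
    Assumes a1 and a2 are linearly independent."""
    v = cross(a1, a2)  # plays the role of v_1 (n - 2 = 1 vector)
    # c1: in span{a1, a2} and orthogonal to a2
    c1 = [p - dot(a1, a2) / dot(a2, a2) * q for p, q in zip(a1, a2)]
    # c2: in span{a1, a2} and orthogonal to a1
    c2 = [q - dot(a2, a1) / dot(a1, a1) * p for p, q in zip(a1, a2)]
    r1 = abs(dot(c1, a1))
    r2 = abs(dot(c2, a2))

    def neg(w):
        return [-wi for wi in w]

    return [(v, 0.0), (neg(v), 0.0),
            (c1, r1), (neg(c1), r1),
            (c2, r2), (neg(c2), r2)]


def in_polyhedron(x, rows, tol=1e-9):
    """True if x satisfies every inequality, up to a small tolerance."""
    return all(dot(g, x) <= h + tol for g, h in rows)


def combo(y1, y2, a1, a2):
    """The point y1*a1 + y2*a2."""
    return [y1 * p + y2 * q for p, q in zip(a1, a2)]


a1, a2 = [1.0, 0.0, 0.0], [1.0, 1.0, 0.0]
rows = parallelogram_inequalities(a1, a2)
corner_inside = in_polyhedron(combo(1, 1, a1, a2), rows)      # a corner of S
outside_slab = in_polyhedron(combo(1.5, 0, a1, a2), rows)     # violates |y1| <= 1
off_plane = in_polyhedron([0.0, 0.0, 0.1], rows)              # not in span{a1, a2}
```

For these example vectors the corner $a_1+a_2$ satisfies all six inequalities, while the point with $y_1 = 1.5$ and the point lifted off the plane each violate one of them.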

