Math Assignment Help | MATH4320 Stochastic Processes

Statistics-lab™ offers assignment writing, exam help, and tutoring services for the math.osu.edu MATH4320 Stochastic Processes course.

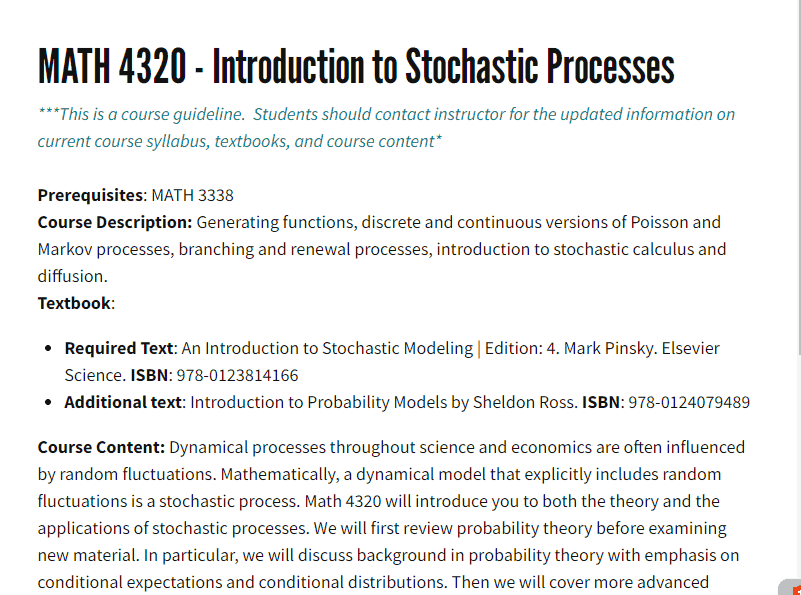

MATH4320 Stochastic Processes Course Overview

This course covers a range of topics in stochastic processes and their applications in mathematics and statistics. Here is a brief overview of what you can expect to learn:

- Generating functions: You will learn how to use generating functions to solve problems in combinatorics and probability theory. This will include techniques for finding probabilities of events, calculating expected values, and determining the distribution of a random variable.

- Poisson processes: You will study discrete and continuous versions of Poisson processes, which are used to model the occurrence of rare events over time. This will include understanding the Poisson distribution, Poisson point processes, and Poisson random walks.

- Markov processes: You will learn about Markov processes, which are used to model systems that evolve over time with some degree of randomness. This will include understanding Markov chains, Markov processes in continuous time, and the concepts of stationary and limiting distributions.

- Branching processes: You will study branching processes, which are used to model the growth and development of populations over time. This will include understanding the branching process with immigration and the Galton-Watson process.

- Renewal processes: You will learn about renewal processes, which model events that recur at random times, with independent and identically distributed gaps between consecutive events. This will include understanding the renewal theorem and its applications.

- Stochastic calculus and diffusion: You will be introduced to stochastic calculus and diffusion, which are used to model systems that exhibit random fluctuations over time. This will include understanding the basic concepts of stochastic differential equations and the diffusion process.
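To make the idea of a stationary (limiting) distribution from the Markov-process item concrete, here is a minimal sketch in plain Python. The two-state transition matrix `P` is a hypothetical example chosen for illustration, not taken from the course; repeatedly applying one step of the chain to an initial distribution approximates the limiting distribution.

```python
def step(dist, P):
    """One step of the chain: multiply the row vector dist by the matrix P."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]

# Hypothetical transition matrix (an assumption); each row sums to 1.
P = [[0.9, 0.1],
     [0.5, 0.5]]

dist = [1.0, 0.0]  # start in state 0 with probability 1
for _ in range(200):
    dist = step(dist, P)

print([round(x, 4) for x in dist])
```

For this particular matrix the iteration settles near $(5/6, 1/6)$, which solves $\pi P = \pi$; a different matrix would give a different limit, and some chains have no limit at all.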

PREREQUISITES

Course Content: Dynamical processes throughout science and economics are often influenced by random fluctuations. Mathematically, a dynamical model that explicitly includes random fluctuations is a stochastic process. Math 4320 will introduce you to both the theory and the applications of stochastic processes. We will first review probability theory before examining new material. In particular, we will discuss background in probability theory with emphasis on conditional expectations and conditional distributions. Then we will cover more advanced topics such as discrete-time Markov chains, Poisson processes, and continuous-time Markov chains.

Grading & Make-up Policy/Assignment & Exam Details: Please consult your instructor’s syllabus regarding any and all grading/assignment guidelines.

MATH4320 Stochastic Processes HELP (EXAM HELP, ONLINE TUTOR)

Exercise 3. A box has slips of paper numbered from 1 to 10. A paper is drawn at random, and $X_1$ denotes the number drawn. This paper is replaced, and the papers are remixed. A second paper with number $X_2$ is drawn. Find the joint distribution of $\left(X_1, X_2\right)$. Are $X_1$ and $X_2$ independent?

Answer the same questions if the first number is not replaced before the second is drawn.

Since each paper is drawn at random, we can assume that the probability of drawing each paper is the same and equal to $1/10$. Let’s first consider the case where the first paper is replaced before drawing the second one.

In this case, the distribution of $X_1$ is given by:

$$P(X_1 = k) = \frac{1}{10},\quad k=1,2,\ldots,10$$

Since the first paper is replaced, the probability of drawing the second paper with number $k$ is also $1/10$, independent of the value of $X_1$. Therefore, the joint distribution of $\left(X_1, X_2\right)$ is given by:

$$P(X_1=k,X_2=j) = \frac{1}{10^2},\quad k,j=1,2,\ldots,10$$

We can also calculate the marginal distributions of $X_1$ and $X_2$ by summing the joint distribution over the appropriate indices:

$$P(X_1=k) = \sum_{j=1}^{10} P(X_1=k,X_2=j) = \frac{1}{10},\quad k=1,2,\ldots,10$$

$$P(X_2=j) = \sum_{k=1}^{10} P(X_1=k,X_2=j) = \frac{1}{10},\quad j=1,2,\ldots,10$$

Since $P(X_1=k,X_2=j) = \frac{1}{100} = P(X_1=k)\,P(X_2=j)$ for every pair $(k,j)$, the variables $X_1$ and $X_2$ are independent when the first paper is replaced.
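The with-replacement case can be checked by brute-force enumeration. The following is a minimal sketch using exact fractions; the variable names are illustrative choices, not part of the exercise. It builds the joint distribution, sums it to get the marginals, and tests whether the joint factors into their product.

```python
from fractions import Fraction
from itertools import product

values = range(1, 11)  # slips numbered 1..10

# With replacement, all 100 ordered pairs (k, j) are equally likely.
joint = {(k, j): Fraction(1, 100) for k, j in product(values, repeat=2)}

# Marginals: sum the joint pmf over the other index.
marginal_x1 = {k: sum(joint[(k, j)] for j in values) for k in values}
marginal_x2 = {j: sum(joint[(k, j)] for k in values) for j in values}

# Independence holds exactly when the joint pmf factors for every pair.
independent = all(
    joint[(k, j)] == marginal_x1[k] * marginal_x2[j]
    for k, j in product(values, repeat=2)
)

print(marginal_x1[3], marginal_x2[7], independent)  # prints: 1/10 1/10 True
```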

Now, let’s consider the case where the first paper is not replaced before drawing the second one. In this case, the distribution of $X_1$ is the same as before, but the probability of drawing a second paper with number $j$ depends on the value of $X_1$. Specifically, if $X_1=k$, then the probability that the second paper has number $j$ is $1/9$ for each $j\neq k$, since only $9$ papers are left in the box, and $0$ for $j=k$, since paper $k$ is no longer in the box.

Therefore, the joint distribution of $\left(X_1, X_2\right)$ is given by:

$$P(X_1=k,X_2=j) = \begin{cases} \frac{1}{10}\cdot\frac{1}{9} = \frac{1}{90}, & k\neq j\\ 0, & k=j \end{cases}$$

The diagonal entries are zero because the same paper cannot be drawn twice.

We can also calculate the marginal distributions of $X_1$ and $X_2$ as before:

$$P(X_1=k) = \sum_{j\neq k} P(X_1=k,X_2=j) + P(X_1=k,X_2=k) = 9\cdot\frac{1}{90} + 0 = \frac{1}{10},\quad k=1,2,\ldots,10$$

$$P(X_2=j) = \sum_{k\neq j} P(X_1=k,X_2=j) + P(X_1=j,X_2=j) = 9\cdot\frac{1}{90} + 0 = \frac{1}{10},\quad j=1,2,\ldots,10$$

Note that in this case, $X_1$ and $X_2$ are not independent, since the conditional distribution of $X_2$ depends on the value of $X_1$. Specifically, given $X_1=k$, the probability that $X_2=j$ is $0$ if $j=k$ and $1/9$ if $j\neq k$. Therefore, the joint distribution of $\left(X_1, X_2\right)$ cannot be factored into the product of the marginal distributions of $X_1$ and $X_2$: for example, $P(X_1=1,X_2=1)=0$, whereas $P(X_1=1)\,P(X_2=1)=\frac{1}{100}$.
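The without-replacement case can be enumerated in the same way. This is a minimal sketch with exact fractions and illustrative variable names: only ordered pairs of distinct numbers can occur, and each of the $10 \cdot 9 = 90$ such pairs is equally likely.

```python
from fractions import Fraction
from itertools import permutations

values = range(1, 11)  # slips numbered 1..10

# Ordered pairs of distinct numbers: the only outcomes that can occur.
pairs = list(permutations(values, 2))

# Start from probability 0 everywhere, then fill in the 90 possible pairs.
joint = {(k, j): Fraction(0) for k in values for j in values}
for pair in pairs:
    joint[pair] = Fraction(1, len(pairs))

marginal_x1 = {k: sum(joint[(k, j)] for j in values) for k in values}
marginal_x2 = {j: sum(joint[(k, j)] for k in values) for j in values}

# Pairs where the joint pmf differs from the product of the marginals.
violations = [
    (k, j) for k in values for j in values
    if joint[(k, j)] != marginal_x1[k] * marginal_x2[j]
]

print(joint[(1, 2)], joint[(1, 1)], marginal_x2[5], len(violations))
# prints: 1/90 0 1/10 100
```

Every one of the 100 pairs violates the product rule here, since the joint probability is either $1/90$ or $0$ while the product of the marginals is always $1/100$. A single violating pair would already be enough to rule out independence.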

Exercise 4. Let $(\Omega, \mathcal{F}, \mathbb{P})$ be a probability space, and $X: \Omega \rightarrow \mathbb{N} \cup \{\infty\}$.

a. Show that $\mathbb{P}(X=\infty)>0$ implies that $\mathbb{E}[X]=\infty$.

b. Show by an example that the converse of this statement is false, i.e. it is possible for $\mathbb{P}(X=\infty)=0$ and yet $\mathbb{E}[X]=\infty$.

a. To show that $\mathbb{P}(X=\infty)>0$ implies that $\mathbb{E}[X]=\infty$, recall that for a random variable with values in $\mathbb{N} \cup \{\infty\}$ the expected value is

$$\mathbb{E}[X] = \sum_{n=0}^\infty n\, \mathbb{P}(X=n) + \infty\cdot\mathbb{P}(X=\infty),$$

with the convention $\infty\cdot 0=0$. If $\mathbb{P}(X=\infty)>0$, the last term is already infinite. To make this precise without doing arithmetic on $\infty$, fix any $N \in \mathbb{N}$. Since $X\geq 0$ everywhere and $X\geq N$ on the event $\{X\geq N\}$, we have $X \geq N\,\mathbf{1}_{\{X\geq N\}}$, and therefore:

$$\mathbb{E}[X] \geq N\, \mathbb{P}(X\geq N) \geq N\, \mathbb{P}(X=\infty)$$

where the second inequality uses the inclusion $\{X=\infty\}\subseteq\{X\geq N\}$.

This holds for any $N \in \mathbb{N}$. Since $\mathbb{P}(X=\infty)$ is a fixed positive number that does not depend on $N$, the right-hand side grows without bound as $N\to\infty$, and so $\mathbb{E}[X]=\infty$.
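The bound $\mathbb{E}[X]\geq N\,\mathbb{P}(X=\infty)$ can be illustrated numerically. The sketch below assumes a hypothetical distribution (not part of the exercise) in which $X=0$ with probability $99/100$ and $X=\infty$ with probability $1/100$, and computes the truncated mean $\mathbb{E}[\min(X,N)]$, which is finite and never exceeds $\mathbb{E}[X]$.

```python
import math
from fractions import Fraction

# Hypothetical distribution (an assumption for illustration):
# X = 0 with probability 99/100 and X = infinity with probability 1/100.
pmf = {0: Fraction(99, 100), math.inf: Fraction(1, 100)}

def truncated_mean(cap):
    """Return E[min(X, cap)], a finite lower bound on E[X]."""
    return sum(min(x, cap) * p for x, p in pmf.items())

for cap in (10, 1_000, 100_000):
    print(cap, truncated_mean(cap))
```

In this example the truncated mean equals `cap / 100`, so it grows linearly with the cap. No finite value can serve as $\mathbb{E}[X]$, however small the probability of $\{X=\infty\}$ is.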

b. To show that the converse of the statement is false, we can consider a random variable $X$ with the distribution:

$$\mathbb{P}(X=n) = \frac{1}{n(n+1)},\quad n=1,2,\ldots$$

Because $\frac{1}{n(n+1)} = \frac{1}{n}-\frac{1}{n+1}$, the sum of these probabilities telescopes:

$$\sum_{n=1}^\infty \frac{1}{n(n+1)} = \sum_{n=1}^\infty \left(\frac{1}{n}-\frac{1}{n+1}\right) = 1.$$

Hence $X$ is finite with probability $1$, that is, $\mathbb{P}(X=\infty)=0$. Nevertheless, the expected value diverges, because it is a tail of the harmonic series:

$$\mathbb{E}[X] = \sum_{n=1}^\infty n\cdot\frac{1}{n(n+1)} = \sum_{n=1}^\infty \frac{1}{n+1} = \infty.$$
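The behaviour of this counterexample can be checked with partial sums. This is a minimal sketch, assuming the distribution $\mathbb{P}(X=n)=\frac{1}{n(n+1)}$ for $n\geq 1$; the function name `partial_sums` is an illustrative choice. The total probability approaches $1$, while the partial expectation $\sum_{n\le n_{\max}} n\,\mathbb{P}(X=n)$ keeps growing.

```python
from fractions import Fraction

def partial_sums(n_max):
    """Return (total probability, partial expectation) over n = 1..n_max."""
    total_prob = Fraction(0)
    partial_mean = Fraction(0)
    for n in range(1, n_max + 1):
        p = Fraction(1, n * (n + 1))  # P(X = n)
        total_prob += p
        partial_mean += n * p         # contributes 1 / (n + 1)
    return total_prob, partial_mean

for n_max in (10, 100, 1000):
    prob, mean = partial_sums(n_max)
    print(n_max, float(prob), float(mean))
```

The total probability over $n\le n_{\max}$ is exactly $n_{\max}/(n_{\max}+1)$, while the partial expectation grows roughly like $\ln n_{\max}$. That growth is slow but unbounded, which is why $\mathbb{E}[X]=\infty$ even though $X$ is almost surely finite.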

Textbooks

• An Introduction to Stochastic Modeling, Fourth Edition by Pinsky and Karlin (freely available through the university library)

• Essentials of Stochastic Processes, Third Edition by Durrett (freely available through the university library)

To reiterate, the textbooks are freely available through the university library. Note that you must be connected to the university Wi-Fi or VPN to access the ebooks from the library links. Furthermore, the library links take some time to populate, so do not be alarmed if the webpage looks bare for a few seconds.
