Computer Science | Database | NIT1201

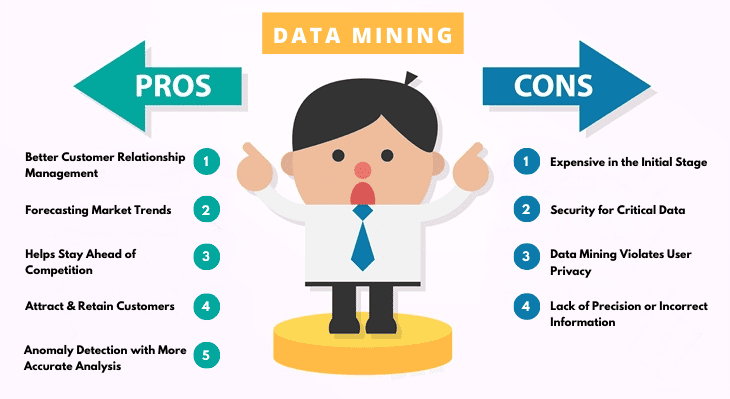

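A database can be a powerful tool that does what computer programs do best: store, manipulate, and display data.

Databases not only play a role in many applications, they often play a critical one. If data is not stored correctly, it can become corrupted and programs cannot use it meaningfully. If data is poorly organized, a program may be unable to find what it needs in a reasonable time.

Unless the database stores its data safely and efficiently, the application is useless no matter how well the rest of the system is designed.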


Oracle PKI Integration

Oracle Advanced Security includes Oracle PKI (public key infrastructure) integration for authentication and single sign-on. You can integrate Oracle-based applications with the PKI authentication and encryption framework, using the following tools:

- Oracle Wallet Manager creates an encrypted Oracle Wallet, used for digital certificates.

- Oracle Enterprise Login Assistant creates an obfuscated decrypted Oracle Wallet, used by Oracle applications for Secure Sockets Layer (SSL) encryption. The Oracle Wallet is then stored on the file system or in Oracle Internet Directory.

Active Directory

Oracle customers with large user populations often require enterprise-level security and schema management. Oracle security and administration are integrated with Windows 2000 through Active Directory, Microsoft's directory service.

Oracle9i provides native authentication and single sign-on through Windows 2000 authentication mechanisms. Native authentication uses Kerberos security protocols on Windows 2000 and allows the operating system to perform user identification for Oracle databases. With native authentication enabled, users can access Oracle applications simply by logging into Windows. Single sign-on eliminates the need for multiple security credentials and simplifies administration.

Oracle native authentication also supports Oracle9i enterprise users and roles. Traditionally, administrators had to create a database user on every database for each Windows user, which often added up to thousands of different database users. Oracle enterprise user mappings allow many Windows users to access a database as a single global database user. These enterprise user mappings are stored in Active Directory. For example, an entire organizational unit in Active Directory can be mapped to one database user.
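The mapping idea can be pictured with a small in-memory sketch. This is not Oracle's or Active Directory's implementation; the table `MAPPINGS`, the function `resolve_global_user`, and all names in it are hypothetical, and the rule "most specific organizational unit wins" is an assumption made for illustration.

```python
from typing import Dict, Optional

# Hypothetical mapping table: directory subtree (DN suffix) -> global database user.
# All names are illustrative; none come from Oracle or Active Directory.
MAPPINGS: Dict[str, str] = {
    "ou=sales,dc=example,dc=com": "SALES_APP",
    "ou=emea,ou=sales,dc=example,dc=com": "SALES_EMEA",
    "ou=finance,dc=example,dc=com": "FIN_APP",
}


def resolve_global_user(user_dn: str, mappings: Dict[str, str]) -> Optional[str]:
    """Return the database user mapped to the most specific matching subtree."""
    dn = user_dn.lower()
    best_suffix = None
    for suffix in mappings:
        # A user belongs to a subtree when its DN ends with ",<suffix>".
        if dn.endswith("," + suffix.lower()):
            if best_suffix is None or len(suffix) > len(best_suffix):
                best_suffix = suffix
    return mappings[best_suffix] if best_suffix is not None else None


if __name__ == "__main__":
    print(resolve_global_user("cn=Alice,ou=EMEA,ou=Sales,dc=example,dc=com", MAPPINGS))
    print(resolve_global_user("cn=Bob,ou=Sales,dc=example,dc=com", MAPPINGS))
```

Every user under the sales unit shares one database account here, so no per-user account has to be created on the database side.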

Oracle also stores enterprise role mappings in Active Directory. With such roles, a database privilege can be managed at the domain level through directories. This is accomplished by assigning Windows 2000 users and groups to Oracle enterprise roles registered in Active Directory. Enterprise users and roles reduce administrative overhead while increasing scalability of database solutions.
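As a rough illustration of managing privileges through roles rather than per user, the sketch below resolves a user's Windows groups to enterprise roles and then to privileges. The group names, role names, privilege strings, and the function `effective_privileges` are all hypothetical, and the two-level lookup is a simplification assumed for this example.

```python
from typing import Dict, Iterable, Set

# Hypothetical directory data: Windows group -> enterprise roles.
GROUP_TO_ROLES: Dict[str, Set[str]] = {
    "EXAMPLE\\Analysts": {"REPORTING"},
    "EXAMPLE\\DBAs": {"REPORTING", "ADMIN"},
}

# Hypothetical role definitions: enterprise role -> database privileges.
ROLE_TO_PRIVILEGES: Dict[str, Set[str]] = {
    "REPORTING": {"SELECT ON sales.orders"},
    "ADMIN": {"CREATE USER", "ALTER SYSTEM"},
}


def effective_privileges(groups: Iterable[str]) -> Set[str]:
    """Collect the privileges granted through every role of every group."""
    privileges: Set[str] = set()
    for group in groups:
        for role in GROUP_TO_ROLES.get(group, set()):
            privileges |= ROLE_TO_PRIVILEGES.get(role, set())
    return privileges


if __name__ == "__main__":
    print(sorted(effective_privileges(["EXAMPLE\\Analysts"])))
```

Changing what a role grants, or which groups hold it, is a single edit in the directory data and takes effect for every member of the group.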

Oracle Net Naming with Active Directory

Oracle also uses Active Directory to improve management of database connectivity information. Traditionally, users reference databases with Oracle Net-style names resolved through the tnsnames.ora configuration file. This file has to be administered on each client computer.

Oracle Net Naming with Active Directory stores and resolves names through Active Directory. By centralizing such information in a directory, Oracle Net Naming with Active Directory eliminates administrative overhead and relieves users from configuring their individual client computers.
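To make the contrast concrete, the sketch below first renders the kind of entry each client would otherwise keep in its own `tnsnames.ora`, then resolves the same net service name from a single shared table that stands in for the directory. The alias, host, port, and service name are made-up values, and `render_tns_entry`, `CENTRAL_DIRECTORY`, and `resolve` are hypothetical helpers, not Oracle APIs.

```python
from typing import Dict


def render_tns_entry(alias: str, host: str, port: int, service_name: str) -> str:
    """Render one net service name entry in tnsnames.ora syntax."""
    return (
        f"{alias} =\n"
        f"  (DESCRIPTION =\n"
        f"    (ADDRESS = (PROTOCOL = TCP)(HOST = {host})(PORT = {port}))\n"
        f"    (CONNECT_DATA = (SERVICE_NAME = {service_name}))\n"
        f"  )\n"
    )


# Stand-in for the directory: one central table instead of a file per client.
CENTRAL_DIRECTORY: Dict[str, Dict[str, object]] = {
    "SALES": {"host": "dbhost.example.com", "port": 1521, "service_name": "sales.example.com"},
}


def resolve(alias: str) -> Dict[str, object]:
    """Look up connection details by name; raises KeyError for unknown names."""
    return CENTRAL_DIRECTORY[alias.upper()]


if __name__ == "__main__":
    print(render_tns_entry("SALES", "dbhost.example.com", 1521, "sales.example.com"))
    print(resolve("sales"))
```

With the file-based approach, a change of host means editing the entry on every client; with the central table, one update is seen by all clients that resolve the name.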

Various tools in Windows 2000, such as Windows Explorer and Active Directory Users and Computers, have been enhanced. Users can now connect to databases and test database connectivity from these tools.

Oracle tools have also been enhanced. Database Configuration Assistant automatically registers database objects with Active Directory. Oracle Net Manager, meanwhile, registers net service objects with the directory. These enhancements further simplify administration.

ORACLEMTSRecoveryService

Microsoft Transaction Server is used in the middle tier as an application server for COM/COM+ objects and transactions in distributed environments.

ORACLEMTSRecoveryService allows Oracle9i databases to be used as resource managers in Microsoft Transaction Server-coordinated transactions, providing strong integration between Oracle solutions and Microsoft Transaction Server. ORACLEMTSRecoveryService can operate with Oracle9i databases running on any operating system.

Oracle takes advantage of a native implementation and also stores recovery information in the Oracle9i database itself. ORACLEMTSRecoveryService allows development in all industry-wide data access interfaces, including Oracle Objects for OLE (OO4O), Oracle Call Interface (OCI), ActiveX Data Objects (ADO), OLE DB, and Open Database Connectivity (ODBC). The Oracle APIs, OO4O and OCI, offer the greatest efficiency.
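The role of a resource manager in a coordinated transaction can be sketched with a toy two-phase commit. This is a generic illustration, not the ORACLEMTSRecoveryService or Microsoft Transaction Server implementation; the `ResourceManager` class, `run_transaction` function, and the in-memory `recovery_log` are hypothetical stand-ins.

```python
from typing import List, Tuple


class ResourceManager:
    """Toy resource manager that votes in phase 1 and obeys the outcome in phase 2."""

    def __init__(self, name: str, can_commit: bool = True) -> None:
        self.name = name
        self.can_commit = can_commit
        self.state = "active"
        # Stand-in for recovery information kept by the resource itself.
        self.recovery_log: List[Tuple[str, str]] = []

    def prepare(self, txid: str) -> bool:
        if self.can_commit:
            self.state = "prepared"
            self.recovery_log.append((txid, "prepared"))
            return True
        self.state = "aborted"
        return False

    def commit(self, txid: str) -> None:
        self.state = "committed"
        self.recovery_log.append((txid, "committed"))

    def rollback(self, txid: str) -> None:
        self.state = "aborted"
        self.recovery_log.append((txid, "rolled back"))


def run_transaction(txid: str, managers: List[ResourceManager]) -> str:
    """Coordinator: commit only if every resource manager votes yes."""
    votes = [rm.prepare(txid) for rm in managers]  # phase 1: collect votes
    if all(votes):
        for rm in managers:  # phase 2: commit everywhere
            rm.commit(txid)
        return "committed"
    for rm in managers:  # phase 2: roll back everywhere
        rm.rollback(txid)
    return "aborted"


if __name__ == "__main__":
    print(run_transaction("tx1", [ResourceManager("db_a"), ResourceManager("db_b")]))
    print(run_transaction("tx2", [ResourceManager("db_a"), ResourceManager("db_b", can_commit=False)]))
```

The log written at prepare time is what lets a participant that crashes between the two phases learn, on restart, which transactions still need an outcome.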
