统计代写|数据可视化代写Data visualization代考|DTSA5304

如果你也在 怎样代写数据可视化Data visualization这个学科遇到相关的难题,请随时右上角联系我们的24/7代写客服。

数据可视化是将信息转化为视觉背景的做法,如地图或图表,使数据更容易被人脑理解并从中获得洞察力。数据可视化的主要目标是使其更容易在大型数据集中识别模式、趋势和异常值。

statistics-lab™ 为您的留学生涯保驾护航 在代写数据可视化Data visualization方面已经树立了自己的口碑, 保证靠谱, 高质且原创的统计Statistics代写服务。我们的专家在代写数据可视化Data visualization代写方面经验极为丰富,各种代写数据可视化Data visualization相关的作业也就用不着说。

我们提供的数据可视化Data visualization及其相关学科的代写,服务范围广, 其中包括但不限于:

- Statistical Inference 统计推断

- Statistical Computing 统计计算

- Advanced Probability Theory 高等概率论

- Advanced Mathematical Statistics 高等数理统计学

- (Generalized) Linear Models 广义线性模型

- Statistical Machine Learning 统计机器学习

- Longitudinal Data Analysis 纵向数据分析

- Foundations of Data Science 数据科学基础

统计代写|数据可视化代写Data visualization代考|Related Work

First approaches in brain mapping used rigid models and spatial distributions. In [26], a stereotactic atlas is expressed in an orthogonal grid system, which is rescaled to a patient brain, assuming one-to-one correspondences of specific landmarks. Similar approaches are discussed in $[2,5,11]$ using elastic transformations. The variation in brain shape and geometry is of significant extent between different individuals of one species. Static rigid models are not sufficient to describe appropriately such inter-subject variabilities.

Deformable models were introduced as a means to deal with the high complexity of brain surfaces by providing atlases that can be elastically deformed to match a patient brain. Deformable models use snakes [20], B-spline surfaces [24], or other surface-based deformation algorithms $[8,9]$. Feature matching is performed by minimizing a cost function, which is based on an error measure defined by a sum measuring deformation and similarity. The definition of the cost function is crucial. Some approaches rely on segmentation of the main sulci guided by a user [4, 27, 29], while others automatically generate a structural description of the surface.

Level set methods, as described in [21], are widely used for convex shapes. These methods, based on local energy minimization, achieve shape recognition requiring little known information about the surface. Initialization must be done close to surface boundaries, and interactive seed placement is required. Several approaches have been proposed to perform automatically the seeding process and adapt the external propagation force [1], but small features can still be missed. Using a multiresolution representation of the cortical models, patient and atlas meshes are matched progressively by the method described in [16]. Folds are annotated according to size at a given resolution. The choice of the resolution is crucial. It is not guaranteed that same features are present at the same resolution for different brains.

Many other automatic approaches exist, including techniques using active ribbons [10, 13], graph representations [3, 22], and region growing [18]. A survey is provided in [28]. Even though some of the approaches provide good results, the highly non-convex shape of the cortical surface, in combination with inter-subject variability and feature-size variability, leads to problems and may prevent a correct feature recognition/segmentation and mapping without user intervention.

Our approach is an automated approach that can deal with highly non-convex shapes, since we segment the brain into cortical regions, and with feature-size as well as inter-subject variability, since it is based on discrete curvature behavior. Moreover, isosurface extraction, surface segmentation, and topology graphs are embedded in a graphical system supporting visual understanding.

统计代写|数据可视化代写Data visualization代考|Brain Mapping

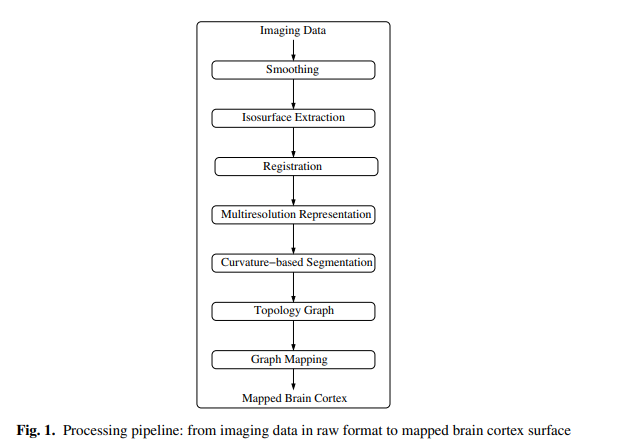

Our brain mapping approach is based on a pipeline of automated steps. Figure 1 illustrates the sequence of individual processing steps.

The input for our processing pipeline is discrete imaging data in some raw format. Typically, imaging techniques produce stacks of aligned images. If the images are not aligned, appropriate alignment tools must be applied [25]. Volumetric reconstruction results in a volume data set, a trivariate scalar field.

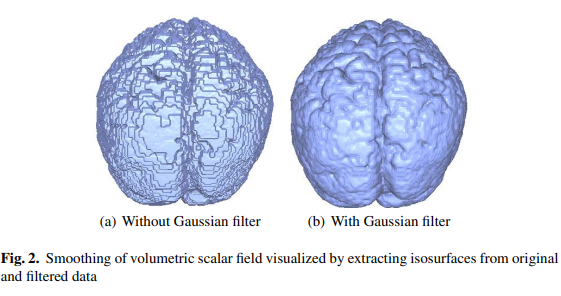

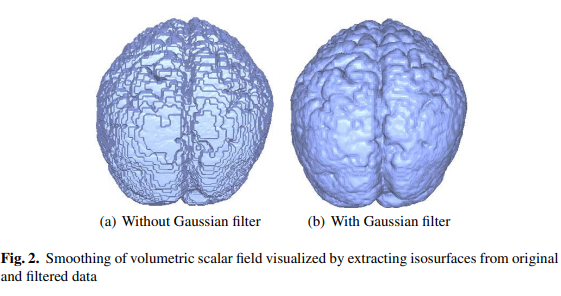

Depending on the used imaging technique, a scanned data set may contain more or less noise. We mainly operate on fMRI data sets, thus having to deal with significant noise levels. We use a three-dimensional discrete Gaussian smoothing filter, which eliminates high-frequency noise without affecting visibly the characteristics of the three-dimensional scalar field. The size of the Gaussian filter must be small. We use a $3 \times 3 \times 3$ mask locally to smooth every value of a rectilinear, regular hexahedral mesh. Figure 2 shows the effect of the smoothing filter applied to a three-dimensional scalar field by extracting isosurfaces from the original and filtered data set.

After this preprocessing step, we extract the geometry of the brain cortex from the volume data. The boundary of the brain cortex is obtained via an isosurface extraction step, as described in Sect. 4. If desired, isosurface extraction can be controlled and supervised in a fashion intuitive to neuroscientists.

Once the geometry of the brain cortices is available for both atlas brain and a user brain, the two surfaces can be registered. Since our brain mapping approach is feature-based, we perform the registration step by a simple and fast rigid body transformation. For an overview and a comparison of rigid body transformation methods, we refer to [6].

数据可视化代考

统计代写|数据可视化代写Data visualization代考|Related Work

最初的脑映射方法使用刚性模型和空间分布。在[26]中,立体定向图谱以正交网格系统表示,该网格系统被重新缩放到患者的大脑,假设特定地标的一对一对应。在$[2,5,11]$中讨论了使用弹性变换的类似方法。在同一物种的不同个体之间,大脑形状和几何形状的差异具有显著的程度。静态刚性模型不足以恰当地描述这种主体间的变化。

通过提供可弹性变形以匹配患者大脑的地图集,引入可变形模型作为处理大脑表面高度复杂性的一种手段。可变形模型使用蛇形[20]、b样条曲面[24]或其他基于曲面的变形算法[8,9]。特征匹配是通过最小化代价函数来实现的,代价函数是基于由测量变形和相似度的总和定义的误差度量。成本函数的定义至关重要。一些方法依赖于用户引导的主沟分割[4,27,29],而其他方法则自动生成表面的结构描述。

如[21]所述,水平集方法被广泛用于凸形状。这些方法以局部能量最小化为基础,实现了在已知曲面信息很少的情况下的形状识别。初始化必须在接近表面边界的地方完成,并且需要交互式种子放置。已经提出了几种方法来执行自动播种过程并适应外部传播力[1],但仍然可能遗漏小特征。使用皮层模型的多分辨率表示,患者和图谱网格通过[16]中描述的方法逐步匹配。在给定的分辨率下,根据大小对折叠进行注释。决议的选择至关重要。不能保证相同的特征在不同的大脑中以相同的分辨率呈现。

存在许多其他自动方法,包括使用活动带[10,13]、图形表示[3,22]和区域生长[18]的技术。[28]提供了一项调查。尽管一些方法提供了良好的结果,但皮质表面的高度非凸形状,加上主体间的可变性和特征尺寸的可变性,会导致问题,并可能在没有用户干预的情况下阻止正确的特征识别/分割和映射。

我们的方法是一种自动化的方法,可以处理高度非凸的形状,因为我们将大脑分割成皮质区域,并且具有特征大小和主体间的可变性,因为它是基于离散曲率行为。此外,等值面提取、曲面分割和拓扑图嵌入到图形系统中,支持视觉理解。

统计代写|数据可视化代写Data visualization代考|Brain Mapping

我们的大脑映射方法是基于自动化步骤的流水线。图1说明了各个处理步骤的顺序。

我们处理管道的输入是一些原始格式的离散成像数据。通常,成像技术产生一堆对齐的图像。如果图像没有对齐,则必须应用适当的对齐工具[25]。体积重建的结果是一个体积数据集,一个三元标量场。

根据所使用的成像技术,扫描的数据集可能包含或多或少的噪声。我们主要对fMRI数据集进行操作,因此必须处理显著的噪声水平。我们使用三维离散高斯平滑滤波器,在不明显影响三维标量场特性的情况下消除高频噪声。高斯滤波器的大小必须很小。我们使用$3 \ × 3 \ × 3$局部掩码来平滑一个直线型、正六面体网格的每个值。图2显示了通过从原始和过滤后的数据集中提取等值面,将平滑滤波器应用于三维标量场的效果。

在此预处理步骤之后,我们从体积数据中提取大脑皮层的几何形状。大脑皮层的边界是通过等值面提取步骤获得的,如第4节所述。如果需要,等值面提取可以以神经科学家直观的方式进行控制和监督。

一旦图谱脑和用户脑的大脑皮层的几何形状可用,这两个表面就可以被注册。由于我们的脑映射方法是基于特征的,我们通过一个简单而快速的刚体转换来执行注册步骤

统计代写请认准statistics-lab™. statistics-lab™为您的留学生涯保驾护航。

金融工程代写

金融工程是使用数学技术来解决金融问题。金融工程使用计算机科学、统计学、经济学和应用数学领域的工具和知识来解决当前的金融问题,以及设计新的和创新的金融产品。

非参数统计代写

非参数统计指的是一种统计方法,其中不假设数据来自于由少数参数决定的规定模型;这种模型的例子包括正态分布模型和线性回归模型。

广义线性模型代考

广义线性模型(GLM)归属统计学领域,是一种应用灵活的线性回归模型。该模型允许因变量的偏差分布有除了正态分布之外的其它分布。

术语 广义线性模型(GLM)通常是指给定连续和/或分类预测因素的连续响应变量的常规线性回归模型。它包括多元线性回归,以及方差分析和方差分析(仅含固定效应)。

有限元方法代写

有限元方法(FEM)是一种流行的方法,用于数值解决工程和数学建模中出现的微分方程。典型的问题领域包括结构分析、传热、流体流动、质量运输和电磁势等传统领域。

有限元是一种通用的数值方法,用于解决两个或三个空间变量的偏微分方程(即一些边界值问题)。为了解决一个问题,有限元将一个大系统细分为更小、更简单的部分,称为有限元。这是通过在空间维度上的特定空间离散化来实现的,它是通过构建对象的网格来实现的:用于求解的数值域,它有有限数量的点。边界值问题的有限元方法表述最终导致一个代数方程组。该方法在域上对未知函数进行逼近。[1] 然后将模拟这些有限元的简单方程组合成一个更大的方程系统,以模拟整个问题。然后,有限元通过变化微积分使相关的误差函数最小化来逼近一个解决方案。

tatistics-lab作为专业的留学生服务机构,多年来已为美国、英国、加拿大、澳洲等留学热门地的学生提供专业的学术服务,包括但不限于Essay代写,Assignment代写,Dissertation代写,Report代写,小组作业代写,Proposal代写,Paper代写,Presentation代写,计算机作业代写,论文修改和润色,网课代做,exam代考等等。写作范围涵盖高中,本科,研究生等海外留学全阶段,辐射金融,经济学,会计学,审计学,管理学等全球99%专业科目。写作团队既有专业英语母语作者,也有海外名校硕博留学生,每位写作老师都拥有过硬的语言能力,专业的学科背景和学术写作经验。我们承诺100%原创,100%专业,100%准时,100%满意。

随机分析代写

随机微积分是数学的一个分支,对随机过程进行操作。它允许为随机过程的积分定义一个关于随机过程的一致的积分理论。这个领域是由日本数学家伊藤清在第二次世界大战期间创建并开始的。

时间序列分析代写

随机过程,是依赖于参数的一组随机变量的全体,参数通常是时间。 随机变量是随机现象的数量表现,其时间序列是一组按照时间发生先后顺序进行排列的数据点序列。通常一组时间序列的时间间隔为一恒定值(如1秒,5分钟,12小时,7天,1年),因此时间序列可以作为离散时间数据进行分析处理。研究时间序列数据的意义在于现实中,往往需要研究某个事物其随时间发展变化的规律。这就需要通过研究该事物过去发展的历史记录,以得到其自身发展的规律。

回归分析代写

多元回归分析渐进(Multiple Regression Analysis Asymptotics)属于计量经济学领域,主要是一种数学上的统计分析方法,可以分析复杂情况下各影响因素的数学关系,在自然科学、社会和经济学等多个领域内应用广泛。

MATLAB代写

MATLAB 是一种用于技术计算的高性能语言。它将计算、可视化和编程集成在一个易于使用的环境中,其中问题和解决方案以熟悉的数学符号表示。典型用途包括:数学和计算算法开发建模、仿真和原型制作数据分析、探索和可视化科学和工程图形应用程序开发,包括图形用户界面构建MATLAB 是一个交互式系统,其基本数据元素是一个不需要维度的数组。这使您可以解决许多技术计算问题,尤其是那些具有矩阵和向量公式的问题,而只需用 C 或 Fortran 等标量非交互式语言编写程序所需的时间的一小部分。MATLAB 名称代表矩阵实验室。MATLAB 最初的编写目的是提供对由 LINPACK 和 EISPACK 项目开发的矩阵软件的轻松访问,这两个项目共同代表了矩阵计算软件的最新技术。MATLAB 经过多年的发展,得到了许多用户的投入。在大学环境中,它是数学、工程和科学入门和高级课程的标准教学工具。在工业领域,MATLAB 是高效研究、开发和分析的首选工具。MATLAB 具有一系列称为工具箱的特定于应用程序的解决方案。对于大多数 MATLAB 用户来说非常重要,工具箱允许您学习和应用专业技术。工具箱是 MATLAB 函数(M 文件)的综合集合,可扩展 MATLAB 环境以解决特定类别的问题。可用工具箱的领域包括信号处理、控制系统、神经网络、模糊逻辑、小波、仿真等。