Regression and optimization


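Machine learning (ML) is a type of artificial intelligence (AI) that allows software applications to become more accurate at predicting outcomes without being explicitly programmed. Machine learning algorithms use historical data as input to predict new output values.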


Linear regression and gradient descent

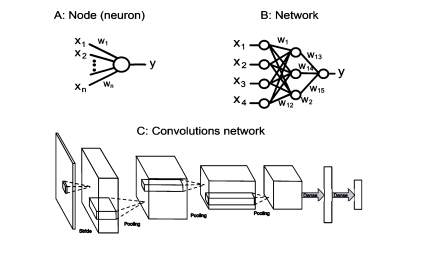

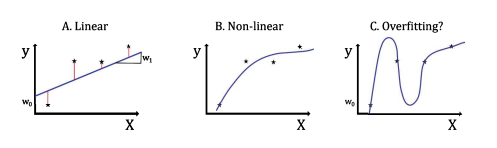

Linear regression is usually taught in high school, but my hope is that this book will provide a new appreciation for this subject and its associated methods. It is the simplest form of machine learning, and while linear regression seems limited in scope, linear methods still have practical relevance since many problems are at least locally approximately linear. Furthermore, we use them here to formalize machine learning methods and specifically to introduce some methods that we can generalize later to non-linear situations. Supervised machine learning is essentially regression, although the recent success of machine learning methods compared to previous approaches to modeling and regression lies in their applicability to high-dimensional data with non-linear relations, and in the ability to scale these methods to complex models. Linear regression can be solved analytically. However, the non-linear extensions will usually not be analytically solvable. Hence, we introduce here the formalization of iterative training methods that underlie much of supervised learning.

To discuss linear regression, we will follow an example of describing house prices. The table on the left in Figure 5.1 lists the size in square feet and the corresponding asking prices of some houses. These data points are plotted in the graph on the right in Figure 5.1. The question is, can we predict from these data the likely asking price for houses of different sizes?

To make this prediction we assume that the house price depends essentially on the size of the house in a linear way. That is, a house twice the size should cost twice the money. Of course, this linear model clearly does not capture all the dimensions of the problem. Some houses are old, others might be new. Some houses might need repair and other houses might have some special features. And, as everyone in the real estate business knows, location is also very important. Thus, we should keep in mind that there might be unobserved, so-called latent dimensions in the data that might be important in explaining the relations. However, we ignore such hidden causes at this point and just use the linear model over size as our hypothesis.

The linear model of the relation between the house size and the asking price can be made mathematically explicit with the linear equation

$$
y\left(x ; w_{1}, w_{2}\right)=w_{1} x+w_{2}
$$

where $y$ is the asking price, $x$ is the size of the house, and $w_{1}$ and $w_{2}$ are model parameters. Note that $y$ is a function of $x$, and here we follow a notation where the parameters of a function are included after a semi-colon. If the parameters are given, then this function can be used to predict the price of a house for any size. This is the general theme of supervised learning; we assume a specific function with parameters that we can use to predict new data.
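As a minimal sketch of this idea, the linear model can be written as a small Python function. The function name `predict` and the parameter values below are illustrative assumptions, not values fitted to the data in Figure 5.1.

```python
import numpy as np

def predict(x, w1, w2):
    """Linear model y(x; w1, w2) = w1 * x + w2."""
    return w1 * np.asarray(x, dtype=float) + w2

# Hypothetical parameters, chosen only for illustration (not fitted values).
w1, w2 = 0.2, 50.0

# Once the parameters are given, the model predicts a price for any size.
sizes = np.array([1000.0, 2000.0])
prices = predict(sizes, w1, w2)
print(prices)
```

Given fixed parameters, the same function can be evaluated at sizes that never appeared in the training data, which is what prediction means here.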

Error surface and challenges for gradient descent

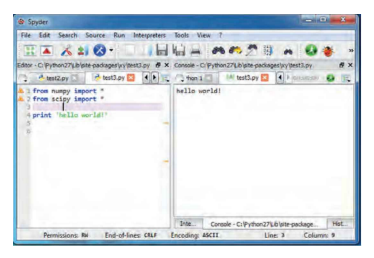

It is instructive to look at the precise numerical results and details when implementing the whole procedure. We first import our common NumPy and plotting routines and then define the data given in the table in Fig. 5.1. This figure also shows a plot of these data.

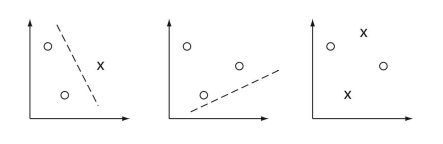

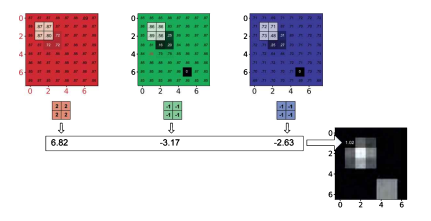

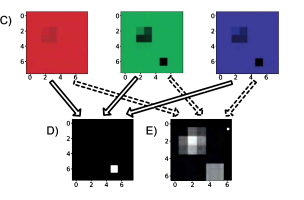

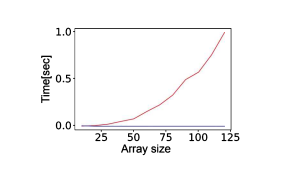

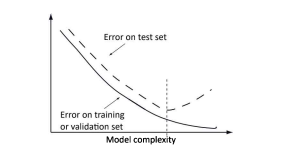

We now write the regression code as shown in Listing 5.2. First we set the starting values for the parameters $w_{1}$ and $w_{2}$, and we initialize an empty array to store the values of the loss function $L$ in each iteration. We also set the update (learning) rate $\alpha$ to a small value. We then perform ten iterations to update the parameters $w_{1}$ and $w_{2}$ with the gradient descent rule. Note that an array index of $-1$ refers to the last element of a Python array. The result of this program is shown in Fig. 5.2. The fit of the function shown in Fig. 5.2A does not look right at all. To see what is occurring, it is good to plot the values of the loss function as shown in Fig. 5.2B. As can be seen, the loss function gets bigger, not smaller as we would have expected, and the values themselves are extremely large.
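The procedure described above can be sketched as follows. This is not Listing 5.2 itself: the data values are hypothetical stand-ins for the table in Fig. 5.1, the loss is assumed to be half the mean squared error, and the starting values and learning rate are arbitrary choices made to reproduce the diverging behavior.

```python
import numpy as np

# Hypothetical data standing in for the table in Fig. 5.1
# (sizes in square feet, prices in arbitrary units).
x = np.array([900.0, 1200.0, 1500.0, 1800.0, 2400.0])
y = np.array([190.0, 240.0, 310.0, 350.0, 480.0])

w1, w2 = 0.1, 0.0      # assumed starting values
alpha = 0.01           # looks small, but is far too large for unscaled data
L = np.array([])       # loss value recorded in each iteration

for _ in range(10):
    err = w1 * x + w2 - y                  # prediction error per example
    L = np.append(L, 0.5 * np.mean(err ** 2))
    # Gradient descent rule for the assumed loss 0.5 * mean(err**2).
    w1 = w1 - alpha * np.mean(err * x)
    w2 = w2 - alpha * np.mean(err)

print(L[0], L[-1])     # L[-1] is the last recorded loss
```

With house sizes in the thousands, the gradient with respect to $w_{1}$ is enormous, so even this seemingly small rate makes the recorded loss grow from one iteration to the next.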

The rising loss value is a hint that the learning rate is too large. The reason that this can happen is illustrated in Fig. 5.2C. This graph is a cartoon of a quadratic loss surface. When the update term is too large, the update step can overshoot the minimum. In such a case, the loss after the step can be even larger than before, and since the slope at the new point is also steeper, the next step is larger still. In this way, every step can increase the loss value, and the values will soon exceed those representable in a computer.
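A one-dimensional toy case makes the overshooting concrete. The sketch below assumes the quadratic loss $L(w) = w^2$, which is not the house-price loss but has the same bowl shape as the cartoon; the function name `descend` is a hypothetical helper.

```python
def descend(alpha, w=1.0, steps=5):
    """Gradient descent on L(w) = w**2, whose gradient is 2*w."""
    losses = [w ** 2]
    for _ in range(steps):
        w = w - alpha * 2.0 * w   # update: w <- w - alpha * dL/dw
        losses.append(w ** 2)
    return losses

small = descend(alpha=0.1)   # each step multiplies w by 0.8: loss shrinks
large = descend(alpha=1.5)   # each step multiplies w by -2: loss grows

print(small)
print(large)
```

For this loss, any rate above 1 makes each step land farther from the minimum than where it started, so the loss grows geometrically.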

So, let's try it again with a much smaller learning rate of alpha $=0.00000001$, which was chosen after several trials to get what looks like the best result. The results shown in Fig. 5.2 certainly look much better, although still not quite right. The fitted curve does not seem to balance the data points well, and while the loss values decrease rapidly at first, they seem to get stuck at a small value.

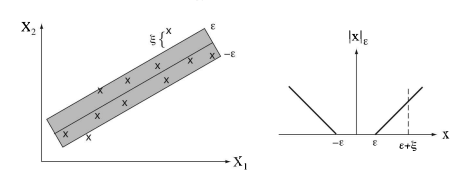

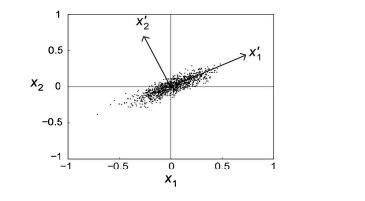

To look more closely at what is going on, we can plot the loss function for several values around the expected parameter values. This is shown in Fig. 5.2C. This reveals that the change of the loss function with respect to the parameter $w_{2}$ is large, but that changing the parameter $w_{1}$ on the same scale has little influence on the loss value. To fix this problem we would have to choose a separate learning rate for each parameter, which is not practical in higher-dimensional models. There are much more sophisticated solutions such as Amari's Natural Gradient, but a quick fix for many applications is to normalize the data so that the ranges are between 0 and 1. Thus, by adding the normalization code and setting the learning rate to alpha $=0.04$, we get the solution shown in Fig. 5.2. The solution is much better, although the learning path is still not optimal. However, this is a solution that is sufficient most of the time.
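The normalization fix can be sketched as below. Min-max scaling is assumed here as one common way to map the data into the range 0 to 1; the data values, the iteration count, and the helper name `min_max` are hypothetical, and only the learning rate of 0.04 comes from the text.

```python
import numpy as np

# Hypothetical data standing in for the table in Fig. 5.1.
x = np.array([900.0, 1200.0, 1500.0, 1800.0, 2400.0])
y = np.array([190.0, 240.0, 310.0, 350.0, 480.0])

def min_max(v):
    """Rescale an array linearly so that its values lie in [0, 1]."""
    return (v - v.min()) / (v.max() - v.min())

xn, yn = min_max(x), min_max(y)

w1, w2 = 0.0, 0.0      # assumed starting values
alpha = 0.04           # learning rate quoted in the text
losses = []

for _ in range(500):   # iteration count is an arbitrary choice
    err = w1 * xn + w2 - yn
    losses.append(0.5 * np.mean(err ** 2))
    # Simultaneous update of both parameters from the same error vector.
    w1, w2 = w1 - alpha * np.mean(err * xn), w2 - alpha * np.mean(err)

print(losses[0], losses[-1])
```

After scaling, both parameters influence the loss on a comparable scale, so a single learning rate works reasonably for both. Predictions then have to be mapped back to the original units by inverting the scaling.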

Advanced gradient optimization

Learning in machine learning means finding parameters $\mathbf{w}$ of the model that minimize the loss function. There are many methods to minimize a function, and each one would constitute a learning algorithm. However, the workhorse in machine learning is usually some form of the gradient descent algorithm that we encountered earlier. Formally, the basic gradient descent minimizes the sum of the loss values over all training examples, which is called a batch algorithm as all training examples form the batch for minimization. Let us assume we have $N$ training data; then gradient descent iterates the equation

$$
w_{i} \leftarrow w_{i}+\Delta w_{i}
$$

with

$$
\Delta w_{i}=-\frac{\alpha}{N} \sum_{k=1}^{N} \frac{\partial \mathcal{L}\left(y^{(k)}, \mathbf{x}^{(k)} \mid \mathbf{w}\right)}{\partial w_{i}}
$$

where $N$ is the number of training samples. We can also write this compactly for all parameters using vector notation and the Nabla operator $\nabla$ as

$$
\Delta \mathbf{w}=-\frac{\alpha}{N} \sum_{i=1}^{N} \nabla \mathcal{L}^{(i)} \tag{5.10}
$$

with $\mathcal{L}^{(i)}=\mathcal{L}\left(y^{(i)}, \mathbf{x}^{(i)} \mid \mathbf{w}\right)$.
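The batch update in vector form can be sketched with NumPy. The per-example loss is assumed to be the squared error $\frac{1}{2}(\mathbf{x}^{(i)} \cdot \mathbf{w} - y^{(i)})^2$ of a linear model; the synthetic data, the function name `batch_step`, and the learning rate are illustrative assumptions.

```python
import numpy as np

def batch_step(w, X, y, alpha):
    """One batch update: w <- w - (alpha / N) * sum_i grad L^(i)."""
    N = X.shape[0]
    residual = X @ w - y                  # shape (N,)
    grads = residual[:, None] * X         # per-example gradients, shape (N, d)
    return w - (alpha / N) * grads.sum(axis=0)

# Synthetic, noise-free data (hypothetical): a feature in [0, 1] plus a bias column.
rng = np.random.default_rng(0)
X = np.column_stack([rng.uniform(0.0, 1.0, 50), np.ones(50)])
w_true = np.array([2.0, -1.0])
y = X @ w_true

w = np.zeros(2)
for _ in range(1000):
    w = batch_step(w, X, y, alpha=0.5)
print(w)
```

Every step touches all $N$ examples, which is what makes this a batch algorithm.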

With a sufficiently small learning rate $\alpha$, this will result in a strictly monotonically decreasing learning curve. However, with many training data, a large number of training examples have to be kept in memory. Also, batch learning seems unrealistic biologically or in situations where training examples only arrive over a period of time. So-called online algorithms that use the training data when they arrive are therefore often desirable. The online gradient descent would consider only one training example at a time,

$$
\Delta \mathbf{w}=-\alpha \nabla \mathcal{L}^{(i)}
$$

and then use another training example for the next update. If the training examples appear randomly in such example-wise training, then the updates perform a random walk around the true gradient descent path. This algorithm is hence called stochastic gradient descent (SGD). It can be seen as an approximation of the basic gradient descent algorithm, and the randomness has some positive effects on the search path, such as avoiding oscillations or getting stuck in local minima. In practice it is now common to use something in between, iterating over so-called mini-batches of the training data. This is formally still a stochastic gradient descent, but it combines the advantages of a batch algorithm with the reality of limited memory capacity.
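A minimal sketch of mini-batch training, again assuming a linear model with squared-error loss: the function name `minibatch_sgd`, its default values, and the synthetic data are all hypothetical. Setting `batch_size=1` gives the online (example-wise) variant, and `batch_size=N` recovers the batch algorithm.

```python
import numpy as np

def minibatch_sgd(X, y, alpha=0.3, batch_size=8, epochs=200, seed=0):
    """Mini-batch stochastic gradient descent for a linear model."""
    rng = np.random.default_rng(seed)
    N, d = X.shape
    w = np.zeros(d)
    for _ in range(epochs):
        order = rng.permutation(N)             # visit examples in random order
        for start in range(0, N, batch_size):
            idx = order[start:start + batch_size]
            residual = X[idx] @ w - y[idx]
            # Average gradient over the current mini-batch only.
            w -= alpha * (X[idx].T @ residual) / len(idx)
    return w

# Synthetic, noise-free data (hypothetical): a feature in [0, 1] plus a bias column.
rng = np.random.default_rng(1)
X = np.column_stack([rng.uniform(0.0, 1.0, 50), np.ones(50)])
w_true = np.array([3.0, 0.5])
y = X @ w_true

w_hat = minibatch_sgd(X, y)
print(w_hat)
```

Only one mini-batch needs to be processed per update, which is the memory advantage the text refers to; on noisy real data the path would fluctuate around the batch solution rather than settle on it exactly.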

