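Reinforcement learning is a machine learning training method based on rewarding desired behaviors and/or punishing undesired ones. In general, a reinforcement learning agent can perceive and interpret its environment, take actions, and learn through trial and error.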

Off-Policy MC Control

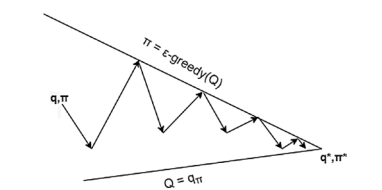

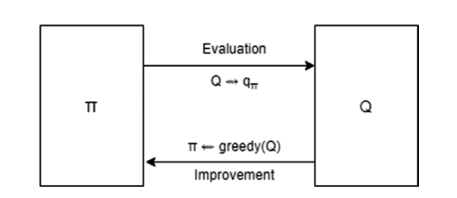

In GLIE, we saw that to explore enough, we needed to use $\varepsilon$-greedy policies so that all state-action pairs are visited often enough in the limit. The policy learned at the end of one iteration of the loop is used to generate the episodes for the next iteration. In other words, the policy used to explore is the same one that is being maximized. Such an approach is called on-policy learning: the samples are generated from the same policy that is being optimized.

There is another approach in which the samples are generated using a more exploratory policy with a higher $\varepsilon$, while the policy being optimized may have a lower $\varepsilon$ or may even be fully deterministic. Such an approach, in which the policy that generates the samples differs from the one being optimized, is called off-policy learning. The policy used to generate the samples is called the behavior policy, and the one being learned (maximized) is called the target policy. Let's look at Figure 4-7 for the pseudocode of the off-policy MC control algorithm.
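The split between a behavior policy and a target policy can be illustrated with a small sketch. This is not the algorithm from Figure 4-7; it only shows, under assumed values, how the two policies can share the same action-value estimates while using different values of $\varepsilon$. The helper `epsilon_greedy` and the numbers in `q_for_state` are hypothetical.

```python
import random

def epsilon_greedy(q_values, epsilon, rng):
    """Pick a random action with probability epsilon, else the greedy action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])

rng = random.Random(0)
q_for_state = [0.1, 0.5, 0.2, 0.0]  # hypothetical action values for one state

# Behavior policy: more exploratory (higher epsilon), used to generate samples.
behavior_actions = [epsilon_greedy(q_for_state, 0.3, rng) for _ in range(1000)]

# Target policy: fully greedy (epsilon = 0), the one being optimized.
target_actions = [epsilon_greedy(q_for_state, 0.0, rng) for _ in range(1000)]
```

With these assumed values, the target policy always selects action 1, while the behavior policy mostly selects action 1 but still tries the other actions from time to time.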

Temporal Difference Learning Methods

Refer to Figure 4-1 to study the backup diagrams of the DP and MC methods. In DP, we back up the values over only one step, using values from the successor states to estimate the current state value. We take an expectation over the action probabilities of the policy being followed and then, for each $(s, a)$ pair, over all possible rewards and successor states.

$$
v_{\pi}(s)=\sum_{a} \pi(a \mid s) \sum_{s^{\prime}, r} p\left(s^{\prime}, r \mid s, a\right)\left[r+\gamma v_{\pi}\left(s^{\prime}\right)\right]
$$
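As a rough illustration of the one-step DP backup, the sketch below evaluates the two nested sums for a single state. The dictionary layouts for `policy` and `model`, and the tiny two-state MDP, are assumptions made up for this example.

```python
def bellman_backup(state, policy, model, values, gamma):
    """One-step expected backup of v_pi(state).

    policy[state][action] holds pi(a | s).
    model[(state, action)] holds a list of (prob, next_state, reward) tuples.
    """
    total = 0.0
    for action, action_prob in policy[state].items():        # sum over actions
        for prob, next_state, reward in model[(state, action)]:  # sum over s', r
            total += action_prob * prob * (reward + gamma * values[next_state])
    return total

# Hypothetical two-state MDP, used only to exercise the function.
policy = {"A": {"left": 0.5, "right": 0.5}}
model = {
    ("A", "left"): [(1.0, "A", -1.0)],
    ("A", "right"): [(0.8, "B", 0.0), (0.2, "A", -1.0)],
}
values = {"A": 0.0, "B": 10.0}

v_a = bellman_backup("A", policy, model, values, gamma=0.9)
```

Note that the function needs the full transition model `p(s', r | s, a)`, which is exactly the requirement that sample-based methods remove.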

The value of a state $v_{\pi}(s)$ is estimated based on the current estimate of the successor state values $v_{\pi}(s^{\prime})$. This is known as bootstrapping: the estimate is based on another set of estimates. The two sums are represented as branch-off nodes in the DP backup diagram in Figure 4-1. In contrast to DP, MC starts from a state and samples the outcomes based on the current policy the agent is following. The value estimates are averages over multiple runs. In other words, the sum over model transition probabilities is replaced by averages, and hence the backup diagram for MC is a single long path from one state to the terminal state. The MC approach allowed us to build a scalable learning approach while removing the need to know the exact model dynamics. However, it created two issues: the MC approach works only for episodic environments, and the updates happen only at the end of an episode. DP had the advantage of using an estimate of the successor state to update the current state value without waiting for an episode to finish.
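The MC idea of replacing the expectation with an average over sampled runs can be sketched as follows. The reward sequences in `episodes` are invented for illustration and are assumed to come from complete episodes that all start in the same state.

```python
def discounted_return(rewards, gamma):
    """Total discounted return of one episode, computed backward from the end."""
    g = 0.0
    for reward in reversed(rewards):
        g = reward + gamma * g
    return g

# Hypothetical reward sequences from three complete episodes.
episodes = [[-1, -1, -1], [-1, -1], [-1, -1, -1, -1]]
gamma = 1.0

returns = [discounted_return(rewards, gamma) for rewards in episodes]
v_estimate = sum(returns) / len(returns)  # MC value estimate for the start state
```

Each return can be computed only once its episode has finished, which is why MC updates have to wait until the end of an episode.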

Temporal difference (TD) learning is an approach that combines the benefits of both DP and MC, using bootstrapping from DP and the sample-based approach from MC. The update equation for TD is as follows:

$$
V(s)=V(s)+\alpha\left[R+\gamma V\left(s^{\prime}\right)-V(s)\right]
$$

The current estimate of the total return for state $S=s$, i.e., $G_{t}$, is now obtained by bootstrapping from the current estimate of the successor state $s^{\prime}$ observed in the sample run. In other words, $G_{t}$ in equation (4.2) is replaced by $R+\gamma V(s^{\prime})$, which is itself an estimate. By contrast, in the MC method, $G_{t}$ was the discounted total return for the sample run.
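A minimal sketch of this update for a single observed transition is shown below. The function name `td0_update`, the `done` flag, and the convention that a terminal successor contributes a value of zero are assumptions added for the example.

```python
from collections import defaultdict

def td0_update(V, state, reward, next_state, done, alpha, gamma):
    """Apply V(s) = V(s) + alpha * [R + gamma * V(s') - V(s)] for one transition."""
    # Assumption: a terminal successor state has value zero.
    target = reward + (0.0 if done else gamma * V[next_state])
    V[state] += alpha * (target - V[state])
    return V[state]

V = defaultdict(float)  # value table; unseen states start at 0.0
V["s2"] = 1.0           # hypothetical current estimate for the successor state

new_value = td0_update(
    V, "s1", reward=-1.0, next_state="s2", done=False, alpha=0.5, gamma=0.9
)
```

Unlike the MC estimate, this update can be applied after every step, because the target uses the current estimate of the successor state instead of the full return.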

Temporal Difference Control

This section takes you into the realm of the real algorithms used in the RL world. In the remaining sections of the chapter, we will look at various methods used in TD learning. We will start with a simple one-step on-policy learning method called SARSA. This will be followed by a powerful off-policy technique called Q-learning. We will study some foundational aspects of Q-learning in this chapter, and in the next chapter we will integrate deep learning with Q-learning, giving us a powerful approach called Deep Q Networks (DQN). Using DQN, you will be able to train game-playing agents on an Atari simulator. In this chapter, we will also cover a variant of Q-learning called expected SARSA, another off-policy learning algorithm. We will then talk about the issue of maximization bias in Q-learning, which takes us to double Q-learning. All the variants of Q-learning become very powerful when combined with deep learning to represent the state space, which will form the bulk of the next chapter. Toward the end of this chapter, we will cover additional concepts such as experience replay, which make off-policy learning algorithms efficient with respect to the number of samples needed to learn an optimal policy. We will then talk about a powerful and somewhat involved approach called $\operatorname{TD}(\lambda)$ that tries to combine MC and TD methods on a continuum. Finally, we will look at an environment that has a continuous state space and how we can discretize (bin) the state values and apply the previously mentioned TD methods. The exercise will demonstrate the need for the approaches that we will take up in the next chapter, covering function approximation and deep learning for state representation. After Chapters 5 and 6 on deep learning and DQN, we will show another approach called policy optimization, which revolves around directly learning the policy without needing to find the optimal state/action values.

We have been using the $4 \times 4$ grid world so far. We will now look at a few more environments that will be used in the rest of the chapter. We will write the agents in an encapsulated way so that the same agent/algorithm could be applied in various environments without any changes.

The first environment we will use is a variant of the grid world: the cliff-walking environment, which is part of the Gym library. In this environment, we have a $4 \times 12$ grid world, with the bottom-left cell being the start state $S$ and the bottom-right cell being the goal state $G$. The rest of the bottom row forms a cliff; stepping on it earns a reward of $-100$, and the agent is put back in the start state. Every other step earns a reward of $-1$ until the agent reaches the goal state. As in the $4 \times 4$ grid world, the agent can take a step in any direction [UP, RIGHT, DOWN, LEFT]. The episode terminates when the agent reaches the goal state. Figure 4-10 depicts the setup.
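The rules above can be sketched as a small standalone transition function. This is not the Gym implementation; it is a hand-written approximation with two assumptions that the text does not spell out: a move that would leave the grid keeps the agent in place, and the step that reaches the goal also earns $-1$.

```python
ROWS, COLS = 4, 12
START, GOAL = (3, 0), (3, 11)  # bottom-left and bottom-right cells
MOVES = {"UP": (-1, 0), "RIGHT": (0, 1), "DOWN": (1, 0), "LEFT": (0, -1)}

def step(state, action):
    """Return (next_state, reward, done) for one move in the cliff grid."""
    row, col = state
    d_row, d_col = MOVES[action]
    # Assumption: moves that would leave the grid keep the agent in place.
    row = min(max(row + d_row, 0), ROWS - 1)
    col = min(max(col + d_col, 0), COLS - 1)
    # Cliff cells: bottom row, excluding the start and goal cells.
    if row == ROWS - 1 and 0 < col < COLS - 1:
        return START, -100, False
    # Assumption: the step that reaches the goal also earns -1.
    return (row, col), -1, (row, col) == GOAL

fell = step(START, "RIGHT")      # walks straight onto the cliff
reached = step((2, 11), "DOWN")  # steps down into the goal cell
```

Because the function only maps a state and an action to a next state, a reward, and a termination flag, an agent written against this interface does not need to know anything else about the grid.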
