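Statistical inference is the process of drawing conclusions about populations or scientific truths from data. There are many modes of performing inference, including statistical modeling, data-oriented strategies, and the explicit use of design and randomization in analyses.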

Signal Recovery Problem

One of the basic problems in Signal Processing is the problem of recovering a signal $x \in \mathbf{R}^{n}$ from noisy observations

$$
y=A x+\eta \tag{1.1}
$$

of a linear image of the signal under a given sensing mapping $x \mapsto A x: \mathbf{R}^{n} \rightarrow \mathbf{R}^{m}$; in (1.1), $\eta$ is the observation error. The matrix $A$ in (1.1) is called the sensing matrix.

Recovery problems of the type outlined arise in many applications, including, but by no means limited to,

- communications, where $x$ is the signal sent by the transmitter, $y$ is the signal recorded by the receiver, and $A$ represents the communication channel (reflecting, e.g., how the decay in the signal’s amplitude depends on the transmitter-receiver distance); here $\eta$ is typically modeled as standard (zero mean, unit covariance matrix) $m$-dimensional Gaussian noise;

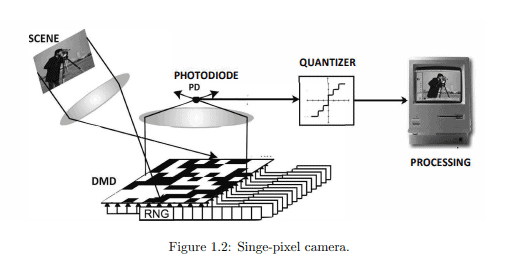

- image reconstruction, where the signal $x$ is an image (a 2D array in ordinary photography, or a 3D array in tomography) and $y$ is the data acquired by the imaging device. Here $\eta$ in many cases (although not always) can again be modeled as standard Gaussian noise;

- linear regression, which arises in a wide range of applications. In linear regression, one is given $m$ pairs, each consisting of an input $a^{i} \in \mathbf{R}^{n}$ to a “black box” and the corresponding output $y_{i} \in \mathbf{R}$. Sometimes we have reason to believe that the output is a noise-corrupted version of an “ideal output” $y_{i}^{*}=x^{T} a^{i}$, which “exists in nature” but is unobservable and is just a linear function of the input (this is called the “linear regression model,” with the inputs $a^{i}$ called “regressors”). Our goal is to convert the actual observations $\left(a^{i}, y_{i}\right)$, $1 \leq i \leq m$, into an estimate of the unknown “true” vector of parameters $x$. Denoting by $A$ the matrix with rows $\left[a^{i}\right]^{T}$ and assembling the individual observations $y_{i}$ into a single observation $y=\left[y_{1} ; \ldots ; y_{m}\right] \in \mathbf{R}^{m}$, we arrive at the problem of recovering the vector $x$ from noisy observations of $A x$. Here again the most popular model for $\eta$ is standard Gaussian noise.
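The observation model (1.1) in its linear regression form can be sketched in a few lines of NumPy. This is only an illustration: the function name `observe`, the noise scale `sigma` (the text uses unit covariance, i.e. `sigma=1`), and the particular dimensions and signal are assumptions made for the example, not part of the source.

```python
import numpy as np

def observe(A, x, sigma=1.0, rng=None):
    """Return y = A x + eta, where eta is N(0, sigma^2 I_m) Gaussian noise.

    sigma is a hypothetical knob; sigma=1 matches the "standard" noise of the text.
    """
    rng = np.random.default_rng() if rng is None else rng
    A = np.asarray(A, dtype=float)
    eta = sigma * rng.standard_normal(A.shape[0])  # one noise entry per observation
    return A @ x + eta

rng = np.random.default_rng(0)
m, n = 50, 3                                              # illustrative sizes
regressors = [rng.standard_normal(n) for _ in range(m)]   # inputs a^1, ..., a^m
A = np.vstack(regressors)                                 # rows of A are [a^i]^T
x_true = np.array([1.0, -2.0, 0.5])                       # made-up parameter vector
y = observe(A, x_true, rng=rng)                           # y_i = x^T a^i + eta_i
```

Stacking the regressors as rows is what turns $m$ scalar observations into the single vector observation $y \in \mathbf{R}^{m}$.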

Parametric and nonparametric cases

Recovering signal $x$ from observation $y$ would be easy if there were no observation noise $(\eta=0)$ and the rank of matrix $A$ were equal to the dimension $n$ of the signals. In this case, which arises only when $m \geq n$ (“more observations than unknown parameters”), and is typical in this range of $m$ and $n$, the desired $x$ would be the unique solution to the system of linear equations, and to find $x$ would be a simple problem of Linear Algebra. Aside from this trivial “enough observations, no noise” case, people over the years have looked at the following two versions of the recovery problem:

Parametric case: $m \gg n$, and $\eta$ is nontrivial noise with zero mean, say, standard Gaussian. This is the classical statistical setup, with the emphasis on how to use the numerous available observations to suppress, to the extent possible, the influence of observation noise on the recovery.
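To see how numerous observations suppress noise, here is a minimal sketch that uses ordinary least squares as the recovery routine. Least squares is one standard choice for this setup, not something the text prescribes; the helper names, the Gaussian sensing matrix, and the sizes are assumptions for illustration.

```python
import numpy as np

def least_squares_recovery(A, y):
    """Estimate x by minimizing ||A z - y||_2 over z."""
    x_hat, *_ = np.linalg.lstsq(A, y, rcond=None)
    return x_hat

def recovery_error(m, n, rng):
    """Draw a random instance of y = A x + eta and return ||x_hat - x||_2."""
    x_true = rng.standard_normal(n)
    A = rng.standard_normal((m, n))          # assumed: Gaussian sensing matrix
    y = A @ x_true + rng.standard_normal(m)  # standard Gaussian noise
    return np.linalg.norm(least_squares_recovery(A, y) - x_true)

rng = np.random.default_rng(0)
n = 5
err_small = recovery_error(20, n, rng)      # few observations
err_large = recovery_error(20000, n, rng)   # m >> n
print(f"m=20: {err_small:.3f}   m=20000: {err_large:.3f}")
```

For a matrix of this kind the error norm scales roughly like $\sqrt{n / m}$, so the second error should be far smaller than the first.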

Nonparametric case: $m \ll n$. Taken literally, this case seems senseless: when the number of observations is less than the number of unknown parameters, even in the noiseless case we must solve an underdetermined (fewer equations than unknowns) system of linear equations. Linear Algebra says that if such a system is solvable, it has infinitely many solutions. Moreover, the solution set (an affine subspace of positive dimension) is unbounded, meaning that the solutions are in no sense close to each other. A typical way to make the case of $m \ll n$ meaningful is to add to the observations (1.1) some a priori information about the signal. In traditional Nonparametric Statistics, this additional information is summarized in a bounded convex set $X \subset \mathbf{R}^{n}$, given to us in advance and known to contain the true signal $x$. This set is usually such that every signal $x \in X$ can be approximated, for each $s=1,2, \ldots, n$, by a linear combination of $s$ vectors from a properly selected basis known to us in advance (a “dictionary” in the slang of signal processing) within accuracy $\delta(s)$, where $\delta(s)$ is a function, known in advance, approaching 0 as $s \rightarrow \infty$. In this situation, with appropriate $A$ (e.g., just the unit matrix, as in the denoising problem), we can select some $s \leqslant m$ and try to recover $x$ as if it were a vector from the linear span $E_{s}$ of the first $s$ vectors of the outlined basis [54, 86, 124, 112, 208]. In the “ideal case,” $x \in E_{s}$, recovering $x$ in fact reduces to the case where the dimension of the signal is $s \ll m$ rather than $n \gg m$, and we arrive at the well-studied situation of recovering a signal of low (compared to the number of observations) dimension.

In the “realistic case” where $x$ is $\delta(s)$-close to $E_{s}$, the deviation of $x$ from $E_{s}$ results in an additional component in the recovery error (“bias”); a typical result of traditional Nonparametric Statistics quantifies the resulting error and minimizes it in $s$ [86, 124, 178, 222, 223, 230, 239]. Of course, this outline of the traditional approach to “nonparametric” (with $n \gg m$) recovery problems is extremely sketchy, but it captures the most important fact in our context: with the traditional approach to nonparametric signal recovery, one assumes that after representing the signals by vectors of their coefficients in a properly selected basis, the $n$-dimensional signal to be recovered can be well approximated by an $s$-sparse (at most $s$ nonzero entries) signal, with $s \ll n$, and this sparse approximation can be obtained by zeroing out all but the first $s$ entries in the signal vector. The assumption just formulated indeed holds for signals obtained by discretization of smooth uni- and multivariate functions, and for several decades this class of signals was the main, if not the only, focus of Nonparametric Statistics.
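The trade-off between bias and noise in choosing $s$ can be made concrete in the simplest denoising setting ($A$ is the unit matrix, so $y = x + \eta$). The sketch below assumes, purely for illustration, a signal with coefficients $x_{j} = 10.5 / j$ and noise level `sigma = 1`; the function names are hypothetical. Keeping the first $s$ noisy coefficients and zeroing the rest has expected squared error $s \sigma^{2} + \sum_{j>s} x_{j}^{2}$: the first term is the noise retained, the second is the squared bias.

```python
import numpy as np

def truncate(y, s):
    """Keep the first s entries of y and zero out all the others."""
    x_hat = np.zeros_like(y)
    x_hat[:s] = y[:s]
    return x_hat

def truncation_risk(x, sigma):
    """Expected squared error of truncate(x + noise, s) for s = 0, 1, ..., n."""
    energy = x ** 2
    # tail[s] = sum of x_j^2 over j > s (squared bias from the dropped entries)
    tail = energy.sum() - np.concatenate(([0.0], np.cumsum(energy)))
    s_values = np.arange(len(x) + 1)
    return s_values * sigma ** 2 + tail

n, sigma = 1000, 1.0
x = 10.5 / np.arange(1, n + 1)   # assumed decaying coefficients
risk = truncation_risk(x, sigma)
best_s = int(np.argmin(risk))    # cutoff that balances noise against bias
print(best_s, risk[best_s], risk[n])
```

Adding the $s$-th coefficient pays off exactly when $x_{s}^{2} > \sigma^{2}$, so with these made-up numbers the minimizer is the last index whose coefficient exceeds the noise level, far below $n$.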

Compressed Sensing via ℓ1 minimization: Motivation

In principle there is nothing surprising in the fact that under reasonable assumptions on the $m \times n$ sensing matrix $A$ we may hope to recover from noisy observations of $A x$ an $s$-sparse signal $x$, with $s \ll m$. Indeed, assume for the sake of simplicity that there are no observation errors, and let $\operatorname{Col}_{j}[A]$ be the $j$-th column of $A$. If we knew the locations $j_{1}<j_{2}<\ldots<j_{s}$ of the nonzero entries in $x$, identifying $x$ could be reduced to solving the system of linear equations $\sum_{\ell=1}^{s} x_{j_{\ell}} \operatorname{Col}_{j_{\ell}}[A]=y$ with $m$ equations and $s \ll m$ unknowns; assuming every $s$ columns in $A$ to be linearly independent (a quite unrestrictive assumption on a matrix with $m \geq s$ rows), the solution to the above system is unique, and is exactly the signal we are looking for. Of course, the assumption that we know the locations of the nonzeros in $x$ makes the recovery problem completely trivial. However, it suggests the following course of action: given a noiseless observation $y=A x$ of an $s$-sparse signal $x$, let us solve the combinatorial optimization problem

$$
\min_{z}\left\{|z|_{0}: A z=y\right\}, \tag{1.2}
$$

where $|z|_{0}$ is the number of nonzero entries in $z$. Clearly, the problem has a solution with the value of the objective at most $s$. Moreover, it is immediately seen that if every $2 s$ columns in $A$ are linearly independent (which again is a very unrestrictive assumption on the matrix $A$ provided that $m \geq 2 s$ ), then the true signal $x$ is the unique optimal solution to $(1.2)$.
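For tiny instances, problem (1.2) can be solved by brute force: try supports of increasing size and stop at the first one on which $A z = y$ is solvable. The sketch below is only meant to make the combinatorial nature of the problem tangible; the name `l0_min`, the tolerance `tol`, and the test instance are assumptions for illustration. The number of candidate supports grows combinatorially with $n$, which is why this is impractical beyond toy sizes.

```python
import itertools
import numpy as np

def l0_min(A, y, tol=1e-9):
    """Brute-force search for a sparsest z with A z = y (up to tol).

    Returns None if no candidate support reproduces y.
    """
    m, n = A.shape
    if np.linalg.norm(y) <= tol:
        return np.zeros(n)                     # z = 0 already fits
    for k in range(1, n + 1):                  # supports of growing size
        for support in itertools.combinations(range(n), k):
            cols = list(support)
            coef, *_ = np.linalg.lstsq(A[:, cols], y, rcond=None)
            if np.linalg.norm(A[:, cols] @ coef - y) <= tol:
                z = np.zeros(n)
                z[cols] = coef
                return z
    return None

rng = np.random.default_rng(1)
m, n = 6, 10                                   # fewer observations than unknowns
A = rng.standard_normal((m, n))                # assumed: Gaussian sensing matrix
x_true = np.zeros(n)
x_true[[2, 7]] = [1.5, -2.0]                   # a 2-sparse signal (s = 2, m >= 2s)
z = l0_min(A, A @ x_true)
print(np.flatnonzero(z))
```

For a randomly drawn Gaussian matrix, every $2 s = 4$ columns are linearly independent with probability one, so the search is expected to return the true signal even though $m < n$.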

What was said so far can be extended to the case of noisy observations and “nearly $s$-sparse” signals $x$. For example, assuming that the observation error is “uncertain-but-bounded,” specifically, that some known norm $|\cdot|$ of this error does not exceed a given $\epsilon>0$, and that the true signal is $s$-sparse, we could solve the combinatorial optimization problem

$$
\min_{z}\left\{|z|_{0}:|A z-y| \leq \epsilon\right\}. \tag{1.3}
$$

Assuming that every $m \times 2s$ submatrix $\bar{A}$ of $A$ not only has linearly independent columns (i.e., a trivial kernel), but is also reasonably well conditioned,

$$

|\bar{A} w| \geq C^{-1}|w|_{2}

$$

for all $(2s)$-dimensional vectors $w$, with some constant $C$, it is immediately seen that the true signal $x$ underlying the observation and the optimal solution $\widehat{x}$ of (1.3) are close to each other, within accuracy of order $\epsilon$: $|x-\widehat{x}|_{2} \leq 2 C \epsilon$. It is easily seen that the resulting error bound is basically as good as it could be.
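The “immediately seen” step can be spelled out as follows; this is a sketch under the assumptions stated above, and the notation $\bar{w}$ is introduced only for this argument. Since the observation error satisfies $|A x-y| \leq \epsilon$, the true signal $x$ is feasible for (1.3), so the optimal solution obeys $|\widehat{x}|_{0} \leq |x|_{0} \leq s$. Consequently $x-\widehat{x}$ has at most $2s$ nonzero entries, and $A(x-\widehat{x})=\bar{A} \bar{w}$ for some $m \times 2s$ submatrix $\bar{A}$ of $A$ whose columns cover these entries, where $\bar{w}$ collects the corresponding entries of $x-\widehat{x}$ and $|\bar{w}|_{2}=|x-\widehat{x}|_{2}$. The conditioning assumption and the triangle inequality then give

$$
C^{-1}|x-\widehat{x}|_{2} \leq|A(x-\widehat{x})| \leq|A x-y|+|A \widehat{x}-y| \leq 2 \epsilon,
$$

which is the claimed bound $|x-\widehat{x}|_{2} \leq 2 C \epsilon$.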
