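The generalized linear model (GLiM, or GLM) is an advanced statistical modeling technique introduced by John Nelder and Robert Wedderburn in 1972. It is an umbrella term that covers many other models, and it allows the response variable y to have an error distribution other than the normal distribution.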

Basic Probability

Statistics is based entirely on a branch of mathematics called probability, which is concerned with the likelihood of outcomes for an event. In probability theory, mathematicians use information about all possible outcomes for an event in order to determine the likelihood of any given outcome. The first section of this chapter will explain the basics of probability before advancing to the foundational issues of the rest of the textbook.

Imagine an event repeated 100 times. Each of these repetitions – called a trial – would be recorded. Mathematically, the formula for probability (abbreviated $p$ ) is:

$$
p=\frac{\text{Number of trials with the same outcome}}{\text{Total number of trials}}
$$

(Formula 6.1)

To calculate the probability of any given outcome, it is necessary to count the number of trials that resulted in that particular outcome and divide it by the total number of trials. Thus, if the 100 trials consisted of coin flips (which have two outcomes, heads and tails), and 50 trials resulted in “heads” and 50 trials resulted in “tails,” the probability of the coin landing heads side up would be $\frac{50}{100}$. To simplify this notation, it is standard to reduce the fraction to its simplest form or to convert it to a decimal: $1/2$ or $.50$ in this example.

This is called the empirical probability because it is based on empirical data collected from actual trials. Some readers will notice that this is the same method and formula for calculating the relative frequency in a frequency table (see Chapter 3). Therefore, empirical probabilities have the same mathematical properties as relative frequencies. That is, probability values always range from 0 to 1. A probability of zero indicates that the outcome never occurred, and a probability value of 1 indicates that the outcome occurred for every trial (and that no other outcome occurred). Also, the probabilities of all possible outcomes of a trial must add up to 1, just as all relative frequencies in a dataset must add up to 1.
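As a minimal sketch of Formula 6.1, the following Python snippet counts how often each outcome occurred and divides by the total number of trials. The function name `empirical_probabilities` and the list `trials` are illustrative choices, not something defined in the text.

```python
from collections import Counter

def empirical_probabilities(outcomes):
    """Return {outcome: count / total} for a list of recorded trial outcomes."""
    total = len(outcomes)
    if total == 0:
        raise ValueError("at least one trial is required")
    counts = Counter(outcomes)
    return {outcome: count / total for outcome, count in counts.items()}

# The 100 coin flips from the text: 50 heads and 50 tails.
trials = ["heads"] * 50 + ["tails"] * 50
probs = empirical_probabilities(trials)

print(probs["heads"])       # 0.5
print(sum(probs.values()))  # 1.0 (probabilities of all outcomes add up to 1)
```

Each value in `probs` lies between 0 and 1, and together they sum to 1, which mirrors the properties of relative frequencies described above.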

Interpreting probabilities requires only an understanding of percentages, fractions, and decimals. In the coin-flipping example, a probability of $.50$ indicates that we can expect that half of those trials would result in an outcome of “heads.” Similarly, the chance that any particular trial will result in an outcome of “heads” is $50\%$. Mathematically, it really doesn’t matter whether probability values are expressed as percentages (e.g., $50\%$), fractions (e.g., 1/2), or decimals (e.g., .50). Statisticians, though, prefer to express probabilities as decimals, and this textbook will stick to that convention.

The Logic of Inferential Statistics and the CLT

The beginning of this book, especially Chapter 4, discussed descriptive statistics, which is the branch of statistics concerned with describing data that have been collected. Descriptive statistics are indispensable for understanding data, but they are often of limited usefulness because most social scientists wish to make conclusions about the entire population of interest – not just the subjects in the sample that provided data to the researcher. For example, in a study of bipolar mood disorders, Kupka et al. (2007) collected data from 507 patients to examine how frequently they were in manic, hypomanic, and depressed mood states. The descriptive statistics that Kupka et al. provided are interesting – but they are of limited use if they only apply to the 507 people in the study. The authors of this study – and the vast majority of researchers – want to apply their conclusions to the entire population of people they are studying, even people who are not in the sample. The process of drawing conclusions about the entire population based on data from a sample is called generalization, and it requires inferential statistics in order to be possible. Inferential statistics is the branch of statistics that builds on the foundation of probability in order to make generalizations.

The logic of inferential statistics is diagrammed in Figure 6.6. It starts with a population, which, for a continuous, interval- or ratio-level variable, has an unknown mean and unknown standard deviation (represented as the circle in the top left of Figure 6.6). A researcher then draws a random sample from the population and uses the techniques discussed in previous chapters to calculate a sample mean and create a sample histogram. The problem with using a single sample to learn about the population is that there is no way of knowing whether that sample is typical (or representative) of the population from which it is drawn. It is possible (although not likely if the sampling method was truly random) that the sample consists of several outliers that distort the sample mean and standard deviation.

The solution to this conundrum is to take multiple random samples with the same $n$ from the population. Because of natural random variation in which population members are selected for a sample, we expect some slight variation in mean values from sample to sample. This natural variation across samples is called sampling error. With several samples that all have the same sample size, it becomes easier to judge whether any particular sample is typical or unusual because we can see which samples differ from the others (and therefore probably have more sampling error). However, this still does not tell the researcher anything about the population. Further steps are needed to make inferences about the population from sample data.
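The idea of sampling error can be illustrated with a small simulation. This is only a sketch: the population below is hypothetical (10,000 simulated scores with an assumed mean of 50 and standard deviation of 10), and in a real study the population mean would be unknown.

```python
import random
import statistics

rng = random.Random(1)  # fixed seed so the run is repeatable

# Hypothetical population of 10,000 scores (assumed mean 50, SD 10).
population = [rng.gauss(50, 10) for _ in range(10_000)]
population_mean = statistics.fmean(population)

n = 30           # every sample uses the same sample size
num_samples = 5

sample_means = []
for _ in range(num_samples):
    sample = rng.sample(population, n)  # simple random sample, no replacement
    sample_means.append(statistics.fmean(sample))

for i, m in enumerate(sample_means, start=1):
    # Only a simulation knows the population mean, so the gap can be shown here.
    print(f"sample {i}: mean = {m:.2f}, "
          f"difference from population mean = {m - population_mean:+.2f}")
```

The sample means differ slightly from one another even though every sample comes from the same population; that variation is the sampling error.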

After finding a mean for each sample, it is necessary to create a separate histogram (in the middle of Figure 6.6) that consists solely of sample means. The histogram of means from a series of samples is called a sampling distribution of means. Sampling distributions can be created from other sample statistics (e.g., standard deviations, medians, ranges), but for the purposes of this chapter it is only necessary to talk about a sampling distribution of means.

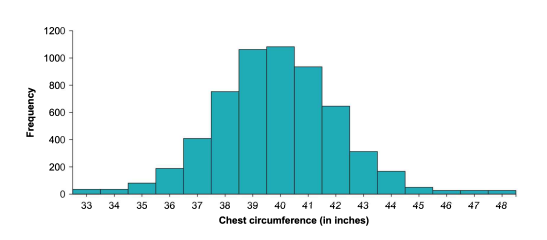

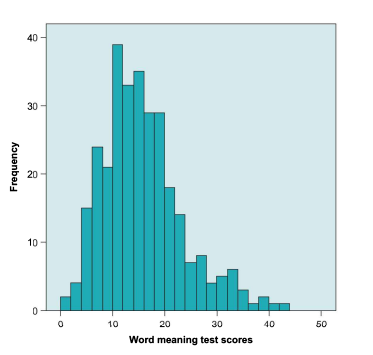

Because a sampling distribution of means is produced by a purely random process, its properties are governed by the same principles of probability that govern other random outcomes, such as dice throws and coin tosses. Therefore, there are some regular predictions that can be made about the properties of the sampling distribution as the number of contributing samples increases. First, with a large sample size within each sample, the shape of the sampling distribution of means is approximately a normal distribution. Given the convergence to a normal distribution, as shown in Figures 6.4a-6.4d, this should not be surprising. What is surprising to some people is that this convergence towards a normal distribution occurs regardless of the shape of the population, as long as the $n$ for each sample is at least 25 (a rule of thumb) and all samples have the same sample size. This is the main principle of the central limit theorem (CLT).
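A rough way to see this principle is to simulate it. The sketch below assumes a strongly right-skewed population (an exponential distribution, chosen purely for illustration) and compares the skewness of raw scores with the skewness of means from samples of $n = 25$. The helper `skewness` is a hypothetical name defined here, not a library function.

```python
import random
import statistics

def skewness(values):
    """Mean of cubed z-scores; close to 0 for a symmetric distribution."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return statistics.fmean([((v - mean) / sd) ** 3 for v in values])

rng = random.Random(0)  # fixed seed so the run is repeatable
n = 25                  # size of each sample
num_samples = 5_000     # number of samples in the sampling distribution

# Individual scores from the skewed population (assumed exponential, rate 1).
raw_scores = [rng.expovariate(1.0) for _ in range(num_samples)]

# One mean per sample; together these form the sampling distribution of means.
sample_means = [
    statistics.fmean([rng.expovariate(1.0) for _ in range(n)])
    for _ in range(num_samples)
]

skew_raw = skewness(raw_scores)
skew_means = skewness(sample_means)
print(f"skewness of raw scores:   {skew_raw:.2f}")
print(f"skewness of sample means: {skew_means:.2f}")
```

The sample means should be far less skewed than the raw scores, although with $n = 25$ and a population this skewed the sampling distribution is only approximately normal.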

Summary

The basics of probability form the foundation of inferential statistics. Probability is the branch of mathematics concerned with estimating the likelihood of outcomes of trials. There are two types of probabilities that can be estimated. The first is the empirical probability, which is calculated by conducting a large number of trials and finding the proportion of trials that resulted in each outcome, using Formula 6.1. The second type of probability is the theoretical probability, which is calculated by dividing the number of ways of obtaining an outcome by the total number of possible outcomes. Adding together the probabilities of two mutually exclusive events will produce the probability that either one will occur. Multiplying the probabilities of two independent events together will produce the joint probability, which is the likelihood that the two events will occur at the same time or in succession.
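The theoretical probability and the two combination rules can be checked with a short worked example. This sketch assumes a fair six-sided die, treats "rolling a 1" and "rolling a 2" on a single roll as mutually exclusive events for the addition rule, and treats two separate rolls as independent events for the multiplication rule. The function name `theoretical_probability` is illustrative.

```python
from fractions import Fraction
from itertools import product

faces = list(range(1, 7))  # possible outcomes of one roll of a fair die

def theoretical_probability(favorable, possible):
    """Number of ways to obtain the outcome / total number of possible outcomes."""
    return Fraction(len(favorable), len(possible))

p_one = theoretical_probability([1], faces)
p_two = theoretical_probability([2], faces)

# Addition rule (mutually exclusive events): a 1 OR a 2 on a single roll.
p_either = p_one + p_two
# Multiplication rule (independent events): a 1 on the first roll AND a 2 on the second.
p_joint = p_one * p_two

# Cross-check both rules by listing the outcomes directly.
p_either_listed = theoretical_probability([f for f in faces if f in (1, 2)], faces)
two_rolls = list(product(faces, repeat=2))  # all 36 ordered pairs
p_joint_listed = theoretical_probability([r for r in two_rolls if r == (1, 2)], two_rolls)

print(p_either, p_either_listed)  # 1/3 1/3
print(p_joint, p_joint_listed)    # 1/36 1/36
```

`Fraction` keeps the results exact, so the rule-based answers can be compared with the enumerated ones without rounding.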

With a small or moderate number of trials, there may be discrepancies between the empirical and theoretical probabilities. However, as the number of trials increases, the empirical probability converges to the value of the theoretical probability. Additionally, it is possible to build a histogram of outcomes of trials from multiple events; dividing the number of trials that resulted in each outcome by the total number of trials produces an empirical probability distribution. As the number of trials increases, this empirical probability distribution gradually converges to the theoretical probability distribution, which is a histogram of the theoretical probabilities.
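The convergence of the empirical probability toward the theoretical probability can be sketched with simulated coin flips. The example assumes a fair coin (theoretical probability of heads = .50); the function name and the trial counts are arbitrary choices for illustration.

```python
import random

THEORETICAL_P_HEADS = 0.5  # assumed fair coin

def empirical_heads_probability(num_trials, rng):
    """Flip a simulated fair coin num_trials times; return the proportion of heads."""
    heads = sum(rng.random() < THEORETICAL_P_HEADS for _ in range(num_trials))
    return heads / num_trials

rng = random.Random(42)  # fixed seed so the run is repeatable
results = {}
for num_trials in (10, 100, 1_000, 10_000, 100_000):
    p = empirical_heads_probability(num_trials, rng)
    results[num_trials] = p
    print(f"{num_trials:>7} trials: empirical p = {p:.4f}, "
          f"gap from theoretical = {abs(p - THEORETICAL_P_HEADS):.4f}")
```

The gap does not have to shrink at every step, because each run is random, but it tends to become small once the number of trials is large.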

If an outcome is produced by adding together the results of multiple independent events, the theoretical probability distribution approaches a normal distribution as the number of events added together grows. Additionally, with a large number of trials, the empirical probability distribution will also be approximately normally distributed. This is a result of the CLT.

The CLT states that a sampling distribution of means will be approximately normally distributed if the size of each sample is at least 25 (the rule of thumb used in this chapter). As a result of the CLT, it is possible to make inferences about the population based on sample data, a process called generalization. Additionally, the mean of the sample means converges to the population mean as the number of samples in a sampling distribution increases. Likewise, the standard deviation of means in the sampling distribution (called the standard error) converges on the value of $\frac{\sigma}{\sqrt{n}}$, where $\sigma$ is the population standard deviation and $n$ is the size of each sample.
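The last two claims can be checked numerically. The sketch below assumes a normally distributed population with mean 100 and standard deviation 15 (values chosen only for illustration) and compares the standard deviation of many sample means with $\frac{\sigma}{\sqrt{n}}$.

```python
import math
import random
import statistics

rng = random.Random(7)   # fixed seed so the run is repeatable
mu, sigma = 100.0, 15.0  # assumed population mean and standard deviation
n = 25                   # size of each sample
num_samples = 4_000      # number of samples in the sampling distribution

sample_means = [
    statistics.fmean([rng.gauss(mu, sigma) for _ in range(n)])
    for _ in range(num_samples)
]

mean_of_means = statistics.fmean(sample_means)
observed_se = statistics.stdev(sample_means)  # SD of the sample means
expected_se = sigma / math.sqrt(n)            # sigma / sqrt(n) = 15 / 5 = 3.0

print(f"mean of sample means: {mean_of_means:.2f} (population mean {mu})")
print(f"observed standard error: {observed_se:.2f}, expected: {expected_se:.2f}")
```

With more samples in the sampling distribution, the mean of the sample means and the observed standard error should move closer to the population mean and to $\frac{\sigma}{\sqrt{n}}$, respectively.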
