Statistics Writing Service | Generalized Linear Model Writing and Exam Help | STAT3022


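The generalized linear model (GLiM, or GLM) is an advanced statistical modeling technique formulated by John Nelder and Robert Wedderburn in 1972. It is an umbrella term that covers many other models, and it allows the response variable y to have an error distribution other than the normal distribution.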


Correspondence Analysis

The analysis of the hair-eye color data in the previous section revealed that hair and eye color are dependent. But it does not tell us how they are dependent. To study this, we can use a kind of residual analysis for contingency tables called correspondence analysis.

Compute the Pearson residuals $r_{P}=(y-\hat{\mu})/\sqrt{\hat{\mu}}$ from the independence model and arrange them in a matrix $R_{ij}$, where $i=1, \ldots, r$ and $j=1, \ldots, c$ index the rows and columns of the table. Perform the singular value decomposition:

$$
R_{r \times c}=U_{r \times w} D_{w \times w} V_{c \times w}^{T}
$$

where $r$ is the number of rows, $c$ is the number of columns and $w=\min(r, c)$. $U$ and $V$ are called the left and right singular vectors, respectively. $D$ is a diagonal matrix whose elements $d_{i}$, called singular values, are sorted in decreasing order. Another way of writing this is:

$$
R_{i j}=\sum_{k=1}^{w} U_{i k} d_{k} V_{j k}
$$

As with eigendecompositions, it is not uncommon for the first few singular values to be much larger than the rest. Suppose that the first two dominate so that:

$$
R_{i j} \approx U_{i 1} d_{1} V_{j 1}+U_{i 2} d_{2} V_{j 2}
$$

We usually absorb the $d$s into $U$ and $V$ for plotting purposes, so that we can assess the relative contribution of the components. Thus:

$$
\begin{aligned}
R_{i j} & \approx\left(U_{i 1} \sqrt{d_{1}}\right) \times\left(V_{j 1} \sqrt{d_{1}}\right)+\left(U_{i 2} \sqrt{d_{2}}\right) \times\left(V_{j 2} \sqrt{d_{2}}\right) \\
& \equiv U_{i 1} V_{j 1}+U_{i 2} V_{j 2}
\end{aligned}
$$

where in the latter expression we have redefined the $U$s and $V$s to include the $\sqrt{d}$ factors.
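The steps above can be sketched in R as follows. This is a minimal illustration, not the author's code: it assumes the classic Snee hair/eye counts that ship with base R as `HairEyeColor` (summed over `Sex`) as a stand-in for the hair-eye table of the previous section, and it takes the Pearson residuals from `chisq.test()`. Because the squared singular values add up to the Pearson $X^2$ statistic, $d_k^2/\sum d^2$ shows how much of the departure from independence each component carries.

```r
# ASSUMPTION: base R's HairEyeColor (Snee's counts), summed over Sex, stands in
# for the hair-eye table of the previous section.
ct <- margin.table(HairEyeColor, c(1, 2))   # 4 x 4: Hair (rows) x Eye (columns)

# Pearson residuals (y - mu) / sqrt(mu) under independence, kept in the r x c layout.
R <- unclass(chisq.test(ct)$residuals)

# Singular value decomposition R = U D V^T; svd() returns d in decreasing order.
s <- svd(R)
d <- s$d

# The squared singular values sum to the Pearson X^2 statistic, so this is the
# share of the departure from independence carried by each component.
print(round(d^2 / sum(d^2), 3))

# Absorb sqrt(d_k) into both sets of singular vectors, as in the text.
U <- s$u %*% diag(sqrt(d))
V <- s$v %*% diag(sqrt(d))
rownames(U) <- rownames(R)
rownames(V) <- colnames(R)

# Two-component approximation R_ij ~ U_i1 V_j1 + U_i2 V_j2, and what it leaves out.
R2 <- U[, 1:2] %*% t(V[, 1:2])
print(round(R - R2, 2))

# Correspondence plot: hair colours and eye colours on the same two axes
# (drawn only in an interactive session).
if (interactive()) {
  plot(rbind(U[, 1:2], V[, 1:2]), type = "n",
       xlab = "Dimension 1", ylab = "Dimension 2")
  text(U[, 1:2], labels = rownames(U))
  text(V[, 1:2], labels = rownames(V), col = "blue")
  abline(h = 0, v = 0, lty = 2)
}
```

Row and column points that lie far from the origin in the same direction indicate combinations that occur more often than independence predicts.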

Matched Pairs

In the typical two-way contingency tables, we display accumulated information about two categorical measures on the same object. In matched pairs, we observe one measure on two matched objects.

In Stuart (1955), data on the vision of a sample of women is presented. The performance of the left and right eyes is graded into four categories:

data(eyegrade)

(ct <- xtabs(y ~ right+left, eyegrade))

\begin{tabular}{lrrrr}
 & \multicolumn{4}{c}{left} \\
right & best & second & third & worst \\
best & 1520 & 266 & 124 & 66 \\
second & 234 & 1512 & 432 & 78 \\
third & 117 & 362 & 1772 & 205 \\
worst & 36 & 82 & 179 & 492
\end{tabular}

If we check for independence:

summary(ct)

Call: xtabs(formula = y ~ right + left, data = eyegrade)

Number of cases in table: 7477

Number of factors: 2

Test for independence of all factors:

Chisq = 8097, df = 9, p-value = 0

We are not surprised to find strong evidence of dependence: most people's eyes are similar. A more interesting hypothesis for such matched pair data is symmetry: is $p_{ij}=p_{ji}$ for all $i$ and $j$? We can fit such a model by defining a factor whose levels represent the symmetric pairs of off-diagonal cells, so that cells $(i,j)$ and $(j,i)$ share a level. Each diagonal cell forms a level of its own, with only one observation:

(symfac <- factor(apply(eyegrade[,2:3], 1, function(x) paste(sort(x), collapse="-"))))

[1] best-best best-second best-third best-worst

[5] best-second second-second second-third second-worst

[9] best-third second-third third-third third-worst

10 Levels: best-best best-second best-third … worst-worst

We now fit this model:

mods <- glm(y ~ symfac, eyegrade, family=poisson)

c(deviance(mods), df.residual(mods))

[1] 19.249  6.000

pchisq(deviance(mods), df.residual(mods), lower.tail=FALSE)

[1] 0.0037629

Here, we see evidence of a lack of symmetry. It is worth checking the residuals:

round(xtabs(residuals(mods) ~ right+left, eyegrade), 3)

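The rows of the table are the right eye and the columns the left eye, so cells above the diagonal are those where the right eye is graded better than the left. We see that the residuals above the diagonal are mostly positive, while they are mostly negative below the diagonal. So there are generally more poor-left, good-right eye combinations than the reverse. Furthermore, we can compute the marginals: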

margin.table(ct, 1)

right

best second third worst

1976 2256 2456 789
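For readers without the faraway package, here is a self-contained sketch of the symmetry analysis above. It is an illustration rather than the author's code: the counts are typed in from the eyegrade table (rows are the right eye, columns the left), the symmetric factor is built from the factor codes with `pmin()`/`pmax()` instead of sorting strings, and the object names are hypothetical. It should reproduce the deviance of about 19.25 on 6 degrees of freedom reported above; under the symmetry model the fitted count of each off-diagonal cell is $(y_{ij}+y_{ji})/2$.

```r
# ASSUMPTION: counts typed in from the eyegrade table above (rows = right eye,
# columns = left eye), so no add-on package is needed.
grades <- c("best", "second", "third", "worst")
counts <- matrix(c(1520,  266,  124,  66,
                    234, 1512,  432,  78,
                    117,  362, 1772, 205,
                     36,   82,  179, 492),
                 nrow = 4, byrow = TRUE,
                 dimnames = list(right = grades, left = grades))
# One row per cell: factors right and left, count y.
eye <- as.data.frame(as.table(counts), responseName = "y")

# Symmetry factor: cells (i, j) and (j, i) share a level; diagonal cells get their own.
r_code <- as.integer(eye$right)
l_code <- as.integer(eye$left)
eye$symfac <- factor(paste(grades[pmin(r_code, l_code)],
                           grades[pmax(r_code, l_code)], sep = "-"))

# Poisson log-linear model of symmetry and its goodness-of-fit test.
mods <- glm(y ~ symfac, family = poisson, data = eye)
dev  <- deviance(mods)
rdf  <- df.residual(mods)
pval <- pchisq(dev, rdf, lower.tail = FALSE)
print(c(deviance = dev, df = rdf, p.value = pval))

# Fitted counts (each off-diagonal pair is averaged) and deviance residuals.
fit <- xtabs(fitted(mods) ~ right + left, data = eye)
res <- round(xtabs(residuals(mods) ~ right + left, data = eye), 3)
print(res)

# Marginal totals: right eye (row sums) versus left eye (column sums).
print(rbind(right = rowSums(counts), left = colSums(counts)))
```

Comparing the two rows of marginal totals shows directly where the right-eye and left-eye distributions differ.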

Ordinal Variables

Some variables have a natural order. We can use the methods for nominal variables described earlier in this chapter, but more information can be extracted by taking advantage of the structure of the data. Sometimes we might identify a particular ordinal variable as the response. In such cases, the methods of Section 7.4 can be used. However, sometimes we are interested in modeling the association between ordinal variables. Here the use of scores can be helpful.

Consider a two-way table where both variables are ordinal. We may assign scores $u_{i}$ and $v_{j}$ to the rows and columns such that $u_{1} \leq u_{2} \leq \cdots \leq u_{I}$ and $v_{1} \leq v_{2} \leq \cdots \leq v_{J}$. The assignment of scores requires some judgment. If you have no particular preference, even spacing allows for the simplest interpretation. If the categories are intervals on a numerical scale, for example, 0-10 years old, 10-20 years old, 20-40 years old and so on, the midpoints are often used. It is a good idea to check that the inference is robust to the assignment of scores by trying some reasonable alternative choices. If your qualitative conclusions change, this indicates that you cannot make any strong finding.
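A small sketch of the two scoring schemes just described, using hypothetical helper names: evenly spaced scores are simply the codes $1,\dots,k$, and midpoint scores come from the class boundaries. The boundaries below are the text's 0-10, 10-20, 20-40 example; an open-ended last class would need a judgment call that the helper does not make.

```r
# Hypothetical helpers for the two scoring schemes discussed above.
even_scores <- function(k) seq_len(k)

midpoint_scores <- function(breaks) {
  stopifnot(!is.unsorted(breaks))                # scores must be non-decreasing
  (head(breaks, -1) + tail(breaks, -1)) / 2      # average of adjacent boundaries
}

age_breaks <- c(0, 10, 20, 40)   # classes 0-10, 10-20, 20-40
print(even_scores(3))
print(midpoint_scores(age_breaks))
```

Fitting the same model with both sets of scores is one simple way to carry out the robustness check recommended above.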

Now fit the linear-by-linear association model:

$$
\log E Y_{i j}=\log \mu_{i j}=\log n p_{i j}=\log n+\alpha_{i}+\beta_{j}+\gamma u_{i} v_{j}
$$

So $\gamma=0$ means independence, while a nonzero $\gamma$ measures the amount of association, which can be positive or negative. $\gamma$ is rather like an (unscaled) correlation coefficient. Consider underlying (latent) continuous variables which are discretized by the cutpoints $u_{i}$ and $v_{j}$. We can then identify $\gamma$ with the correlation coefficient of the latent variables.
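One way to see why $\gamma$ measures association: take the local log odds ratio of four adjacent cells under this model. The terms $\log n$, $\alpha_i$ and $\beta_j$ cancel, leaving only the interaction term:

$$
\begin{aligned}
\log\frac{\mu_{ij}\,\mu_{i+1,j+1}}{\mu_{i,j+1}\,\mu_{i+1,j}}
&= \gamma\left(u_{i} v_{j}+u_{i+1} v_{j+1}-u_{i} v_{j+1}-u_{i+1} v_{j}\right) \\
&= \gamma\,(u_{i+1}-u_{i})(v_{j+1}-v_{j})
\end{aligned}
$$

With evenly spaced unit scores, every local log odds ratio equals $\gamma$, so a positive $\gamma$ means that higher rows tend to go with higher columns.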

Consider an example drawn from a subset of the 1996 American National Election Study (Rosenstone et al. (1997)). Using just the data on party affiliation and level of education, we can construct a two-way table:

data(nes96)

xtabs(~ PID + educ, nes96)

\begin{tabular}{lrrrrrrr}
 & \multicolumn{7}{c}{educ} \\
PID & MS & HSdrop & HS & Coll & CCdeg & BAdeg & MAdeg \\
strDem & 5 & 19 & 59 & 38 & 17 & 40 & 22 \\
weakDem & 4 & 10 & 49 & 36 & 17 & 41 & 23 \\
indDem & 1 & 4 & 28 & 15 & 13 & 27 & 20 \\
indind & 0 & 3 & 12 & 9 & 3 & 6 & 4 \\
indRep & 2 & 7 & 23 & 16 & 8 & 22 & 16 \\
weakRep & 0 & 5 & 35 & 40 & 15 & 38 & 17 \\
strRep & 1 & 4 & 42 & 33 & 17 & 53 & 25
\end{tabular}

Both variables are ordinal in this example. We need to convert this to a dataframe with one count per line to enable model fitting.
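The conversion and the linear-by-linear fit can be sketched in base R as follows. This is an illustration under stated assumptions rather than the author's code: the counts are typed in from the table above (they total 944), the scores are the evenly spaced factor codes $1,\dots,7$, and the object names and the alternative education scores are hypothetical. The coefficient of `I(u * v)` estimates $\gamma$, and the deviance difference between the two fits tests $\gamma=0$.

```r
# ASSUMPTION: counts typed in from the PID x educ table above, so the faraway
# package is not needed.
pid_lv  <- c("strDem", "weakDem", "indDem", "indind", "indRep", "weakRep", "strRep")
educ_lv <- c("MS", "HSdrop", "HS", "Coll", "CCdeg", "BAdeg", "MAdeg")
counts <- matrix(c(5, 19, 59, 38, 17, 40, 22,
                   4, 10, 49, 36, 17, 41, 23,
                   1,  4, 28, 15, 13, 27, 20,
                   0,  3, 12,  9,  3,  6,  4,
                   2,  7, 23, 16,  8, 22, 16,
                   0,  5, 35, 40, 15, 38, 17,
                   1,  4, 42, 33, 17, 53, 25),
                 nrow = 7, byrow = TRUE,
                 dimnames = list(PID = pid_lv, educ = educ_lv))

# One row per cell with its count in column y (factor levels keep the table order).
partyed <- as.data.frame(as.table(counts), responseName = "y")

# Evenly spaced scores u_i = 1..7 and v_j = 1..7 taken from the factor codes.
partyed$u <- as.integer(partyed$PID)
partyed$v <- as.integer(partyed$educ)

# Independence (gamma = 0) versus the linear-by-linear association model.
nomod  <- glm(y ~ PID + educ, family = poisson, data = partyed)
ordmod <- glm(y ~ PID + educ + I(u * v), family = poisson, data = partyed)
print(coef(ordmod)["I(u * v)"])               # estimate of gamma
print(anova(nomod, ordmod, test = "Chisq"))   # likelihood-ratio test of gamma = 0

# Robustness check with hypothetical, unevenly spaced education scores.
alt_v <- c(1, 2, 3, 4, 4.5, 5, 6)
partyed$v2 <- alt_v[partyed$v]
ordmod2 <- glm(y ~ PID + educ + I(u * v2), family = poisson, data = partyed)
print(coef(ordmod2)["I(u * v2)"])
```

If the sign or significance of the estimated $\gamma$ changed between the two score choices, that would be the warning sign the text describes.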


统计代写请认准statistics-lab™. statistics-lab™为您的留学生涯保驾护航。

金融工程代写

金融工程是使用数学技术来解决金融问题。金融工程使用计算机科学、统计学、经济学和应用数学领域的工具和知识来解决当前的金融问题,以及设计新的和创新的金融产品。

非参数统计代写

非参数统计指的是一种统计方法,其中不假设数据来自于由少数参数决定的规定模型;这种模型的例子包括正态分布模型和线性回归模型。

广义线性模型代考

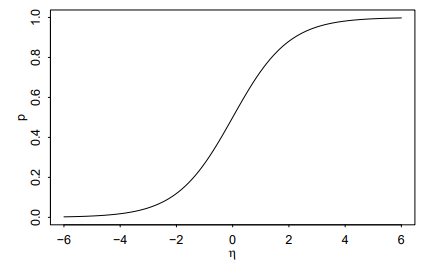

广义线性模型(GLM)归属统计学领域,是一种应用灵活的线性回归模型。该模型允许因变量的偏差分布有除了正态分布之外的其它分布。

术语 广义线性模型(GLM)通常是指给定连续和/或分类预测因素的连续响应变量的常规线性回归模型。它包括多元线性回归,以及方差分析和方差分析(仅含固定效应)。

有限元方法代写

有限元方法(FEM)是一种流行的方法,用于数值解决工程和数学建模中出现的微分方程。典型的问题领域包括结构分析、传热、流体流动、质量运输和电磁势等传统领域。

有限元是一种通用的数值方法,用于解决两个或三个空间变量的偏微分方程(即一些边界值问题)。为了解决一个问题,有限元将一个大系统细分为更小、更简单的部分,称为有限元。这是通过在空间维度上的特定空间离散化来实现的,它是通过构建对象的网格来实现的:用于求解的数值域,它有有限数量的点。边界值问题的有限元方法表述最终导致一个代数方程组。该方法在域上对未知函数进行逼近。[1] 然后将模拟这些有限元的简单方程组合成一个更大的方程系统,以模拟整个问题。然后,有限元通过变化微积分使相关的误差函数最小化来逼近一个解决方案。

tatistics-lab作为专业的留学生服务机构,多年来已为美国、英国、加拿大、澳洲等留学热门地的学生提供专业的学术服务,包括但不限于Essay代写,Assignment代写,Dissertation代写,Report代写,小组作业代写,Proposal代写,Paper代写,Presentation代写,计算机作业代写,论文修改和润色,网课代做,exam代考等等。写作范围涵盖高中,本科,研究生等海外留学全阶段,辐射金融,经济学,会计学,审计学,管理学等全球99%专业科目。写作团队既有专业英语母语作者,也有海外名校硕博留学生,每位写作老师都拥有过硬的语言能力,专业的学科背景和学术写作经验。我们承诺100%原创,100%专业,100%准时,100%满意。

随机分析代写

随机微积分是数学的一个分支,对随机过程进行操作。它允许为随机过程的积分定义一个关于随机过程的一致的积分理论。这个领域是由日本数学家伊藤清在第二次世界大战期间创建并开始的。

时间序列分析代写

随机过程,是依赖于参数的一组随机变量的全体,参数通常是时间。 随机变量是随机现象的数量表现,其时间序列是一组按照时间发生先后顺序进行排列的数据点序列。通常一组时间序列的时间间隔为一恒定值(如1秒,5分钟,12小时,7天,1年),因此时间序列可以作为离散时间数据进行分析处理。研究时间序列数据的意义在于现实中,往往需要研究某个事物其随时间发展变化的规律。这就需要通过研究该事物过去发展的历史记录,以得到其自身发展的规律。

回归分析代写

多元回归分析渐进(Multiple Regression Analysis Asymptotics)属于计量经济学领域,主要是一种数学上的统计分析方法,可以分析复杂情况下各影响因素的数学关系,在自然科学、社会和经济学等多个领域内应用广泛。

MATLAB代写

MATLAB 是一种用于技术计算的高性能语言。它将计算、可视化和编程集成在一个易于使用的环境中,其中问题和解决方案以熟悉的数学符号表示。典型用途包括:数学和计算算法开发建模、仿真和原型制作数据分析、探索和可视化科学和工程图形应用程序开发,包括图形用户界面构建MATLAB 是一个交互式系统,其基本数据元素是一个不需要维度的数组。这使您可以解决许多技术计算问题,尤其是那些具有矩阵和向量公式的问题,而只需用 C 或 Fortran 等标量非交互式语言编写程序所需的时间的一小部分。MATLAB 名称代表矩阵实验室。MATLAB 最初的编写目的是提供对由 LINPACK 和 EISPACK 项目开发的矩阵软件的轻松访问,这两个项目共同代表了矩阵计算软件的最新技术。MATLAB 经过多年的发展,得到了许多用户的投入。在大学环境中,它是数学、工程和科学入门和高级课程的标准教学工具。在工业领域,MATLAB 是高效研究、开发和分析的首选工具。MATLAB 具有一系列称为工具箱的特定于应用程序的解决方案。对于大多数 MATLAB 用户来说非常重要,工具箱允许您学习和应用专业技术。工具箱是 MATLAB 函数(M 文件)的综合集合,可扩展 MATLAB 环境以解决特定类别的问题。可用工具箱的领域包括信号处理、控制系统、神经网络、模糊逻辑、小波、仿真等。

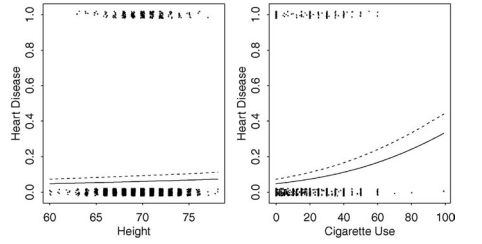

疾病”, peh=”.”)

疾病”, peh=”.”)