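Financial econometrics is the application of statistical methods to financial market data. It is a branch of financial economics, within the field of economics. Areas of study include capital markets, financial institutions, corporate finance and corporate governance.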

Financial Econometrics | Basic Setup and Notation

We begin with the simple linear regression model in the cross-section setting with $p$ independent variables.

A Theory-Based Lasso for Time-Series Data


$$
y_{i}=\beta_{0}+\beta_{1} x_{i 1}+\beta_{2} x_{i 2}+\cdots+\beta_{p} x_{i p}+\varepsilon_{i}
$$

In traditional least squares regression, estimated parameters are chosen to minimise the residual sum of squares (RSS):

$$
\mathrm{RSS}=\sum_{i=1}^{n}\left(y_{i}-\beta_{0}-\sum_{j=1}^{p} \beta_{j} x_{i j}\right)^{2}
$$

The problem arises when $p$ is relatively large. If the model is too complex or flexible, the parameters estimated using the training dataset do not perform well with future datasets. This is where regularisation is key. By adding a shrinkage quantity to RSS, regularisation shrinks parameter estimates towards zero. A very popular regularised regression method is the lasso, introduced by Frank and Friedman (1993) and Tibshirani (1996). Instead of minimising the RSS, the lasso minimises

$$
\mathrm{RSS}+\lambda \sum_{j=1}^{p}\left|\beta_{j}\right|
$$

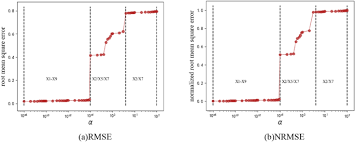

where $\lambda$ is the tuning parameter that determines how much model complexity is penalised. At one extreme, if $\lambda=0$, the penalty term disappears, and lasso estimates are the same as OLS. At the other extreme, as $\lambda \rightarrow \infty$, the penalty term grows and estimated coefficients approach zero.
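The two extremes of $\lambda$ can be checked numerically. The following is a minimal sketch, not the estimator used in the paper: it minimises $\mathrm{RSS}+\lambda \sum_{j}|\beta_{j}|$ by cyclic coordinate descent, a standard algorithm chosen here purely for illustration. The function names (`soft_threshold`, `lasso_cd`), the simulated data and the seed are all assumptions, and the variables are assumed to be mean-centered so that the intercept can be dropped.

```python
import numpy as np

def soft_threshold(z, t):
    """Soft-thresholding operator: sign(z) * max(|z| - t, 0)."""
    return np.sign(z) * max(abs(z) - t, 0.0)

def lasso_cd(X, y, lam, n_iter=500, tol=1e-10):
    """Minimise RSS + lam * sum_j |beta_j| by cyclic coordinate descent.

    Assumes y and the columns of X are mean-centered (no intercept).
    """
    n, p = X.shape
    beta = np.zeros(p)
    col_ss = (X ** 2).sum(axis=0)   # x_j' x_j for each column
    resid = y.copy()                # equals y - X @ beta while beta = 0
    for _ in range(n_iter):
        max_change = 0.0
        for j in range(p):
            # x_j' (partial residual that excludes x_j's own contribution)
            rho = X[:, j] @ resid + col_ss[j] * beta[j]
            # The first-order condition of RSS + lam*|b_j| gives threshold lam/2
            new_bj = soft_threshold(rho, lam / 2.0) / col_ss[j]
            if new_bj != beta[j]:
                resid -= X[:, j] * (new_bj - beta[j])
                max_change = max(max_change, abs(new_bj - beta[j]))
                beta[j] = new_bj
        if max_change < tol:
            break
    return beta

# Hypothetical simulated data: only the first two predictors matter.
rng = np.random.default_rng(0)
n, p = 200, 10
X = rng.standard_normal((n, p))
X -= X.mean(axis=0)
beta_true = np.zeros(p)
beta_true[:2] = [2.0, -1.0]
y = X @ beta_true + rng.standard_normal(n)
y -= y.mean()

beta_ols = np.linalg.lstsq(X, y, rcond=None)[0]
lam_max = 2.0 * np.max(np.abs(X.T @ y))   # smallest lam that zeroes every coefficient

beta_lam0 = lasso_cd(X, y, 0.0)            # no penalty: should match OLS
beta_mid = lasso_cd(X, y, 0.25 * lam_max)  # some shrinkage and selection
beta_big = lasso_cd(X, y, lam_max)         # heavy penalty: all zeros

print("nonzero coefficients at lam = 0:         ", np.count_nonzero(beta_lam0))
print("nonzero coefficients at lam = lam_max/4: ", np.count_nonzero(beta_mid))
print("nonzero coefficients at lam = lam_max:   ", np.count_nonzero(beta_big))
```

With this scaling of the objective, every coefficient is exactly zero once $\lambda \geq 2 \max_{j}\left|\sum_{i} x_{i j} y_{i}\right|$. Software packages often rescale the RSS term, for example by $1/(2n)$, so their $\lambda$ values are not directly comparable with the ones above.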

Choosing the tuning parameter, $\lambda$, is critical. We discuss below both the most popular method of tuning parameter choice, cross-validation, and the theory-derived ‘rigorous lasso’ approach.

We note here that our paper is concerned primarily with prediction and model selection with dependent data rather than causal inference. Estimates from regularised regression cannot be readily interpreted as causal, and statistical inference on these coefficients is complicated and an active area of research.

We are interested in applying the lasso in a single-equation time series framework, where the number of predictors may be large, either because the set of contemporaneous regressors is inherently large (as in a nowcasting application), and/or because the model has many lags.

Financial Econometrics | Literature Review

The literature on lag selection in VAR and ARMA models is very rich. Lütkepohl (2005) notes that fitting a $\operatorname{VAR}(R)$ model to a $\operatorname{VAR}(R)$ process yields a lower mean squared error than fitting a $\operatorname{VAR}(R+i)$ model: the larger model produces inferior forecasts, especially when the sample size is small. In practice, the order of the data generating process (DGP) is, of course, unknown, and we face a trade-off between out-of-sample prediction performance and model consistency. This suggests that it is advisable to avoid fitting VAR models with unnecessarily large orders. Hence, if an upper bound on the true order is known or suspected, the usual next step is to set up significance tests. In a causality context, Wald tests are useful. The likelihood ratio (LR) test can also be used to compare maximum log-likelihoods over the unrestricted and restricted parameter space.

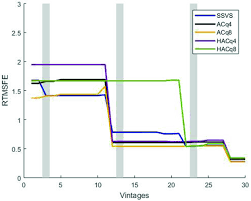

If the focus is on forecasting, information criteria are typically favoured. In this vein, Akaike (1969, 1971) proposed using the 1-step-ahead forecast mean squared error (MSE) to select the VAR order, which led to the final prediction error (FPE) criterion. Akaike’s information criterion (AIC), proposed by Akaike (1974), led to (almost) the same outcome through different reasoning. AIC, defined as $-2 \times \text{log-likelihood} + 2 \times \text{no. of regressors}$, is approximately equal to FPE in moderate and large sample sizes ($T$).

Two further information criteria are popular in applied work: the Hannan-Quinn criterion (Hannan and Quinn 1979) and the Bayesian information criterion (Schwarz 1978). These criteria perform better than AIC and FPE in terms of order selection consistency under certain conditions. However, AIC and FPE have better small-sample properties, and models based on these criteria produce superior forecasts despite not estimating the orders correctly (Lütkepohl 2005). Further, the popular information criteria (AIC, BIC, Hannan-Quinn) tend to underfit the model in terms of lag order selection in a small-$T$ context (Lütkepohl 2005).
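To make the criteria concrete, here is a minimal sketch of lag-order selection for a univariate autoregression, assuming Gaussian errors and OLS estimation. The AIC follows the definition given above; the BIC and Hannan-Quinn penalties use their standard forms, $\log(n)$ and $2 \log \log (n)$ per regressor. The helper names (`fit_ar_ols`, `info_criteria`), the simulated AR(2) process and the maximum lag of 8 are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def fit_ar_ols(y, order, max_lag):
    """OLS fit of an AR(order) model with intercept.

    Uses observations t = max_lag, ..., T-1 for every candidate order so that
    all models are compared on the same sample.
    Returns (rss, n_obs, n_regressors).
    """
    T = len(y)
    target = y[max_lag:]
    cols = [np.ones(T - max_lag)]
    for lag in range(1, order + 1):
        cols.append(y[max_lag - lag:T - lag])   # y_{t-lag}
    X = np.column_stack(cols)
    coef, *_ = np.linalg.lstsq(X, target, rcond=None)
    resid = target - X @ coef
    return float(resid @ resid), len(target), X.shape[1]

def info_criteria(rss, n, k):
    """AIC, BIC and Hannan-Quinn from the maximised Gaussian log-likelihood."""
    sigma2 = rss / n
    loglik = -0.5 * n * (np.log(2.0 * np.pi * sigma2) + 1.0)
    aic = -2.0 * loglik + 2.0 * k
    bic = -2.0 * loglik + np.log(n) * k
    hq = -2.0 * loglik + 2.0 * np.log(np.log(n)) * k
    return aic, bic, hq

# Hypothetical AR(2) process: y_t = 0.5 y_{t-1} - 0.3 y_{t-2} + e_t
rng = np.random.default_rng(1)
T, burn = 500, 100
e = rng.standard_normal(T + burn)
series = np.zeros(T + burn)
for t in range(2, T + burn):
    series[t] = 0.5 * series[t - 1] - 0.3 * series[t - 2] + e[t]
y = series[burn:]

max_lag = 8
table = np.array([info_criteria(*fit_ar_ols(y, r, max_lag))
                  for r in range(1, max_lag + 1)])
order_aic, order_bic, order_hq = (int(i) + 1 for i in table.argmin(axis=0))
print("selected order  AIC:", order_aic, " BIC:", order_bic, " HQ:", order_hq)
```

Because the BIC and Hannan-Quinn penalties per regressor exceed that of AIC at this sample size, the order they select can never be larger than the one AIC selects.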

Although applications of the lasso in a time series context are an active area of research, most analyses have focused solely on the use of the lasso in lag selection. For example, Hsu et al. (2008) adopt the lasso for VAR subset selection. The authors compare predictive performance on two-dimensional VAR(5) models and on US macroeconomic data, based on information criteria (AIC, BIC), the lasso, and combinations of the two. The findings indicate that the lasso performs better than conventional selection methods in terms of prediction mean squared errors in small samples. In a related application, Nardi and Rinaldo (2011) show that the lasso estimator is model selection consistent when fitting an autoregressive model where the maximal lag is allowed to increase with sample size. The authors note that the advantage of the lasso with growing $R$ in an $\mathrm{AR}(R)$ model is that the ‘fitted model will be chosen among all possible AR models whose maximal lag is between 1 and [...] $\log (n)$’ (Nardi and Rinaldo 2011).

Financial Econometrics | High-Dimensional Data and Sparsity

The high-dimensional linear model is:

$$
y_{i}=\boldsymbol{x}_{i}^{\prime} \boldsymbol{\beta}+\varepsilon_{i}
$$

Our initial exposition assumes independence, and to emphasise independence we index observations by $i$. Predictors are indexed by $j$. We have up to $p=\operatorname{dim}(\boldsymbol{\beta})$ potential predictors. $p$ can be very large, potentially even larger than the number of observations $n$. For simplicity we assume that all variables have already been mean-centered and rescaled to have unit variance, i.e., $\sum_{i} y_{i}=0$ and $\frac{1}{n} \sum_{i} y_{i}^{2}=1$, and similarly for the predictors $x_{i j}$.
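The standardisation convention above divides by $n$ rather than $n-1$. A minimal sketch of that preprocessing step follows; the function name `standardise` and the example data are assumptions made for illustration.

```python
import numpy as np

def standardise(a):
    """Mean-center each column and rescale it to unit variance (divisor n)."""
    a = np.asarray(a, dtype=float)
    centered = a - a.mean(axis=0)
    scale = np.sqrt((centered ** 2).mean(axis=0))   # population standard deviation
    return centered / scale

# Hypothetical raw predictors measured on very different scales.
rng = np.random.default_rng(2)
raw = rng.normal(loc=[10.0, -3.0, 0.5], scale=[5.0, 0.1, 20.0], size=(100, 3))
Z = standardise(raw)

print("column sums:        ", Z.sum(axis=0))
print("column mean squares:", (Z ** 2).mean(axis=0))
```

Putting all predictors on a common scale matters for the lasso because the penalty $\lambda \sum_{j}\left|\beta_{j}\right|$ treats every coefficient alike, whatever the units of its predictor.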

If we simply use OLS to estimate the model and $p$ is large, the result is very poor performance: we overfit badly and classical hypothesis testing leads to many false positives. If $p>n$, OLS is not even identified.
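The identification failure for $p>n$ can be illustrated directly. In the sketch below, which uses simulated data with assumed dimensions $n=20$ and $p=50$, two different coefficient vectors reproduce the training data exactly, so the least squares criterion cannot distinguish between them.

```python
import numpy as np

rng = np.random.default_rng(3)
n, p = 20, 50                                # more predictors than observations
X = rng.standard_normal((n, p))
y = rng.standard_normal(n)                   # pure noise: nothing to explain

rank = np.linalg.matrix_rank(X)              # at most n, hence smaller than p

# lstsq returns the minimum-norm least squares solution when p > n.
beta_min, *_ = np.linalg.lstsq(X, y, rcond=None)

# Any vector in the null space of X can be added without changing the fit.
_, _, Vt = np.linalg.svd(X, full_matrices=True)
null_vec = Vt[-1]                            # satisfies X @ null_vec ~ 0
beta_alt = beta_min + 5.0 * null_vec

rss_min = float(np.sum((y - X @ beta_min) ** 2))
rss_alt = float(np.sum((y - X @ beta_alt) ** 2))
print("rank of X:", rank, "with p =", p)
print("RSS for the two coefficient vectors:", rss_min, rss_alt)
```

Both fits drive the in-sample RSS to (numerically) zero even though `y` is pure noise, which is the overfitting problem in its most extreme form.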

How to proceed depends on what we believe the ‘true model’ is. Does the true model (DGP) include a very large number of regressors? In other words, is the set of predictors that enter the model ‘dense’? Or does the true model consist of a small number of regressors $s$, and all the other $p-s$ regressors do not enter (or equivalently, have zero coefficients)? In other words, is the set of predictors that enter the model ‘sparse’?

In this paper, we focus primarily on the ‘sparse’ case, and in particular on an estimator that is well suited to the sparse setting, namely the lasso introduced by Tibshirani (1996).

In the exact sparsity case, only $s$ of the $p$ potential regressors belong in the model, where

$$
s:=\sum_{j=1}^{p} \mathbb{1}\left\{\beta_{j} \neq 0\right\} \ll n
$$

In other words, most of the true coefficients $\beta_{j}$ are actually zero. The problem facing the researcher is that it is unknown which coefficients are zero and which are not.

We can also use the weaker assumption of approximate sparsity: some of the $\beta_{j}$ coefficients are well-approximated by zero, and the approximation error is sufficiently ‘small’. The discussion and methods we present in this paper typically carry over to the approximately sparse case, and for the most part we will use the term ‘sparse’ to refer to either setting.

The sparse high-dimensional model accommodates situations that are very familiar to researchers and that have typically presented them with difficult problems for which traditional statistical methods perform badly. These include both settings where the number $p$ of observed potential predictors is very large and the researcher does not know which ones to use, and settings where the number of observed variables is small but the number of potential predictors in the model is large because of interactions and other non-linearities, model uncertainty, temporal and spatial effects, etc.
