Reinforcement Learning | COMP579


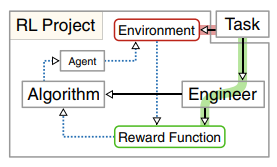

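Reinforcement learning is a machine learning training method based on rewarding desired behaviors and/or punishing undesired ones. In general, a reinforcement learning agent can perceive and interpret its environment, take actions, and learn through trial and error.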


Reinforcement Learning | Mathematics

Mathematical logic is another foundation of deep reinforcement learning. Discrete optimization and graph theory are of great importance for the formalization of reinforcement learning, as we will see in Sect. 2.2.2 on Markov decision processes. Mathematical formalizations have enabled the development of efficient planning and optimization algorithms that are at the core of current progress.

Planning and optimization are an important part of deep reinforcement learning. They are also related to the field of operations research, although there the emphasis is on (non-sequential) combinatorial optimization problems. In AI, planning and optimization are used as building blocks for creating learning systems for sequential, high-dimensional problems that can include visual, textual, or auditory input.

The field of symbolic reasoning is based on logic, and it is one of the earliest success stories in artificial intelligence. Out of work in symbolic reasoning came heuristic search [34], expert systems, and theorem proving systems. Well-known systems are the STRIPS planner [17], the Mathematica computer algebra system [13], the logic programming language PROLOG [14], and also systems such as SPARQL for semantic (web) reasoning [3, 7].

Symbolic AI focuses on reasoning in discrete domains, such as decision trees, planning, and games of strategy such as chess and checkers. Symbolic AI has driven success in methods to search the web, to power online social networks, and to power online commerce. These highly successful technologies are the basis of much of our modern society and economy. In 2011 the highest recognition in computer science, the Turing award, was awarded to Judea Pearl for work in causal reasoning (Fig. 1.9). Pearl later published an influential book to popularize the field [35].

Another area of mathematics that has played a large role in deep reinforcement learning is the field of continuous (numerical) optimization. Continuous methods are important, for example, in efficient gradient descent and backpropagation methods that are at the heart of current deep learning algorithms.
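To make the idea of continuous optimization concrete, here is a minimal sketch of plain gradient descent on a one-dimensional quadratic. The function names (`gradient_descent`, `grad_f`), the objective $f(x) = (x - 3)^2$, the learning rate, and the step count are all illustrative assumptions and do not come from the text; deep learning frameworks apply the same update rule to millions of parameters, with gradients computed by backpropagation.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, starting from x0."""
    x = x0
    for _ in range(steps):
        # Move a small distance in the direction of steepest descent.
        x = x - learning_rate * grad(x)
    return x


def grad_f(x):
    """Gradient of the illustrative objective f(x) = (x - 3)^2."""
    return 2.0 * (x - 3.0)


# Start far from the minimum; the iterate converges towards x = 3.
x_min = gradient_descent(grad_f, x0=0.0)
print(x_min)
```

With this learning rate each step shrinks the distance to the minimum by a constant factor of 0.8, so the iterate approaches 3 geometrically. A learning rate that is too large (here, above 1.0) would make the iterates diverge instead.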

Reinforcement Learning | Engineering

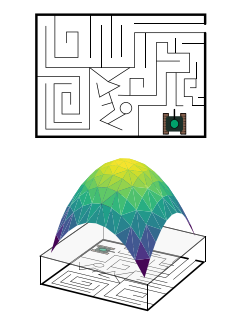

In engineering, the field of reinforcement learning is better known as optimal control. The theory of optimal control of dynamical systems was developed by Richard Bellman and Lev Pontryagin [8]. Optimal control theory originally focused on dynamical systems, and the technology and methods relate to continuous optimization methods such as those used in robotics (see Fig. 1.10 for an illustration of optimal control at work in docking two space vehicles). Optimal control theory is of central importance to many problems in engineering.

To this day, reinforcement learning and optimal control use different terminology and notation. States and actions are denoted as $s$ and $a$ in state-oriented reinforcement learning, whereas the engineering world of optimal control uses $x$ and $u$. In this book the former notation is used.
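As a rough illustration of the two notations, the same dynamic programming recursion can be written in both styles. The symbols other than $s$, $a$, $x$, and $u$ are assumptions introduced here for illustration: $r$ is a reward function, $\gamma$ a discount factor, $P$ a transition probability, $g$ a stage cost, and $f$ a deterministic system dynamics function. In reinforcement learning notation, the optimal value function is commonly written as

$$
V^{*}(s) = \max_{a}\Big[\, r(s,a) + \gamma \sum_{s'} P(s' \mid s, a)\, V^{*}(s') \Big]
$$

while a typical deterministic, undiscounted form in optimal control notation is

$$
J^{*}(x) = \min_{u}\Big[\, g(x,u) + J^{*}\big(f(x,u)\big) \Big]
$$

Besides the renaming of states and actions, note that control theory conventionally minimizes a cost where reinforcement learning maximizes a reward.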

Biology has a profound influence on computer science. Many nature-inspired optimization algorithms have been developed in artificial intelligence. An important nature-inspired school of thought is connectionist AI.

Mathematical logic and engineering approach intelligence as a top-down deductive process; observable effects in the real world follow from the application of theories and the laws of nature, and intelligence follows deductively from theory. In contrast, connectionism approaches intelligence in a bottom-up fashion. Connectionist intelligence emerges out of many low-level interactions. Intelligence follows inductively from practice. Intelligence is embodied: the bees in bee hives, the ants in ant colonies, and the neurons in the brain all interact, and out of the connections and interactions arises behavior that we recognize as intelligent [11].

Examples of the connectionist approach to intelligence are nature-inspired algorithms such as ant colony optimization [15], swarm intelligence [11, 26], evolutionary algorithms [4, 18, 23], robotic intelligence [12], and, last but not least, artificial neural networks and deep learning [19, 21, 30].

It should be noted that both the symbolic and the connectionist schools of AI have been very successful. After the enormous economic impact of search and symbolic AI (Google, Facebook, Amazon, Netflix), much of the interest in AI in the last two decades has been inspired by the success of the connectionist approach in computer language and vision. In 2018 the Turing award was awarded to three key researchers in deep learning: Bengio, Hinton, and LeCun (Fig. 1.11). Their most famous paper on deep learning may well be [30].

