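Machine learning (ML) is a type of artificial intelligence (AI) that allows software applications to become more accurate at predicting outcomes without being explicitly programmed to do so. Machine learning algorithms use historical data as input to predict new output values.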

Neurons and the threshold perceptron

The brain is composed of specialized cells. These include neurons, which are thought to be the main information-processing units, and glia, which have a variety of supporting roles. A schematic example of a neuron is shown in Fig. 4.1a. Neurons are specialized for electrical and chemical information processing. Each neuron has an extension called an axon, which sends signals, and receiving extensions called dendrites. The contact zone between two neurons is called a synapse. The sending neuron is often referred to as the presynaptic neuron, and the receiving cell as the postsynaptic neuron. When a neuron becomes active, it sends a spike down its axon, where it can release chemicals called neurotransmitters. These neurotransmitters can bind to receptors on the dendrites of the receiving neuron, which triggers the opening of ion channels. Ion channels are specialized proteins that form gates in the cell membrane. Through them, electrically charged ions can enter or leave the neuron and thereby change its voltage (membrane potential). The dendrites and cell body act like a cable and a capacitor that integrate (sum) the potentials from all synapses. When the combined voltage at the axon reaches a certain threshold, a spike is generated. This spike then travels down the axon and can affect further neurons downstream.

This outline of how a neuron functions is, of course, a major simplification. For example, we ignored the specific time course of the opening and closing of ion channels, and hence some of the more detailed dynamics of neural activity. We also ignored how electric signals are transmitted within the neuron; this is why such a model is called a point neuron. Despite these simplifications, the model captures some important aspects of neuronal function. At this point it is sufficient for building simplified models that demonstrate some of the information-processing capabilities of a single simplified neuron or of a network of such neurons. We will now describe this model in mathematical terms so that we can simulate such model neurons on a computer.

In 1943, Warren McCulloch and Walter Pitts were among the first to propose such a simple model of a neuron, which they called the threshold logical unit. It is now often referred to as the McCulloch-Pitts neuron. Such a unit is shown in Fig. 4.2A with three input channels, although real neurons typically have a much larger number of input channels. Input values are labeled $x$, with a subscript for each channel. Each channel has an associated weight parameter, $w_{i}$, representing the “strength” of a synapse.

The McCulloch-Pitts neuron operates as follows. Each input value is multiplied by the corresponding weight value, and these weighted values are then summed, mimicking the superposition of electric charges. Finally, if the weighted summed input is larger than a certain threshold value, $w_{0}$, the output is set to 1, and to 0 otherwise. Mathematically, this can be written as

$$
y(\mathbf{x} ; \mathbf{w})=\left\{\begin{array}{cl}
1 & \text { if } \sum_{i=1}^{n} w_{i} x_{i}=\mathbf{w}^{T} \mathbf{x}>w_{0} \\
0 & \text { otherwise }
\end{array}\right.
$$
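
The threshold unit above translates almost directly into code. Below is a minimal Python sketch, assuming that inputs and weights are given as plain sequences of numbers. The function name `mcculloch_pitts` and the example weights and threshold are illustrative choices, not taken from the text.

```python
from itertools import product


def mcculloch_pitts(x, w, w0):
    """Threshold logical unit: return 1 if sum_i w_i * x_i > w0, else 0."""
    if len(x) != len(w):
        raise ValueError("x and w must have the same length")
    # Weighted sum of the inputs (superposition of the synaptic inputs).
    weighted_sum = sum(w_i * x_i for w_i, x_i in zip(w, x))
    # Strict inequality, as in the definition: output 1 only above threshold.
    return 1 if weighted_sum > w0 else 0


# Hypothetical example with three input channels, unit weights and w0 = 1.5:
# the unit fires when at least two of the three inputs are active.
weights = [1.0, 1.0, 1.0]
for x in product((0, 1), repeat=3):
    print(x, mcculloch_pitts(x, weights, w0=1.5))
```

With these weights the unit acts as a majority vote. Lowering the threshold to 0.5 would make it fire for any active input, which is a logical OR.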

This simple neuron model can be written in a more generic form that we will call the perceptron. In this more general model, we calculate the output of a neuron by applying a gain function $g$ to the weighted summed input,

$$
y(\mathbf{x} ; \mathbf{w})=g\left(\mathbf{w}^{T} \mathbf{x}\right)
$$

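where $\mathbf{w}$ are parameters that need to be set to specific values; in other words, they are the parameters of our parameterized model for supervised learning. We will come back later to the question of how precisely to choose them. For the generic form to include the threshold $w_{0}$, the threshold can be absorbed into the weighted sum. One way to do this is to extend $\mathbf{x}$ with a constant input $x_{0}=-1$ and extend $\mathbf{w}$ with the weight $w_{0}$. The extended weighted sum then equals $\sum_{i=1}^{n} w_{i} x_{i}-w_{0}$, which is positive exactly when the summed input exceeds the threshold. In these terms, the original McCulloch-Pitts neuron is a threshold perceptron with a threshold gain function,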

$$
g(x)=\left\{\begin{array}{ll}
1 & \text { if } x>0 \\
0 & \text { otherwise }
\end{array}\right.
$$
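
The generic form $g(\mathbf{w}^{T}\mathbf{x})$ with this threshold gain function can be checked against the McCulloch-Pitts unit in code. This sketch assumes a common convention that the text does not state explicitly: the threshold is treated as an extra weight $w_{0}$ on a constant input $x_{0}=-1$. The names `threshold_gain`, `perceptron` and `augment` and the parameter values are hypothetical.

```python
from itertools import product


def threshold_gain(u):
    """Threshold gain function: g(u) = 1 if u > 0, else 0."""
    return 1 if u > 0 else 0


def perceptron(x, w, g=threshold_gain):
    """Generic perceptron y = g(w^T x) for plain Python sequences."""
    return g(sum(w_i * x_i for w_i, x_i in zip(w, x)))


def augment(x):
    """Prepend the constant input x_0 = -1 that carries the threshold weight w_0."""
    return [-1.0] + list(x)


# Hypothetical parameters: threshold w0 = 1.5 followed by three unit weights.
w0, weights = 1.5, [1.0, 1.0, 1.0]
w_aug = [w0] + weights

for x in product((0, 1), repeat=3):
    # Direct threshold test from the McCulloch-Pitts definition.
    direct = 1 if sum(wi * xi for wi, xi in zip(weights, x)) > w0 else 0
    # The generic form on the augmented input must agree with it.
    assert perceptron(augment(x), w_aug) == direct
    print(x, perceptron(augment(x), w_aug))
```

Absorbing the threshold in this way lets every parameter, including $w_{0}$, be handled as an ordinary weight. This is convenient once the weights are learned rather than set by hand.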

This threshold gain function is a first example of a non-linear function that transforms the sum of the weighted inputs. The gain function is sometimes called the activation function, the transfer function, or the output function in the neural network literature. Non-linear gain functions are an important part of artificial neural networks as further discussed in later chapters.
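
For illustration, here are a few non-linear gain functions that are widely used in the neural-network literature. The selection (logistic sigmoid, hyperbolic tangent, rectified linear) is ours, not the text's. The logistic function is written in a numerically stable form so that large negative inputs do not overflow `math.exp`.

```python
import math


def threshold(u):
    """Threshold (step) gain: 1 if u > 0, else 0."""
    return 1.0 if u > 0 else 0.0


def logistic(u):
    """Logistic sigmoid 1 / (1 + exp(-u)), written to avoid overflow for large |u|."""
    if u >= 0:
        return 1.0 / (1.0 + math.exp(-u))
    z = math.exp(u)  # u < 0, so exp(u) < 1 and cannot overflow
    return z / (1.0 + z)


def tanh(u):
    """Hyperbolic tangent: smooth, with outputs in (-1, 1)."""
    return math.tanh(u)


def relu(u):
    """Rectified linear gain: max(0, u)."""
    return max(0.0, u)


inputs = [-2.0, -0.5, 0.0, 0.5, 2.0]
for name, g in [("threshold", threshold), ("logistic", logistic),
                ("tanh", tanh), ("relu", relu)]:
    print(f"{name:>9}:", [round(g(u), 3) for u in inputs])

# A steep logistic, logistic(beta * u) with large beta, approaches the
# threshold function for every u != 0 (at u = 0 it stays at 0.5).
beta = 50.0
print([round(logistic(beta * u), 3) for u in inputs])
```

The last line shows one way to view the logistic function: as a smooth version of the threshold gain. Its smoothness matters once the weights are adjusted by gradient-based learning.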

Multilayer perceptron (MLP) and Keras

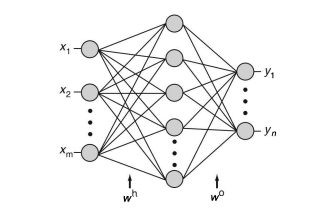

To represent more complex functions with perceptron-like elements, we now build networks of artificial neurons. We start with a multilayer perceptron (MLP) as shown in Fig. 4.3. This network is called a two-layer network because it has two processing layers. The input layer simply represents the feature vector of a sensory input. The next two layers are composed of perceptron-like elements. Each of these elements sums the inputs from the previous layer, weighted by the strengths of the corresponding connections, and applies a non-linear gain function $\sigma(x)$ to this sum,

$$
y_{i}=\sigma\left(\sum_{j} w_{i j} x_{j}\right)
$$

Here we use the common notation in which variables $x$ represent inputs and $y$ represents outputs. The synaptic weights are written as $w_{i j}$. In the case of a single output node, the above equation corresponds to a single-layer perceptron. With more layers, we need to distinguish the different neurons and weights, for example by adding superscripts to the weights as in Fig. 4.3. The output of this network is calculated as

$$
y_{i}=\sigma\left(\sum_{j} w_{i j}^{\mathrm{o}} \sigma\left(\sum_{k} w_{j k}^{\mathrm{h}} x_{k}\right)\right),
$$

where we use the superscript “o” for the output weights and the superscript “h” for the hidden weights. These formulae represent a parameterized function, which is the model in the machine learning context.
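
The two-layer formula can be evaluated with a few lines of plain Python. Below is a minimal sketch of the forward pass only, assuming the logistic function as $\sigma$ and no bias terms, as in the equations. The layer sizes, random weights and the names `layer` and `mlp_forward` are hypothetical, and no learning is performed.

```python
import math
import random


def sigmoid(u):
    """Logistic gain function sigma(u) = 1 / (1 + exp(-u)), computed stably."""
    if u >= 0:
        return 1.0 / (1.0 + math.exp(-u))
    z = math.exp(u)
    return z / (1.0 + z)


def layer(weights, inputs):
    """One perceptron layer: y_i = sigma(sum_j weights[i][j] * inputs[j])."""
    return [sigmoid(sum(w_ij * x_j for w_ij, x_j in zip(row, inputs)))
            for row in weights]


def mlp_forward(x, w_h, w_o):
    """Two-layer MLP: y_i = sigma(sum_j w_o[i][j] * sigma(sum_k w_h[j][k] * x[k]))."""
    hidden = layer(w_h, x)        # hidden activations, one per row of w_h
    return layer(w_o, hidden)     # output activations, one per row of w_o


# Hypothetical sizes and weights: 3 inputs, 4 hidden neurons, 2 outputs.
random.seed(0)
n_in, n_hidden, n_out = 3, 4, 2
w_h = [[random.gauss(0, 1) for _ in range(n_in)] for _ in range(n_hidden)]
w_o = [[random.gauss(0, 1) for _ in range(n_hidden)] for _ in range(n_out)]

y = mlp_forward([0.5, -1.0, 2.0], w_h, w_o)
print(y)  # two output activations, each between 0 and 1
```

In Keras, a comparable network could be assembled from two `Dense` layers with `activation="sigmoid"`. Passing `use_bias=False` omits the bias terms, as in the equations above. Choosing the weights from data is the learning step, which this sketch leaves out.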

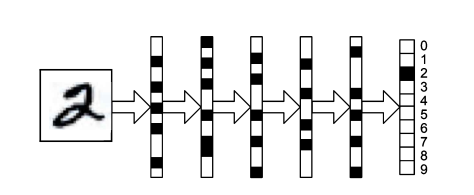

Representational learning

Here we discuss feedforward neural networks, which can be seen as implementing transformations, or mapping functions, from an input space to a latent space, and from there to an output space. The latent space is spanned by the neurons between the input nodes and the output nodes, which are sometimes called hidden neurons. Of course, we can always observe the activity of these nodes in our programs, so they are not really hidden. All the weights are learned from data, so the transformations implemented by the neural network are learned from examples. However, we can guide these transformations through the choice of architecture. The latent representations should be learned so that the final classification in the last layer is much easier than it would be from the raw sensory space. In addition, the network, and hence the representation it implements, should make generalization to previously unseen examples easy and robust. It is therefore useful to pause here and discuss representations.
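
A classic toy case shows how a latent representation can make the final classification easier: the XOR function. In the text the weights are learned. In this sketch they are set by hand purely for illustration, and the function names are hypothetical. The hidden layer maps each input to an (OR, AND) code. In that latent space a single threshold unit can separate the classes, whereas no single threshold unit can do so on the raw inputs.

```python
from itertools import product


def unit(x, w, theta):
    """Threshold unit: 1 if sum_i w_i x_i > theta, else 0."""
    return 1 if sum(w_i * x_i for w_i, x_i in zip(w, x)) > theta else 0


def hidden_representation(x):
    """Hand-picked hidden layer (hypothetical weights, not learned):
    h1 fires for the logical OR of the inputs, h2 for the logical AND."""
    return (unit(x, [1, 1], 0.5), unit(x, [1, 1], 1.5))


def xor_net(x):
    """Output unit reads the latent code: fires when h1 is on and h2 is off."""
    return unit(hidden_representation(x), [1, -1], 0.5)


for x in product((0, 1), repeat=2):
    print(x, "->", hidden_representation(x), "->", xor_net(x))

# Search a grid of weights and thresholds for a single unit on the raw inputs.
grid = [i / 2 for i in range(-4, 5)]
xor_table = {x: x[0] ^ x[1] for x in product((0, 1), repeat=2)}
found = any(all(unit(x, [a, b], t) == y for x, y in xor_table.items())
            for a in grid for b in grid for t in grid)
print("single unit on raw inputs matches XOR:", found)  # False: XOR is not linearly separable
```

The grid search only illustrates the point. The underlying reason is that no straight line separates the XOR classes in the input plane, while in the (OR, AND) latent space the positive class is the single point (1, 0).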
