数学代写|凸优化作业代写Convex Optimization代考|IE3078

如果你也在 怎样代写凸优化Convex optimization 这个学科遇到相关的难题,请随时右上角联系我们的24/7代写客服。凸优化Convex optimization由于在大规模资源分配、信号处理和机器学习等领域的广泛应用,人们对凸优化的兴趣越来越浓厚。本书旨在解决凸优化问题的算法的最新和可访问的发展。

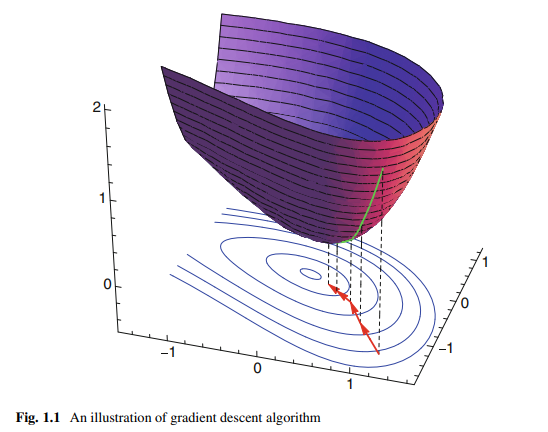

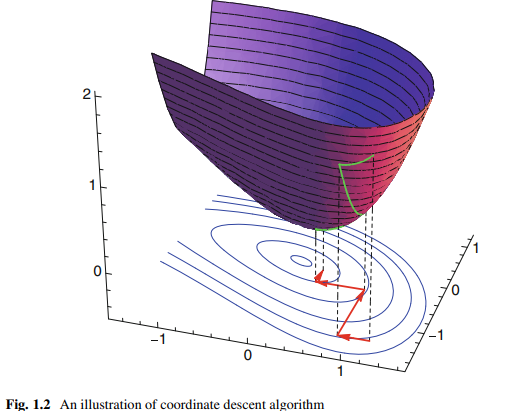

凸优化Convex optimization无约束可以很容易地用梯度下降(最陡下降的特殊情况)或牛顿方法解决,结合线搜索适当的步长;这些可以在数学上证明收敛速度很快,尤其是后一种方法。如果目标函数是二次函数,也可以使用KKT矩阵技术求解具有线性等式约束的凸优化(它推广到牛顿方法的一种变化,即使初始化点不满足约束也有效),但通常也可以通过线性代数消除等式约束或解决对偶问题来解决。

statistics-lab™ 为您的留学生涯保驾护航 在代写凸优化Convex Optimization方面已经树立了自己的口碑, 保证靠谱, 高质且原创的统计Statistics代写服务。我们的专家在代写凸优化Convex Optimization代写方面经验极为丰富,各种代写凸优化Convex Optimization相关的作业也就用不着说。

数学代写|凸优化作业代写Convex Optimization代考|Maximum Likelihood Estimation

Suppose $\boldsymbol{x} \in \mathbb{R}^n$ follows a probability density function $f(\boldsymbol{x} ; \boldsymbol{\theta})$ that is governed by some parameter $\boldsymbol{\theta} \in \Theta$. We had known the exact form of function $f(\cdot)$ but not the value of $\theta$. ${ }^1$

We need to determine the value of $\boldsymbol{\theta}$ from a series of data samples $\boldsymbol{x}_i \in \mathbb{R}^n, i=$ $1, \ldots, n$. One popular method to reach this goal is using the maximum likelihood estimation (MLE) method $[2,3]$.

The likelihood function $L$ is usually defined as the function obtained by reversing the roles of $\boldsymbol{x}$ and $\boldsymbol{\theta}$ as

$$

L\left(\boldsymbol{\theta} ; \boldsymbol{x}_1, \ldots, \boldsymbol{x}_n\right)=f\left(\boldsymbol{x}_1, \ldots, \boldsymbol{x}_n ; \boldsymbol{\theta}\right)

$$

In MLE, we will try to find a value $\boldsymbol{\theta}^$ of the parameter $\boldsymbol{\theta}$ that maximizes $L(\boldsymbol{\theta} ; \boldsymbol{x})$ for all the data sample. Here $\boldsymbol{\theta}^$ is called a maximum likelihood estimator of $\boldsymbol{\theta}$. In this book, we assume the number of data samples is large enough to make a good estimation. Some further discussions on maximum likelihood method can be found in [4].

If these data are independent and identically distributed (i.i.d.), the resulting likelihood function for the samples can be written as

$$

L\left(\boldsymbol{\theta} ; \boldsymbol{x}1, \ldots, \boldsymbol{x}_n\right)=\prod{i=1}^n f\left(\boldsymbol{x}i ; \boldsymbol{\theta}\right) $$ Thus, the parameter estimation problem can then be formulated as the following optimization problem: $$ \max {\boldsymbol{\theta}} L\left(\boldsymbol{\theta} ; \boldsymbol{x}_1, \ldots, \boldsymbol{x}_n\right)

$$

Notice that the natural logarithm function $\ln (\cdot)$ is concave and strictly increasing. If the maximum value of $L(\boldsymbol{\theta} ; \boldsymbol{x})$ does exist, it will occur at the same points as that of $\ln [L(\boldsymbol{\theta} ; \boldsymbol{x})]$. This function is called the log likelihood function and in many cases is easier to work out than the likelihood function, since $L(\boldsymbol{\theta} ; \boldsymbol{x})$ has a product structure.

数学代写|凸优化作业代写Convex Optimization代考|Measurements with iid Noise

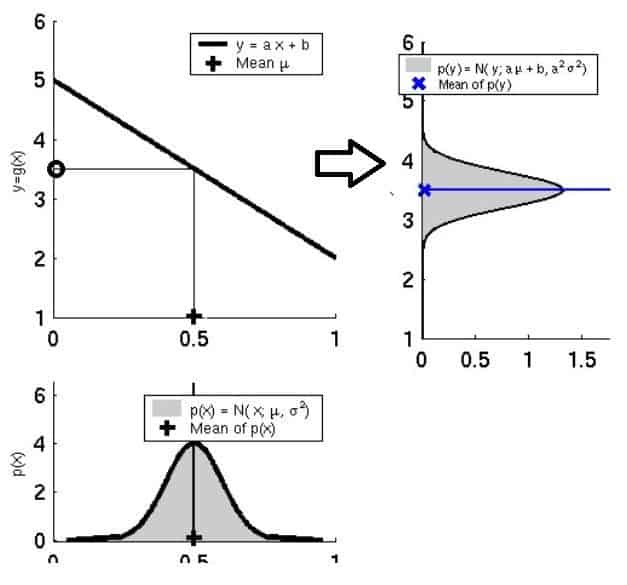

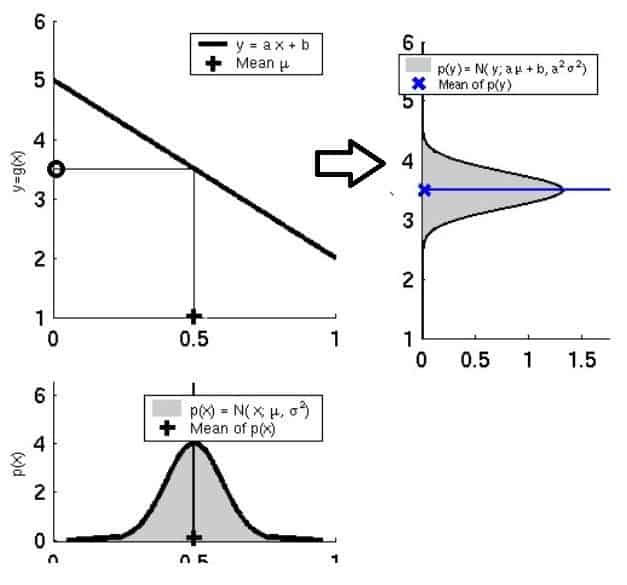

In many cases, we need to determine a vector parameter $\vartheta \in \mathbb{R}^p$ from a series of input-output measurement pairs $\left(\boldsymbol{x}_i, y_i\right), \boldsymbol{x}_i \in \mathbb{R}^p, y_i \in \mathbb{R}, i=1, \ldots, n$. The linear relationship between these variables can be written as

$$

y_i=\boldsymbol{\vartheta}^T \boldsymbol{x}_i+v_i

$$

where $v_i \in \mathbb{R}$ are i.i.d. random variables whose probability density function $f(v)$ is known to us.

We can still use the maximum likelihood estimation (MLE) method to estimate $\vartheta$. The corresponding likelihood function is formulated as

$$

L\left(\boldsymbol{\vartheta} ;\left(\boldsymbol{x}1, y_1\right), \ldots,\left(\boldsymbol{x}_n, y_n\right)\right)=\prod{i=1}^n f\left(y_i-\boldsymbol{\vartheta}^T \boldsymbol{x}_i ; \boldsymbol{\vartheta}\right)

$$

If the probability density function $f(v)$ is log-concave, the estimation of $\vartheta$ can be formulated as a convex optimization problem in terms of $\vartheta$.

Example 3.7 If $v_i$ follows a normal distribution with mean $\mu$ and variance $\sigma^2$, we have the log likelihood function written as

$$

\ln L\left(\boldsymbol{\vartheta} ;\left(\boldsymbol{x}1, y_1\right), \ldots,\left(\boldsymbol{x}_n, y_n\right)\right)=-n \ln \sigma-\frac{n}{2} \ln 2 \pi-\frac{1}{2 \sigma^2} \sum{i=1}^n\left(y_i-\boldsymbol{\vartheta}^T \boldsymbol{x}i-\mu\right)^2 $$ Thus, the maximum likelihood estimator of $\vartheta$ is the optimal solution of the following least squares approximation problem; see also discussions in Sect. 4.1 $$ \min {\vartheta} \sum_{i=1}^n\left(y_i-\vartheta^T \boldsymbol{x}_i-\mu\right)^2

$$

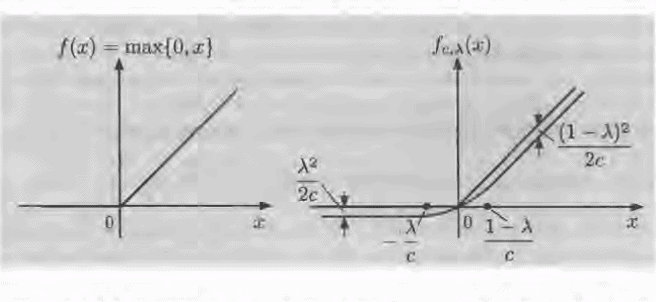

Example 3.8 If $v_i$ follows a Laplacian distribution with probability density function written as

$$

f(v)=\frac{1}{2 \tau} e^{-|v-\mu| / \tau}

$$

where $\tau>0$ and $\mu$ is the mean value.

凸优化代写

数学代写|凸优化作业代写Convex Optimization代考|Maximum Likelihood Estimation

假设$\boldsymbol{x} \in \mathbb{R}^n$遵循由某个参数$\boldsymbol{\theta} \in \Theta$控制的概率密度函数$f(\boldsymbol{x} ; \boldsymbol{\theta})$。我们知道函数$f(\cdot)$的确切形式,但不知道$\theta$的值。 ${ }^1$

我们需要从一系列数据样本$\boldsymbol{x}_i \in \mathbb{R}^n, i=$$1, \ldots, n$中确定$\boldsymbol{\theta}$的值。实现这一目标的一个流行方法是使用最大似然估计(MLE)方法$[2,3]$。

似然函数$L$通常定义为将$\boldsymbol{x}$和$\boldsymbol{\theta}$ as的作用反向得到的函数

$$

L\left(\boldsymbol{\theta} ; \boldsymbol{x}_1, \ldots, \boldsymbol{x}_n\right)=f\left(\boldsymbol{x}_1, \ldots, \boldsymbol{x}_n ; \boldsymbol{\theta}\right)

$$

在MLE中,我们将尝试找到参数$\boldsymbol{\theta}$的值$\boldsymbol{\theta}^$,使所有数据样本的$L(\boldsymbol{\theta} ; \boldsymbol{x})$最大化。这里$\boldsymbol{\theta}^$被称为$\boldsymbol{\theta}$的极大似然估计量。在本书中,我们假设数据样本的数量足够大,可以进行很好的估计。关于极大似然法的进一步讨论可以在[4]中找到。

如果这些数据是独立且同分布的(i.i.d),则得到的样本似然函数可以写成

$$

L\left(\boldsymbol{\theta} ; \boldsymbol{x}1, \ldots, \boldsymbol{x}_n\right)=\prod{i=1}^n f\left(\boldsymbol{x}i ; \boldsymbol{\theta}\right) $$因此,参数估计问题可以表示为如下优化问题:$$ \max {\boldsymbol{\theta}} L\left(\boldsymbol{\theta} ; \boldsymbol{x}_1, \ldots, \boldsymbol{x}_n\right)

$$

注意,自然对数函数$\ln (\cdot)$是凹的,并且严格递增。如果确实存在$L(\boldsymbol{\theta} ; \boldsymbol{x})$的最大值,它将出现在与$\ln [L(\boldsymbol{\theta} ; \boldsymbol{x})]$相同的点上。这个函数被称为对数似然函数,在很多情况下比似然函数更容易计算,因为$L(\boldsymbol{\theta} ; \boldsymbol{x})$有一个乘积结构。

数学代写|凸优化作业代写Convex Optimization代考|Measurements with iid Noise

在许多情况下,我们需要从一系列输入-输出测量对$\left(\boldsymbol{x}_i, y_i\right), \boldsymbol{x}_i \in \mathbb{R}^p, y_i \in \mathbb{R}, i=1, \ldots, n$中确定一个矢量参数$\vartheta \in \mathbb{R}^p$。这些变量之间的线性关系可以写成

$$

y_i=\boldsymbol{\vartheta}^T \boldsymbol{x}_i+v_i

$$

其中$v_i \in \mathbb{R}$为i.i.d随机变量,其概率密度函数$f(v)$为已知。

我们仍然可以使用极大似然估计(MLE)方法来估计$\vartheta$。相应的似然函数表示为

$$

L\left(\boldsymbol{\vartheta} ;\left(\boldsymbol{x}1, y_1\right), \ldots,\left(\boldsymbol{x}_n, y_n\right)\right)=\prod{i=1}^n f\left(y_i-\boldsymbol{\vartheta}^T \boldsymbol{x}_i ; \boldsymbol{\vartheta}\right)

$$

如果概率密度函数$f(v)$是log-凹的,则对$\vartheta$的估计可以表示为一个关于$\vartheta$的凸优化问题。

如果$v_i$服从均值$\mu$和方差$\sigma^2$的正态分布,我们将对数似然函数写成

$$

\ln L\left(\boldsymbol{\vartheta} ;\left(\boldsymbol{x}1, y_1\right), \ldots,\left(\boldsymbol{x}n, y_n\right)\right)=-n \ln \sigma-\frac{n}{2} \ln 2 \pi-\frac{1}{2 \sigma^2} \sum{i=1}^n\left(y_i-\boldsymbol{\vartheta}^T \boldsymbol{x}i-\mu\right)^2 $$因此,$\vartheta$的极大似然估计量是以下最小二乘近似问题的最优解;参见第4.1节$$ \min {\vartheta} \sum{i=1}^n\left(y_i-\vartheta^T \boldsymbol{x}_i-\mu\right)^2

$$的讨论

例3.8如果$v_i$遵循拉普拉斯分布,其概率密度函数为

$$

f(v)=\frac{1}{2 \tau} e^{-|v-\mu| / \tau}

$$

其中$\tau>0$和$\mu$为平均值。

统计代写请认准statistics-lab™. statistics-lab™为您的留学生涯保驾护航。

微观经济学代写

微观经济学是主流经济学的一个分支,研究个人和企业在做出有关稀缺资源分配的决策时的行为以及这些个人和企业之间的相互作用。my-assignmentexpert™ 为您的留学生涯保驾护航 在数学Mathematics作业代写方面已经树立了自己的口碑, 保证靠谱, 高质且原创的数学Mathematics代写服务。我们的专家在图论代写Graph Theory代写方面经验极为丰富,各种图论代写Graph Theory相关的作业也就用不着 说。

线性代数代写

线性代数是数学的一个分支,涉及线性方程,如:线性图,如:以及它们在向量空间和通过矩阵的表示。线性代数是几乎所有数学领域的核心。

博弈论代写

现代博弈论始于约翰-冯-诺伊曼(John von Neumann)提出的两人零和博弈中的混合策略均衡的观点及其证明。冯-诺依曼的原始证明使用了关于连续映射到紧凑凸集的布劳威尔定点定理,这成为博弈论和数学经济学的标准方法。在他的论文之后,1944年,他与奥斯卡-莫根斯特恩(Oskar Morgenstern)共同撰写了《游戏和经济行为理论》一书,该书考虑了几个参与者的合作游戏。这本书的第二版提供了预期效用的公理理论,使数理统计学家和经济学家能够处理不确定性下的决策。

微积分代写

微积分,最初被称为无穷小微积分或 “无穷小的微积分”,是对连续变化的数学研究,就像几何学是对形状的研究,而代数是对算术运算的概括研究一样。

它有两个主要分支,微分和积分;微分涉及瞬时变化率和曲线的斜率,而积分涉及数量的累积,以及曲线下或曲线之间的面积。这两个分支通过微积分的基本定理相互联系,它们利用了无限序列和无限级数收敛到一个明确定义的极限的基本概念 。

计量经济学代写

什么是计量经济学?

计量经济学是统计学和数学模型的定量应用,使用数据来发展理论或测试经济学中的现有假设,并根据历史数据预测未来趋势。它对现实世界的数据进行统计试验,然后将结果与被测试的理论进行比较和对比。

根据你是对测试现有理论感兴趣,还是对利用现有数据在这些观察的基础上提出新的假设感兴趣,计量经济学可以细分为两大类:理论和应用。那些经常从事这种实践的人通常被称为计量经济学家。

Matlab代写

MATLAB 是一种用于技术计算的高性能语言。它将计算、可视化和编程集成在一个易于使用的环境中,其中问题和解决方案以熟悉的数学符号表示。典型用途包括:数学和计算算法开发建模、仿真和原型制作数据分析、探索和可视化科学和工程图形应用程序开发,包括图形用户界面构建MATLAB 是一个交互式系统,其基本数据元素是一个不需要维度的数组。这使您可以解决许多技术计算问题,尤其是那些具有矩阵和向量公式的问题,而只需用 C 或 Fortran 等标量非交互式语言编写程序所需的时间的一小部分。MATLAB 名称代表矩阵实验室。MATLAB 最初的编写目的是提供对由 LINPACK 和 EISPACK 项目开发的矩阵软件的轻松访问,这两个项目共同代表了矩阵计算软件的最新技术。MATLAB 经过多年的发展,得到了许多用户的投入。在大学环境中,它是数学、工程和科学入门和高级课程的标准教学工具。在工业领域,MATLAB 是高效研究、开发和分析的首选工具。MATLAB 具有一系列称为工具箱的特定于应用程序的解决方案。对于大多数 MATLAB 用户来说非常重要,工具箱允许您学习和应用专业技术。工具箱是 MATLAB 函数(M 文件)的综合集合,可扩展 MATLAB 环境以解决特定类别的问题。可用工具箱的领域包括信号处理、控制系统、神经网络、模糊逻辑、小波、仿真等。