Image Processing | GPY470

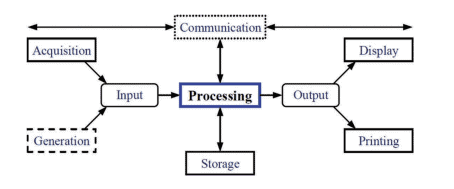

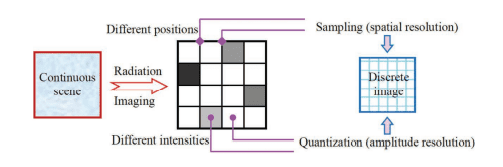

Image processing is the use of a digital computer to process digital images by means of an algorithm.

Material Selection and Contents

This book focuses on image processing (IP) in the narrow sense (refer to Zhang 2017a). It introduces seven technical fields and directions that currently receive widespread attention and are commonly used in many applications: (i) image denoising, (ii) image deblurring, (iii) image repairing (inpainting), (iv) image defogging, (v) image reconstruction from projection, (vi) image watermarking, and (vii) image super-resolution. Related terms appearing in the book can be found in Zhang (2021).

Each chapter focuses on one type of technology. The following summarizes the contents of these seven chapters separately:

Chapter 2 introduces image denoising technology. Based on an analysis of common noise types and their characteristics, it first summarizes some typical filtering-based methods for eliminating noise and then discusses a selective filtering framework that can target different types of noise. It also introduces switching median filtering methods and their improvements, which have been studied extensively in recent years. Finally, some recent developments and further research are included.

Chapter 3 introduces image deblurring technology. After explaining traditional image deblurring techniques, it discusses estimation of the motion blur kernel with the help of a neural network and a deblurring method for low-resolution images. Finally, some recent developments and further research are included.

Chapter 4 introduces image inpainting technology. First, the origin of the name is explained. Then an algorithm combining sparse representation, a weighted sparse nonnegative matrix factorization algorithm, and a context-driven hybrid method are introduced. Finally, some recent developments and further research are included.

Chapter 5 introduces image defogging technology. First, it introduces the typical dark channel prior defogging algorithm and discusses some techniques that address its shortcomings. It also introduces an algorithm that focuses on reducing distortion, as well as the subjective and objective evaluation of the defogging effect. Finally, some recent developments and further research are included.
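As a small illustration of the quantity behind the dark channel prior, the sketch below computes a dark channel: for each pixel, the minimum over the colour channels, followed by the minimum over a local patch. It covers only this first step, not a full defogging pipeline, and it is not taken from the book. The image layout (rows of `(r, g, b)` tuples with values in [0, 1]), the function name `dark_channel`, and the default patch size are assumptions made for the example.

```python
def dark_channel(image, patch=3):
    """Return the dark channel of an RGB image.

    `image` is a list of rows of (r, g, b) tuples in [0, 1]; `patch` is the
    side length of the square neighbourhood (an assumed default).
    """
    rows, cols = len(image), len(image[0])
    half = patch // 2
    # Step 1: minimum over the three colour channels at each pixel.
    channel_min = [[min(pixel) for pixel in row] for row in image]
    # Step 2: minimum of those values over the local patch, clipped at borders.
    dark = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            dark[r][c] = min(
                channel_min[rr][cc]
                for rr in range(max(0, r - half), min(rows, r + half + 1))
                for cc in range(max(0, c - half), min(cols, c + half + 1))
            )
    return dark


# A bright, haze-like 3x3 image with one darker pixel in the centre.
hazy = [[(0.8, 0.9, 0.7)] * 3 for _ in range(3)]
hazy[1][1] = (0.2, 0.5, 0.1)

per_pixel = dark_channel(hazy, patch=1)  # channel minimum only
local = dark_channel(hazy, patch=3)      # channel minimum, then patch minimum
print(per_pixel[0][0], local[0][0])
```

The prior itself is the observation that, in haze-free outdoor images, this value tends to be close to zero in most non-sky patches, so a high dark channel suggests haze.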

Structure and Arrangement

The following chapters of this book share a relatively consistent style. Each chapter opens with an introduction to the basic concepts and overall content, lists some application fields and scenarios for the corresponding technology (reflecting the book's application-oriented approach), and gives an overview of each section so that the reader can grasp the structure of the whole chapter.

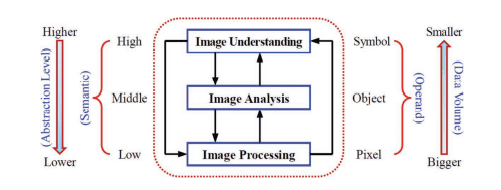

There are some similarities in the arrangement and structure of the body content of each chapter. Each chapter has multiple sections, which can be divided into the following three parts from beginning to end (corresponding to the three levels in Figure 1.14).

- Principle and technology overview

The first section of each chapter has content like that of a typical textbook. It introduces the principle, history, use, method overview, and development of the image technology. The goal is to give comprehensive, basic information, most of which comes from professional textbooks (refer to Zhang 2017a); many examples and demonstrations can be found in Zhang (2011).

- Description of specific technical methods

The middle sections of each chapter combine content from textbooks and monographs. They introduce several typical related techniques and describe their methods in detail. The goal is to present ideas that solve the problems faced by this type of image technology effectively and efficiently, and to provide solutions for practical applications. These sections may build on one another progressively or stand in parallel, relatively independent of each other. Much of the content is drawn from journal and conference papers; most of it has been followed up by further research but has not yet been written into professional textbooks or books.

- Introduction to recent developments and directions

The last section of each chapter is more research-oriented. It is based on an analysis and review of relevant new literature from important journals and conference proceedings in recent years. The goal is to provide some of the latest information on the techniques in focus and to help the reader understand progress and trends in the corresponding technology.

The arrangement of the main text in sections of each chapter is shown in Table 1.5.

To gain only a technical overview, one need only read the sections that introduce the principles. To solve practical problems, one needs to learn some of the typical techniques. To master the technology in greater depth, one can also consult the recent progress and trends and read more of the references.
